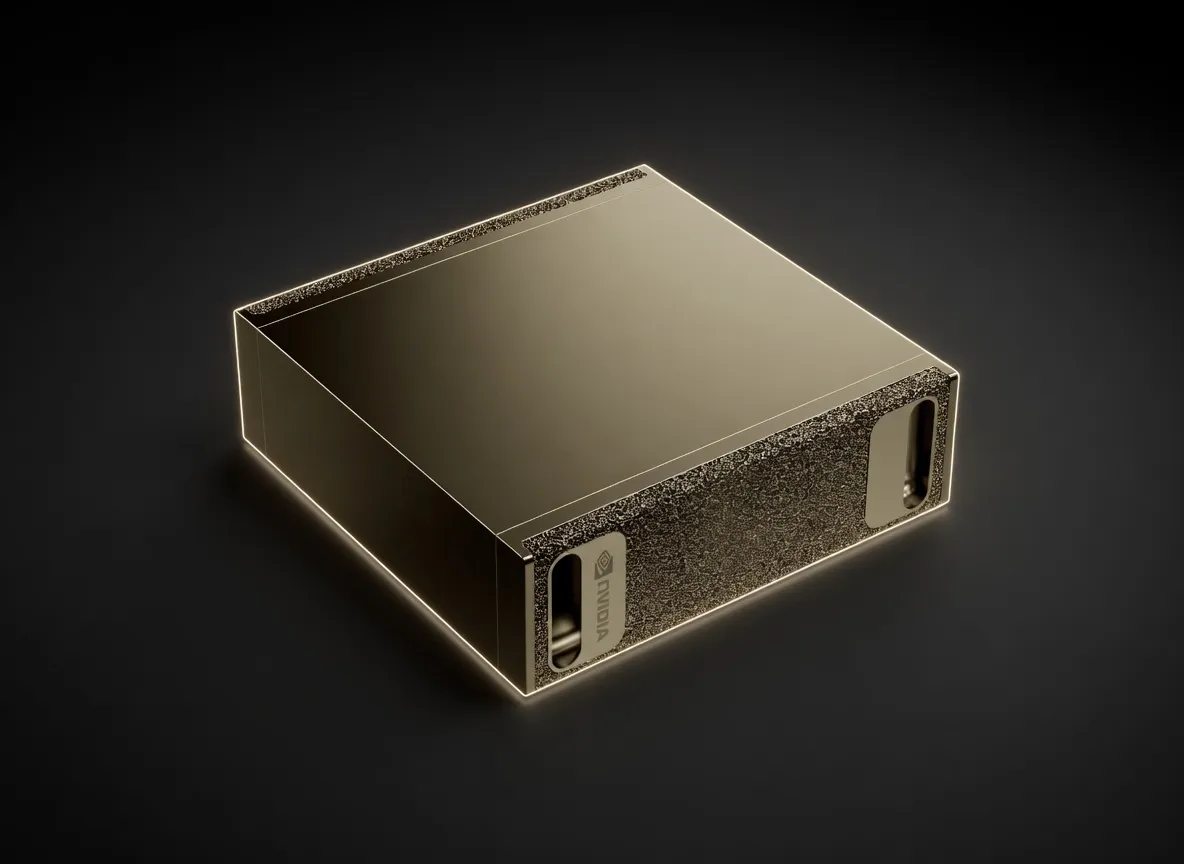

NVIDIA's reference architecture powered by the GB10 Grace Blackwell Superchip, delivering up to 1 PFLOP of FP4 AI performance in a compact, edge-ready form factor.

The NVIDIA DGX Spark represents a fundamental shift in local AI development, moving the Blackwell architecture out of the data center and onto the engineer's desk. It is a reference architecture designed for practitioners who require massive VRAM and high-throughput inference without the latency or privacy concerns of cloud-based providers.

At an MSRP of $4,699, the DGX Spark is positioned as a premium AI workstation for edge deployments and high-end local development. It bridges the gap between consumer-grade RTX 4090 builds, which are limited by PCIe lanes and VRAM capacity, and full-scale DGX H100 racks. For teams building agentic workflows or deploying local LLMs, the Spark provides the headroom to run frontier-class models in a silent, 140W TDP form factor with a footprint of only 150mm x 150mm.

The core of the DGX Spark is the GB10 Grace Blackwell Superchip. While consumer hardware often prioritizes rasterization, the Spark is optimized for tensor operations and high-speed memory access.

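The 128GB memory capacity is the defining feature of the DGX Spark. The memory is a unified pool shared by the Grace CPU and the Blackwell GPU (referred to as VRAM throughout this page), which makes the Spark one of the few "single-chip" solutions capable of running models of up to roughly 200B parameters locally.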

The 128GB buffer is particularly valuable for long-context operations. Engineers can run Llama 3.1 with a 128k context window without running out of memory, a workload that frequently exhausts 24GB or 48GB consumer cards. It also handles multimodal models such as LLaVA-v1.6 and CogVLM, with enough memory to hold high-resolution image embeddings alongside large text prompts.
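To see why a 128k context strains smaller cards, the key-value cache can be estimated from a model's attention shape. The sketch below is a rough estimate, not a measurement: it assumes a single sequence, an FP16 cache, and the layer and head counts of the published Llama 3.1 8B and 70B configurations (32 and 80 layers, 8 KV heads, head dimension 128). The function name `kv_cache_bytes` is hypothetical.

```python
GIB = 1024 ** 3


def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   context_tokens: int, bytes_per_value: int = 2) -> int:
    """Estimate KV-cache size for one sequence.

    The leading factor of 2 accounts for storing both a key and a value
    tensor in every layer. bytes_per_value=2 assumes an FP16/BF16 cache.
    """
    return 2 * num_layers * num_kv_heads * head_dim * context_tokens * bytes_per_value


context = 128 * 1024  # 128k tokens

# Assumed architecture values (Llama 3.1 8B and 70B public configs).
llama31_8b = kv_cache_bytes(num_layers=32, num_kv_heads=8, head_dim=128,
                            context_tokens=context)
llama31_70b = kv_cache_bytes(num_layers=80, num_kv_heads=8, head_dim=128,
                             context_tokens=context)

print(f"Llama 3.1 8B  KV cache at 128k: {llama31_8b / GIB:.1f} GiB")   # 16.0 GiB
print(f"Llama 3.1 70B KV cache at 128k: {llama31_70b / GIB:.1f} GiB")  # 40.0 GiB
```

These figures cover the cache only. Model weights and activations come on top, which is why a 24GB card can run out of memory at full context even with a small model.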

For practitioners, the best quality-to-speed tradeoff on this hardware is NVFP4. Using Blackwell's native FP4 support yields a 2.5x performance gain over launch-day benchmarks and roughly halves the weight footprint relative to 8-bit formats, so models that previously required 256GB of VRAM can run within the Spark's 128GB.
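The footprint claim follows from simple arithmetic on bits per weight. The sketch below is a back-of-the-envelope estimate that counts weights only. It ignores the per-block scale factors that block-scaled 4-bit formats carry, as well as the KV cache and activations, so real usage will be somewhat higher. The helper names are hypothetical.

```python
def weight_footprint_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (10^9 bytes).

    Counts raw weight bits only; quantization scale metadata, KV cache,
    and activations are deliberately left out of this estimate.
    """
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: float,
         capacity_gb: float = 128.0) -> bool:
    """True if the raw weights fit within the given memory capacity."""
    return weight_footprint_gb(params_billion, bits_per_weight) <= capacity_gb


for bits in (16, 8, 4):
    size = weight_footprint_gb(200, bits)
    print(f"200B parameters at {bits:>2}-bit: {size:6.1f} GB, fits in 128 GB: {fits(200, bits)}")
```

Under these assumptions a 200B-parameter model needs about 400 GB at 16-bit, 200 GB at 8-bit, and 100 GB at 4-bit, so only the 4-bit variant leaves room for cache and activations within 128GB.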

The DGX Spark is not a gaming machine; it is a dedicated AI appliance for specific professional workflows.

When evaluating the DGX Spark, practitioners typically look at two alternatives: the Apple Mac Studio (M2/M3 Ultra) and custom multi-GPU workstations.

The Mac Studio offers more total unified memory (up to 192GB on the M2 Ultra and up to 512GB on the M3 Ultra), but the DGX Spark wins on software ecosystem and raw AI throughput. The Spark’s Blackwell architecture supports FP4 and FP8 hardware acceleration, which Apple silicon currently lacks. Furthermore, the Spark’s ConnectX-7 200Gbps networking makes it a "cluster-ready" device, whereas the Mac is a standalone workstation. If you rely on the NVIDIA AI stack (CUDA, TensorRT), the Spark is the clear choice.

A dual RTX 6000 Ada setup provides 96GB of VRAM and higher raw TFLOPS, but at roughly three times the price (~$14,000) and significantly higher power draw (600W+). The DGX Spark offers more memory (128GB) in a smaller, more efficient package for roughly a third of the cost. For inference-heavy workloads where 128GB is the "magic number" for model weights, the Spark provides better value.
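The value comparison can be made concrete as cost per gigabyte of model memory. The sketch below uses only the prices and capacities quoted above. The ~$14,000 figure is approximate, so the results are indicative rather than exact.

```python
# Prices and capacities as quoted in the text above.
systems = {
    "DGX Spark": {"price_usd": 4_699, "memory_gb": 128},
    "Dual RTX 6000 Ada": {"price_usd": 14_000, "memory_gb": 96},
}

cost_per_gb = {
    name: spec["price_usd"] / spec["memory_gb"] for name, spec in systems.items()
}

for name, cost in cost_per_gb.items():
    print(f"{name}: ${cost:.2f} per GB")

price_ratio = systems["Dual RTX 6000 Ada"]["price_usd"] / systems["DGX Spark"]["price_usd"]
print(f"Price ratio (dual RTX 6000 Ada / DGX Spark): {price_ratio:.2f}x")
```

On these numbers the Spark comes to about $37 per GB against about $146 per GB for the dual-GPU build. This metric says nothing about compute throughput, where the dual-GPU setup is ahead.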

Choose the DGX Spark if your primary bottleneck is VRAM capacity and physical space. It is currently the most power-efficient way to run 200B parameter models at the edge, offering a "plug-and-play" experience for the modern AI engineer.

| Model | Developer | Parameters | Rating | Speed | Memory |
| --- | --- | --- | --- | --- | --- |
| Qwen3-30B-A3B | Alibaba Cloud (Qwen) | 30B (3B active) | SS | 40.8 tok/s | 5.4 GB |
| | | 8B | AA | 38.8 tok/s | 5.7 GB |
| | | 9B | AA | 36.5 tok/s | 6.0 GB |
| Llama 2 7B Chat | Meta | 7B | AA | 45.9 tok/s | 4.8 GB |
| Gemma 4 E2B IT | Google | 2B | AA | 59.3 tok/s | 3.7 GB |
| Qwen3.6 35B-A3B | Alibaba Cloud | 35B (3B active) | AA | 25.8 tok/s | 8.5 GB |
| Qwen3.5-35B-A3B | Alibaba Cloud (Qwen) | 35B (3B active) | AA | 25.8 tok/s | 8.5 GB |
| Mistral 7B Instruct | Mistral AI | 7B | AA | 34.4 tok/s | 6.4 GB |
| Llama 2 13B Chat | Meta | 13B | AA | 26.0 tok/s | 8.5 GB |
| Gemma 4 E4B IT | Google | 4B | AA | 31.8 tok/s | 6.9 GB |
| Gemma 3 4B IT | Google | 4B | AA | 31.8 tok/s | 6.9 GB |
| Mixtral 8x7B Instruct | Mistral AI | 46.7B (12.9B active) | BB | 19.3 tok/s | 11.4 GB |
| Gemma 4 26B-A4B IT | Google | 26B (4B active) | BB | 20.0 tok/s | 11.0 GB |
| Mistral Large 3 675B | Mistral AI | 675B (41B active) | BB | 3.3 tok/s | 66.3 GB |
| GLM-4.6 | Z.ai | 355B (32B active) | BB | 3.1 tok/s | 70.3 GB |
| DeepSeek-V3 | DeepSeek | 671B (37B active) | BB | 3.7 tok/s | 59.8 GB |
| DeepSeek-R1 | DeepSeek | 671B (37B active) | BB | 3.7 tok/s | 59.8 GB |
| DeepSeek-V3.1 | DeepSeek | 671B (37B active) | BB | 3.7 tok/s | 59.8 GB |
| DeepSeek-V3.2 | DeepSeek | 685B (37B active) | BB | 3.7 tok/s | 59.8 GB |
| Kimi K2 Instruct 0905 | Moonshot AI | 1000B (32B active) | BB | 2.6 tok/s | 84.6 GB |
| Kimi K2 Thinking | Moonshot AI | 1000B (32B active) | BB | 2.6 tok/s | 84.6 GB |
| Kimi K2.5 | Moonshot AI | 1000B (32B active) | BB | 2.6 tok/s | 84.6 GB |
| GLM-5 | Z.ai | 744B (40B active) | BB | 2.5 tok/s | 87.7 GB |
| GLM-5.1 | Z.ai | 744B (40B active) | BB | 2.5 tok/s | 87.7 GB |
| Kimi K2.6 | Moonshot AI | 1000B (32B active) | BB | 2.6 tok/s | 86.2 GB |