Google's seventh-generation Tensor Processing Unit, delivering 4,614 FP8 TFLOPS per chip with 192 GB HBM3e. Scales to 9,216-chip superpods producing 42.5 FP8 EFLOPS. Purpose-built for large-scale AI training and inference. Cloud-only via Google Cloud Platform.

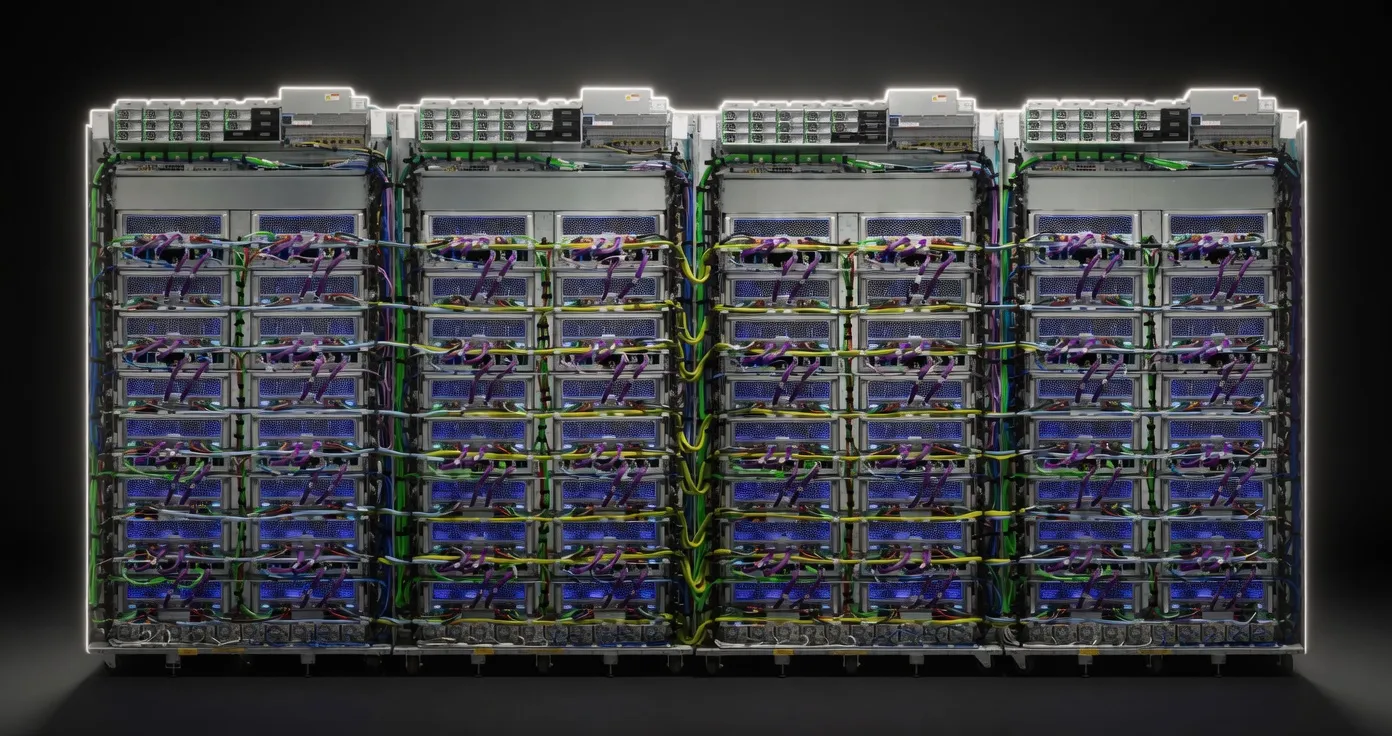

Google TPU v7 (Ironwood) is the seventh-generation Tensor Processing Unit, built primarily for inference at data-center scale. Where earlier TPUs split their focus between training and serving, Ironwood is tuned above all for one job: turning a 70-billion-parameter model into a low-latency API endpoint. You can’t buy the chip, since Google keeps every wafer in-house, but you can rent slices of 4 to 9,216 chips through Google Cloud Platform. In practice, that means 192 GB of HBM3e and 4.6 FP8 PFLOPS per chip, liquid-cooled, wired into a 3-D torus that pushes 9.6 Tbps chip-to-chip. If your bottleneck is “how many concurrent users can hit Llama 3.1 70B before the P99 latency explodes,” Ironwood is the closest thing to a turnkey answer.

The competitive set is thin: NVIDIA H100/H200/B100 for GPU-centric shops, AWS Inferentia2 for shops that put cost above everything else, and AMD MI300X if you want 192 GB on a single accelerator. Ironwood’s pitch is simple: higher memory bandwidth (7.4 TB/s vs. 3.35 TB/s on H100 SXM) and 2× the perf/Watt of the prior-gen Trillium, so Google can offer lower per-token cost while keeping the QoS bar high.

Per-chip numbers that matter: 4,614 FP8 TFLOPS, 192 GB HBM3e, 7.4 TB/s memory bandwidth, and 9.6 Tbps chip-to-chip interconnect.

Pod-scale ceiling: 9,216 chips per superpod, for 42.5 FP8 EFLOPS.
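As a sanity check, the pod-scale figure follows from multiplying out the per-chip numbers quoted in this article. The sketch below does only that arithmetic; the constant and function names are illustrative, and the results are peak figures, not measured throughput.

```python
# Per-chip figures as quoted in this article (peak numbers, not benchmarks).
CHIP_FP8_TFLOPS = 4_614
CHIP_HBM_GB = 192
POD_CHIPS = 9_216


def pod_totals(chips: int = POD_CHIPS) -> dict:
    """Aggregate peak FP8 compute and HBM capacity for a slice of `chips` chips."""
    return {
        "fp8_eflops": chips * CHIP_FP8_TFLOPS / 1e6,  # 1 EFLOPS = 1e6 TFLOPS
        "hbm_pb": chips * CHIP_HBM_GB / 1e6,          # decimal units: 1 PB = 1e6 GB
    }


if __name__ == "__main__":
    totals = pod_totals()
    # prints "42.5 FP8 EFLOPS, 1.77 PB HBM"
    print(f"{totals['fp8_eflops']:.1f} FP8 EFLOPS, {totals['hbm_pb']:.2f} PB HBM")
```

Passing a smaller `chips` value gives the same linear estimate for a partial slice; real scaling efficiency will be lower than this straight multiplication suggests.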

Quantisation impact: FP8 is native; BF16 and INT8 paths are one compiler flag away. Google’s XLA compiler automatically fuses MoE top-k routing into the sparse cores, so Mixtral-8×22B runs at the same per-token energy as a dense 7B model.

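VRAM rule of thumb: 1 byte/parameter in FP8, 0.5 bytes in 4-bit, 0.25 bytes in 2-bit. With 192 GB you get room for roughly 192B parameters in FP8, 384B in 4-bit, or 768B in 2-bit, counting weights only; the KV cache and activations need their own share.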
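The rule of thumb can be turned into a small calculator. This is a minimal sketch that counts weights only; `reserve_fraction` is a hypothetical knob for setting aside memory for the KV cache and activations, and the precision labels are just dictionary keys, not flags of any real toolchain.

```python
HBM_GB = 192  # per-chip HBM capacity quoted in this article

# Bytes per parameter, straight from the rule of thumb.
BYTES_PER_PARAM = {"fp8": 1.0, "int4": 0.5, "int2": 0.25}


def weights_gb(params_billions: float, precision: str) -> float:
    """Weight footprint in GB (decimal) for a model of the given size."""
    return params_billions * BYTES_PER_PARAM[precision]


def max_params_billions(precision: str,
                        hbm_gb: float = HBM_GB,
                        reserve_fraction: float = 0.0) -> float:
    """Largest model (billions of parameters) whose weights fit in HBM.

    reserve_fraction is an assumed share of HBM held back for KV cache
    and activations; 0.0 means weights may use all of it.
    """
    usable_gb = hbm_gb * (1.0 - reserve_fraction)
    return usable_gb / BYTES_PER_PARAM[precision]


if __name__ == "__main__":
    for precision in BYTES_PER_PARAM:
        print(precision, max_params_billions(precision))
    print("70B in 4-bit:", weights_gb(70, "int4"), "GB")
```

With no reserve this gives 192B, 384B, and 768B parameters for FP8, 4-bit, and 2-bit respectively. Setting `reserve_fraction=0.25` shrinks each ceiling by a quarter, which is a more realistic starting point for long-context serving.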

Sweet spot: 4-bit weight-only quantisation on 70B-class models keeps quality within 0.3% of BF16 on MMLU while doubling throughput. Long-context jobs (≥200k tokens) stay in HBM without paging, so latency variance drops to sub-millisecond.
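One way to see why halving the bytes per weight can roughly double throughput is a simple bandwidth-bound model of decoding. This is an assumption for illustration, not a description of how Ironwood schedules work: it supposes each generated token reads every active weight from HBM once, and it ignores KV-cache traffic, batching, and compute limits. The function name is hypothetical; the 7.4 TB/s figure is the one quoted in this article.

```python
HBM_BANDWIDTH_TBPS = 7.4  # TB/s per chip, as quoted in this article


def decode_ceiling_tok_s(active_params_billions: float,
                         bytes_per_param: float,
                         bandwidth_tbps: float = HBM_BANDWIDTH_TBPS) -> float:
    """Upper bound on single-stream decode speed under a bandwidth-bound model.

    Assumes every active weight is streamed from HBM once per token.
    """
    weight_gb = active_params_billions * bytes_per_param  # GB read per token
    return bandwidth_tbps * 1000.0 / weight_gb            # (GB/s) / (GB/token)


if __name__ == "__main__":
    fp8 = decode_ceiling_tok_s(70, 1.0)   # 70B dense model, FP8 weights
    int4 = decode_ceiling_tok_s(70, 0.5)  # same model, 4-bit weights
    print(f"FP8 ceiling:   {fp8:.1f} tok/s")
    print(f"4-bit ceiling: {int4:.1f} tok/s ({int4 / fp8:.1f}x)")
```

Under these assumptions the 4-bit ceiling is exactly twice the FP8 ceiling, because the only thing that changed is the number of bytes moved per token. Measured numbers will sit below either ceiling.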

You choose Ironwood when your daily bill is measured in billions of tokens, not dollars per hour.

Not for you if you need on-prem or edge: Ironwood never leaves Google’s liquid-cooled halls. If you want a 192 GB card in your own box, look at AMD MI300X or the upcoming NVIDIA B100.

NVIDIA H100 80 GB SXM

AWS Inferentia2 (Inf2)

Bottom line: Ironwood is the fastest path to serve the largest open-weight models today, provided you’re willing to live inside Google’s cloud.

| Model | Developer | Parameters | | Speed | Memory |
| --- | --- | --- | --- | --- | --- |
| | | 70B | SS | 52.7 tok/s | 112.8 GB |
| | | 70B | SS | 52.7 tok/s | 112.8 GB |
| Nvidia Nemotron 3 Super | NVIDIA | 120B (12B active) | SS | 57.4 tok/s | 103.5 GB |
| DeepSeek-V4-Flash | DeepSeek | 284B (13B active) | SS | 53.0 tok/s | 112.0 GB |
| Llama 4 Maverick | Meta | 400B (17B active) | SS | 40.6 tok/s | 146.4 GB |
| GLM-5 | Z.ai | 744B (40B active) | SS | 67.7 tok/s | 87.7 GB |
| GLM-5.1 | Z.ai | 744B (40B active) | SS | 67.7 tok/s | 87.7 GB |
| Kimi K2.6 | Moonshot AI | 1000B (32B active) | SS | 68.9 tok/s | 86.2 GB |
| Kimi K2 Instruct 0905 | Moonshot AI | 1000B (32B active) | SS | 70.2 tok/s | 84.6 GB |
| Kimi K2 Thinking | Moonshot AI | 1000B (32B active) | SS | 70.2 tok/s | 84.6 GB |
| Kimi K2.5 | Moonshot AI | 1000B (32B active) | SS | 70.2 tok/s | 84.6 GB |
| Falcon 180B | Technology Innovation Institute | 180B | SS | 55.1 tok/s | 107.8 GB |
| GLM-4.6 | Z.ai | 355B (32B active) | SS | 84.6 tok/s | 70.3 GB |
| Gemma 4 31B IT | Google | 31B | SS | 72.5 tok/s | 82.0 GB |
| Mistral Large 3 675B | Mistral AI | 675B (41B active) | SS | 89.7 tok/s | 66.3 GB |
| Qwen3.6-27B | Alibaba Cloud | 27B | SS | 81.6 tok/s | 72.8 GB |
| Qwen3.5-27B | Alibaba Cloud (Qwen) | 27B | SS | 81.6 tok/s | 72.8 GB |
| DeepSeek-V3 | DeepSeek | 671B (37B active) | SS | 99.3 tok/s | 59.8 GB |
| DeepSeek-R1 | DeepSeek | 671B (37B active) | SS | 99.3 tok/s | 59.8 GB |
| DeepSeek-V3.1 | DeepSeek | 671B (37B active) | SS | 99.3 tok/s | 59.8 GB |
| DeepSeek-V3.2 | DeepSeek | 685B (37B active) | SS | 99.3 tok/s | 59.8 GB |
| GLM-4.7 | Z.ai | 358B (32B active) | SS | 112.9 tok/s | 52.6 GB |
| GLM-4.5 | Z.ai | 355B (32B active) | SS | 114.6 tok/s | 51.8 GB |
| Kimi K2 Instruct | Moonshot AI | 1000B (32B active) | SS | 114.6 tok/s | 51.8 GB |
| Qwen3.5-397B-A17B | Alibaba Cloud (Qwen) | 397B (17B active) | SS | 129.1 tok/s | 46.0 GB |