
Google's cost-efficient AI accelerator optimized for inference and medium-scale training. Available via Google Cloud with 16GB HBM2e per chip in pods up to 256 chips.

Good balance for indie developers running local copilots and chat. 30B+ models are reachable but only with aggressive quantization and short context.

Generated from this product’s spec sheet. Editor reviews refine it over time.

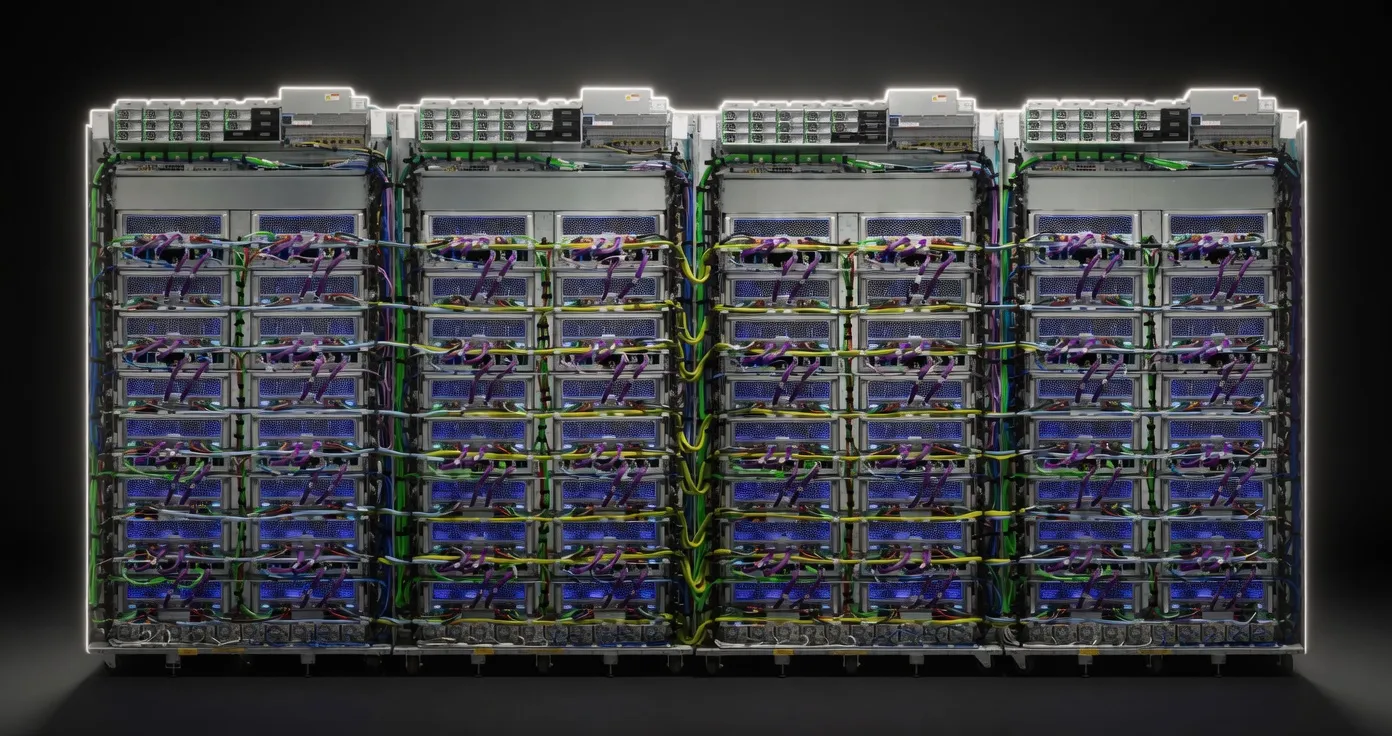

The Google Cloud TPU v5e represents a strategic shift in Google’s AI hardware roadmap, prioritizing cost-efficiency and versatility over the raw, high-end power of the flagship TPU v5p. The "e" stands for "efficiency," but this is still a purpose-built data center accelerator, designed to fill the gap between expensive flagship training clusters and the higher latency of general-purpose GPUs. For developers and engineers building on Google Cloud, the v5e is the primary vehicle for high-throughput inference and fine-tuning of modern transformer architectures.

Unlike consumer hardware or local workstations, the TPU v5e is accessible exclusively via Google Cloud Platform (GCP). It is engineered for the "sweet spot" of the current AI market: serving Large Language Models (LLMs) and diffusion models with high efficiency. While it competes directly with NVIDIA’s L4 and A100 (40GB) offerings in the cloud, its tight integration with the JAX, PyTorch, and TensorFlow ecosystems via the OpenXLA compiler makes it a formidable choice for teams already invested in the Google Cloud ecosystem.

The technical profile of the TPU v5e is defined by its balance of compute density and interconnect speed. Each chip provides 197 TFLOPS of BF16/FP16 performance and 394 TOPS of INT8 performance, making it highly effective for quantized inference workloads.

The 16GB of HBM2e memory per chip is the critical constraint for practitioners to understand. At first glance, 16GB may seem limiting for Large Language Models compared to an H100 or even a consumer RTX 3090/4090. However, the TPU v5e is rarely used in isolation. The Inter-Chip Interconnect (ICI) allows these chips to be networked into pods of up to 256 chips, for up to 4TB (256 × 16GB) of aggregate HBM. That memory is not a single address space: a large model must be sharded across chips, with the ICI carrying the traffic between shards.
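The pod arithmetic above can be sketched in a few lines. This is a rough sizing aid, not Google guidance: it assumes weights are split evenly across chips, and both the function name `min_chips_for_model` and the 20% headroom reserved for activations and KV cache are illustrative assumptions.

```python
import math

HBM_PER_CHIP_GB = 16   # per-chip HBM, from the spec above
MAX_POD_CHIPS = 256    # largest v5e pod, from the spec above


def min_chips_for_model(weights_gb: float, reserve_fraction: float = 0.2) -> int:
    """Smallest chip count whose combined usable HBM holds the weights.

    reserve_fraction is a hypothetical headroom for activations and KV cache.
    """
    usable_per_chip = HBM_PER_CHIP_GB * (1.0 - reserve_fraction)
    chips = max(1, math.ceil(weights_gb / usable_per_chip))
    if chips > MAX_POD_CHIPS:
        raise ValueError("weights do not fit in a single 256-chip pod")
    return chips


# Aggregate HBM across a full pod: 256 chips x 16 GB each.
pod_hbm_gb = HBM_PER_CHIP_GB * MAX_POD_CHIPS
```

Cloud TPU slices are offered in fixed shapes, so in practice the result would be rounded up to the next available slice size.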

With a memory bandwidth of 819 GB/s, the v5e trails the NVIDIA A100 (40GB) in absolute bandwidth but outperforms it in bandwidth-to-memory ratio: about 51 GB/s per GB of HBM, against about 39 for the A100's 1,555 GB/s over 40GB. This is a crucial metric for AI inference performance, as token generation is frequently memory-bandwidth bound. High bandwidth ensures that the weights of a model can be moved into the compute units quickly enough to maintain high tokens per second (TPS).
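To make the bandwidth argument concrete, here is a minimal sketch of two back-of-the-envelope quantities: the bandwidth-to-memory ratio, and an idealized ceiling on single-stream decode speed if every generated token requires reading all weights once. The A100 figure of 1,555 GB/s is an assumed spec not stated on this page, and the model ignores KV-cache reads, batching, and compute limits, so real throughput will be lower.

```python
def bandwidth_per_gb(bandwidth_gb_s: float, memory_gb: float) -> float:
    """Bandwidth-to-memory ratio: full-memory reads possible per second."""
    return bandwidth_gb_s / memory_gb


def decode_tps_upper_bound(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Idealized tokens/s when each token reads every weight exactly once."""
    return bandwidth_gb_s / weights_gb


v5e_ratio = bandwidth_per_gb(819, 16)        # about 51.2
a100_ratio = bandwidth_per_gb(1555, 40)      # about 38.9 (assumed A100 40GB spec)
ceiling = decode_tps_upper_bound(819, 4.8)   # about 170.6 tok/s for 4.8 GB of weights
```

For comparison, the benchmark table on this page lists 137.7 tok/s for a model occupying 4.8 GB, which sits below this idealized ceiling as expected.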

The TPU v5e is built on a custom process node (estimated 5nm-class) and is designed for high energy efficiency. Compared to the previous generation TPU v4, the v5e offers up to 2.5x better performance per dollar for inference. This makes it the preferred hardware for running large-scale agentic workflows where cost-per-million-tokens is the primary KPI.
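Since cost-per-million-tokens is named as the KPI, the conversion from an hourly chip price and a measured throughput is worth writing down. This is a sketch only: `cost_per_million_tokens` is a hypothetical helper, and the price used in the example is a placeholder, not a quoted Google Cloud rate.

```python
def cost_per_million_tokens(
    price_per_chip_hour: float,
    tokens_per_second: float,
    chips: int = 1,
) -> float:
    """Serving cost per 1M generated tokens for a slice of `chips` chips.

    tokens_per_second is the aggregate throughput of the whole slice.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    tokens_per_hour = tokens_per_second * 3600
    return price_per_chip_hour * chips / tokens_per_hour * 1_000_000


# Placeholder inputs: 1.00 currency units per chip-hour, 100 tok/s on one chip.
example_cost = cost_per_million_tokens(1.00, 100.0)
```

Because the cost scales inversely with throughput, batching many concurrent requests onto the same chips is usually the main lever for lowering this number.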

The capability of the Google Cloud TPU v5e for AI inference depends heavily on the deployment configuration (single chip vs. multi-chip pod) and the level of quantization.

A single TPU v5e chip is ideal for "small" LLMs and vision models whose weights fit within its 16GB of HBM.

To run larger models, you must use the TPU v5e's pod architecture: the ICI provides low-latency communication between chips, so a model can be sharded across as many chips as its weights require.

For practitioners, the "sweet spot" on v5e is often INT8 quantization. With 394 TOPS available, INT8 halves the weight footprint relative to BF16, effectively doubling the model size that fits in memory, and significantly increases throughput, typically without meaningful loss in model perplexity. Although each v5e chip has only 16GB of memory, the 819 GB/s bandwidth keeps latency competitive, even at higher bit-depths, with GPUs such as the 24GB NVIDIA L4.
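The memory effect of quantization is simple arithmetic: weight footprint is parameter count times bytes per parameter. The sketch below covers weights only (no KV cache or activations) and uses 1 GB = 10^9 bytes; the helper names and the `int4` entry are illustrative assumptions.

```python
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}
HBM_PER_CHIP_GB = 16  # per-chip HBM, from the spec above


def weights_gb(params_billions: float, dtype: str) -> float:
    """Weight-only footprint in GB for a model of the given size and precision."""
    return params_billions * BYTES_PER_PARAM[dtype]


def fits_on_one_chip(params_billions: float, dtype: str) -> bool:
    """True if the weights alone fit in a single chip's HBM."""
    return weights_gb(params_billions, dtype) <= HBM_PER_CHIP_GB


# A 13B model: 26 GB in BF16 (too large for one chip), 13 GB in INT8 (fits).
bf16_13b = weights_gb(13, "bf16")
int8_13b = weights_gb(13, "int8")
```

Fitting the weights is a necessary condition only; long contexts can add several GB of KV cache on top.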

The TPU v5e is not a "local LLM" solution in the traditional sense of hardware living under a desk. It is a tool for developers who need to scale local development into production environments.

If you are moving from a prototype on a local RTX 4090 to a production-grade inference server, the v5e is a logical step. It provides the reliability of enterprise-grade hardware with the flexibility to scale from a single chip to a 256-chip pod as your traffic grows.

Agentic workflows often require multiple concurrent model calls (e.g., a planner model, a tool-use model, and a summarizer). The cost-efficiency of the v5e makes it one of the best hardware options for running these complex, high-token-volume loops in 2025.

While the TPU v5p is better for pre-training from scratch, the v5e is the workhorse for fine-tuning. Whether you are performing LoRA (Low-Rank Adaptation) on Llama 3 or full-parameter fine-tuning on smaller models, the v5e’s interconnect allows for efficient data parallelism and model parallelism.
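One reason LoRA suits memory-constrained chips is that it trains only two small low-rank factors per adapted weight matrix instead of the matrix itself. The sketch below counts those parameters; the 4096 × 4096 layer shape and rank 8 are illustrative assumptions, not values taken from any specific model card.

```python
def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Parameters in the low-rank factors A (rank x d_in) and B (d_out x rank)."""
    return rank * (d_in + d_out)


def full_params(d_in: int, d_out: int) -> int:
    """Parameters in the original dense weight matrix."""
    return d_in * d_out


# Illustrative layer shape and rank (assumptions for this example).
d_in, d_out, rank = 4096, 4096, 8
lora = lora_trainable_params(d_in, d_out, rank)   # 65,536
dense = full_params(d_in, d_out)                  # 16,777,216
fraction = lora / dense                           # 0.00390625, i.e. about 0.39%
```

Gradients and optimizer state are needed only for the trainable factors, which is where most of the fine-tuning memory saving comes from.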

When evaluating the Google Cloud TPU v5e for AI development, it is most frequently compared to NVIDIA’s data center offerings.

The NVIDIA L4 (24GB GDDR6) is the closest competitor in terms of cloud availability and target workload.

The A100 is a higher-tier chip, but the v5e is often chosen for its superior price-to-performance ratio.

The Google Cloud TPU v5e is best suited to teams that have outgrown local deployment and are ready to migrate to the cloud. It offers the most cost-effective path for serving 7B to 70B parameter models at scale. If your workload can benefit from INT8 quantization and you are comfortable within the XLA ecosystem, the v5e provides a price-performance ratio that is difficult to beat with current-generation NVIDIA cloud instances.

| Model | Developer | Parameters | Tier | Speed | Memory |
| --- | --- | --- | --- | --- | --- |
| Mixtral 8x7B Instruct | Mistral AI | 46.7B (12.9B active) | SS | 58.0 tok/s | 11.4 GB |
| Gemma 4 26B-A4B IT | Google | 26B (4B active) | SS | 59.9 tok/s | 11.0 GB |
| Qwen3.6 35B-A3B | Alibaba | 35B (3B active) | SS | 77.3 tok/s | 8.5 GB |
| Qwen3.5-35B-A3B | Alibaba | 35B (3B active) | SS | 77.3 tok/s | 8.5 GB |
| Llama 2 13B Chat | Meta | 13B | SS | 77.9 tok/s | 8.5 GB |
| Qwen3-30B-A3B | Alibaba | 30B (3B active) | SS | 122.4 tok/s | 5.4 GB |
|  |  | 9B | SS | 109.6 tok/s | 6.0 GB |
|  |  | 8B | SS | 116.4 tok/s | 5.7 GB |
| Gemma 4 E4B IT | Google | 4B | SS | 95.3 tok/s | 6.9 GB |
| Gemma 3 4B IT | Google | 4B | SS | 95.3 tok/s | 6.9 GB |
| Mistral 7B Instruct | Mistral AI | 7B | SS | 103.1 tok/s | 6.4 GB |
| Llama 2 7B Chat | Meta | 7B | AA | 137.7 tok/s | 4.8 GB |
|  |  | 8B | AA | 49.5 tok/s | 13.3 GB |
| Gemma 4 E2B IT | Google | 2B | AA | 177.8 tok/s | 3.7 GB |
| Qwen3.5-9B | Alibaba | 9B | FF | 26.8 tok/s | 24.6 GB |
| Mistral Small 3 24B | Mistral AI | 24B | FF | 16.9 tok/s | 39.0 GB |
| Qwen3.6-27B | Alibaba | 27B | FF | 9.1 tok/s | 72.8 GB |
| Gemma 3 27B IT | Google | 27B | FF | 15.0 tok/s | 43.8 GB |
| Qwen3.5-27B | Alibaba | 27B | FF | 9.1 tok/s | 72.8 GB |
| Gemma 4 31B IT | Google | 31B | FF | 8.0 tok/s | 82.0 GB |
| Qwen3-32B | Alibaba | 32.8B | FF | 12.2 tok/s | 53.9 GB |
| Falcon 40B Instruct | Technology Innovation Institute | 40B | FF | 27.1 tok/s | 24.4 GB |
| LLaMA 65B | Meta | 65B | FF | 16.8 tok/s | 39.3 GB |
| Llama 2 70B Chat | Meta | 70B | FF | 15.2 tok/s | 43.4 GB |
|  |  | 70B | FF | 14.4 tok/s | 45.7 GB |