
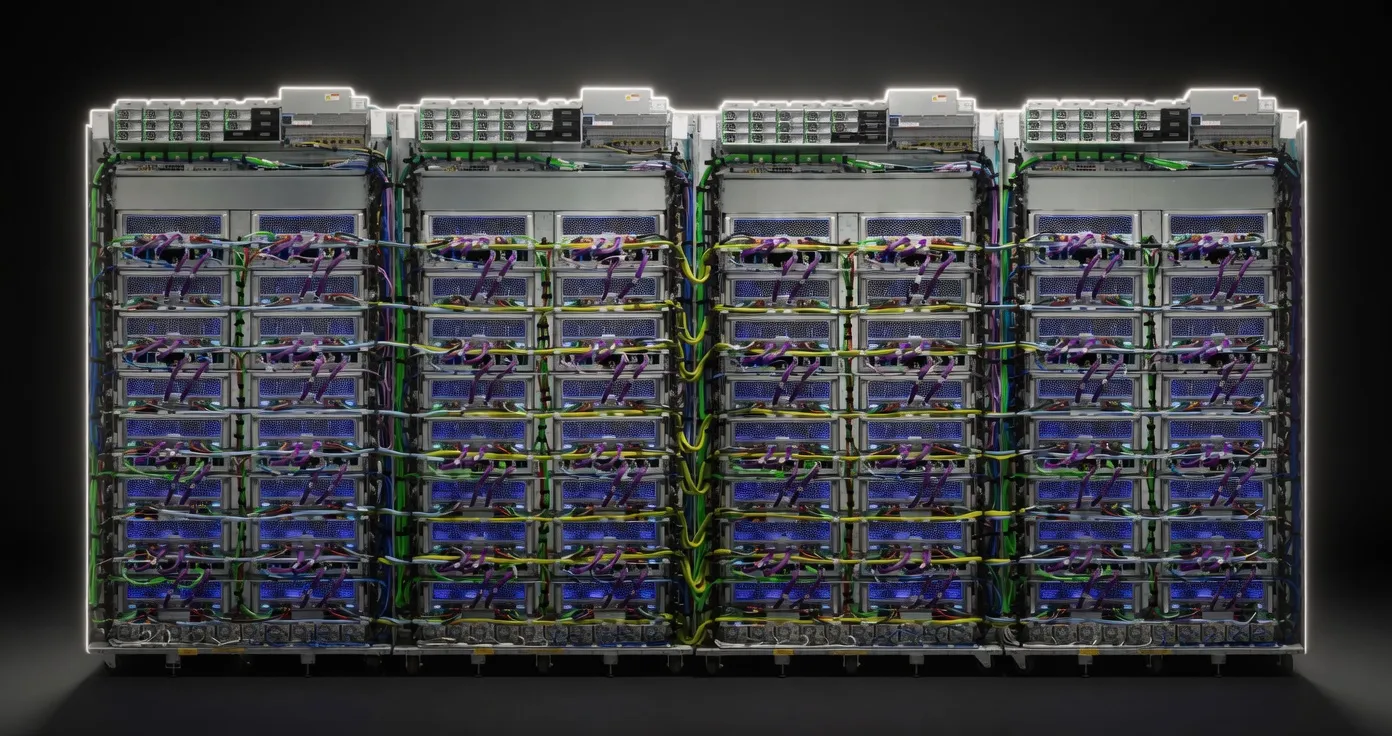

Google's high-performance TPU for large-scale training, with roughly 2x the BF16 FLOPS of v5e and about six times its per-chip memory (95 GB vs 16 GB). Available in pods of up to 8,960 chips for frontier model training.

Sized for production serving of 70B–200B-class models at full or lightly quantized precision. Overkill for a homelab; the right call when the workload pays for itself in token volume.

Generated from this product’s spec sheet. Editor reviews refine it over time.

The Google Cloud TPU v5p was Google's most powerful purpose-built AI accelerator when it was announced in December 2023. Designed specifically for training and serving frontier-scale models, it is a significant step up from the efficiency-focused v5e. Where the v5e was built for cost-effectiveness, the v5p is engineered for raw performance, with roughly twice the peak BF16 compute (459 vs 197 TFLOPS) and about six times the HBM per chip (95 GB vs 16 GB).

For engineers and researchers, the Google Cloud TPU v5p is the primary alternative to the NVIDIA H100/H200 ecosystem. It is not a "local" chip in the desktop sense: you cannot buy one for a workstation. For teams building agentic workflows or deploying large-scale inference servers, however, it can serve as the backbone of private deployments inside Google Cloud Platform (GCP). It is the premier choice for organizations that need to scale beyond single-node constraints into pods of up to 8,960 chips.

The technical profile of the TPU v5p is defined by its large memory ceiling and interconnect throughput. For large language models, the standout metric is 95 GB of HBM2e per chip, which places it between the NVIDIA H100 (80 GB) and H200 (141 GB).

In AI workloads, memory bandwidth is often the main bottleneck for inference, specifically during the auto-regressive decoding phase (token generation), when every new token requires reading the model's active weights from memory. At 2,765 GB/s per chip, the v5p can stream its entire 95 GB of HBM about 29 times per second, which sets the ceiling on single-stream tokens per second. The inter-chip interconnect (ICI), rated at 4,800 Gbps per chip, matters just as much for frontier-scale models served across pod slices, where model parallelism splits a single model across hundreds or thousands of chips.
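The bandwidth argument above reduces to one division: at batch size 1, tokens per second cannot exceed aggregate HBM bandwidth divided by the bytes read per token. The sketch below is a minimal illustration under idealized assumptions (perfect tensor-parallel sharding, KV-cache reads and interconnect time ignored); the function name and the `efficiency` knob are hypothetical, not part of any Google tool. For a mixture-of-experts model, pass the bytes of the active parameters only.

```python
# Peak HBM bandwidth per TPU v5p chip in GB/s, from the spec above.
V5P_HBM_GB_PER_S = 2765


def decode_tokens_per_s_upper_bound(gb_read_per_token: float,
                                    chips: int = 1,
                                    efficiency: float = 1.0) -> float:
    """Bandwidth-bound ceiling on single-sequence decode speed.

    Each new token needs (roughly) every active weight read once, so
    tokens/s <= aggregate bandwidth / bytes read per token.
    `efficiency` is an assumed fraction of peak bandwidth actually
    achieved; 1.0 gives the theoretical ceiling.
    """
    aggregate_bw = V5P_HBM_GB_PER_S * chips * efficiency
    return aggregate_bw / gb_read_per_token


# 70B dense model: BF16 (140 GB) sharded over two chips vs INT8 (70 GB) on one.
print(decode_tokens_per_s_upper_bound(140, chips=2))  # 39.5
print(decode_tokens_per_s_upper_bound(70))            # 39.5
```

Both layouts hit the same ceiling because doubling the bytes per token is offset by doubling the chips; real deployments land below it once KV-cache traffic and communication are counted.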

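The 95 GB of HBM per chip changes the math for model deployment. A 70B-parameter model quantized to INT8 (about 70 GB of weights) fits on a single chip with room left for KV cache, while the same model at BF16 (about 140 GB) needs at least two chips. Beyond that, the v5p's high-speed pod interconnect lets much larger models be sharded across many chips.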
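The fit arithmetic above can be checked with a few lines: weights take roughly parameters × bytes per parameter, and the minimum chip count is that footprint divided by usable HBM per chip, rounded up. This is a minimal sketch; the function names and the `headroom` fraction (HBM reserved for KV cache and activations) are illustrative assumptions, and real deployments also need memory for runtime buffers.

```python
import math

# Per-chip HBM capacity of TPU v5p, in GB (from the spec above).
V5P_HBM_GB = 95

# Approximate bytes per parameter for common serving precisions.
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_gb(params_billion: float, precision: str) -> float:
    """Approximate weight footprint in GB (1 GB = 1e9 bytes)."""
    return params_billion * BYTES_PER_PARAM[precision]


def min_chips_for_weights(params_billion: float, precision: str,
                          headroom: float = 0.0) -> int:
    """Smallest chip count whose combined usable HBM holds the weights.

    `headroom` is the fraction of each chip's HBM kept free for KV cache
    and activations (an assumption; tune it to your workload).
    """
    usable_per_chip = V5P_HBM_GB * (1.0 - headroom)
    return math.ceil(weight_gb(params_billion, precision) / usable_per_chip)


for precision in ("bf16", "int8"):
    gb = weight_gb(70, precision)
    chips = min_chips_for_weights(70, precision)
    print(f"70B @ {precision}: {gb:.0f} GB of weights -> {chips} chip(s)")
```

With `headroom=0.3`, the INT8 case also rises to two chips, because 70 GB exceeds the 66.5 GB left on one chip after reserving 30% for the KV cache.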

Many practitioners choosing hardware for local deployment rely on 4-bit quantization formats such as GGUF or EXL2, but the TPU v5p is designed for higher precision, and most users will run models in BF16 or INT8. Its 918 TOPS of INT8 compute, double its 459 TFLOPS in BF16, allows large throughput gains when serving INT8-quantized weights in production. For long-context tasks (128k+ tokens), the 95 GB of HBM leaves room for larger KV caches, reducing the need for aggressive quantization that can degrade a model's reasoning quality.
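To see why long contexts eat memory, the KV cache for a decoder-only transformer is 2 (keys and values) × layers × KV heads × head dimension × bytes per element × tokens. The sketch below assumes a Llama-3.1-70B-style shape with grouped-query attention (80 layers, 8 KV heads, head dimension 128); substitute your model's config values. The function name is illustrative.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, batch: int = 1,
                   bytes_per_elem: int = 2) -> int:
    """Size of the key/value cache for a decoder-only transformer.

    Each layer stores one key and one value vector per KV head per token,
    hence the leading factor of 2. bytes_per_elem=2 corresponds to BF16.
    """
    return (2 * num_layers * num_kv_heads * head_dim
            * bytes_per_elem * seq_len * batch)


# Assumed Llama-3.1-70B-style shape: 80 layers, 8 KV heads, head_dim 128.
cache = kv_cache_bytes(num_layers=80, num_kv_heads=8, head_dim=128,
                       seq_len=128 * 1024)
print(f"{cache / 2**30:.1f} GiB per 128k-token sequence at BF16")  # 40.0 GiB
```

Under these assumptions, one 128k-token sequence needs about 43 GB of cache at BF16; added to roughly 70 GB of INT8 weights, that already exceeds one chip's 95 GB, so long-context serving of a 70B model typically means a second chip or a quantized KV cache.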

The Google Cloud TPU v5p is not for "local LLM" hobbyists running a single workstation in a home office. It is designed for AI development at the enterprise and research level.

For teams running frontier-scale models across pods, the v5p is a leading option. Agentic workflows often require multiple model calls in parallel (planning, tool use, reflection). Because a v5p pod can be partitioned into "slices", a team can run several high-performance models side by side within one pod, minimizing the latency between agent steps.
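A rough way to budget slices for an agent stack is to size each role's model in chips and check the total against the pod. The sketch below is hypothetical throughout: the role names and footprints are made up, and rounding each slice up to a power of two is a simplifying assumption, not Google's actual list of v5p slice shapes (check the Cloud TPU documentation for the topologies it offers).

```python
import math

V5P_HBM_GB = 95      # per-chip HBM, from the spec above
POD_CHIPS = 8960     # maximum v5p pod size, from the spec above


def chips_for_model(weight_gb: float, headroom: float = 0.3) -> int:
    """Chips for one model, rounded up to a power of two.

    `headroom` reserves part of each chip's HBM for KV cache and
    activations. The power-of-two rounding is an assumption of this
    sketch, not the real set of available slice shapes.
    """
    raw = max(1, math.ceil(weight_gb / (V5P_HBM_GB * (1 - headroom))))
    return 1 << (raw - 1).bit_length()


def plan_slices(models: dict[str, float], pod_chips: int = POD_CHIPS):
    """Give each agent role its own slice and check the pod budget."""
    plan = {role: chips_for_model(gb) for role, gb in models.items()}
    total = sum(plan.values())
    if total > pod_chips:
        raise ValueError(f"plan needs {total} chips; pod has {pod_chips}")
    return plan, total


# Hypothetical agent roles mapped to weight footprints in GB.
AGENT_MODELS = {
    "planner": 140.0,     # e.g. a 70B model at BF16
    "tool_use": 70.0,     # e.g. a 70B model at INT8
    "reflection": 27.0,   # e.g. a 27B model at INT8
}

plan, total = plan_slices(AGENT_MODELS)
print(plan, "total chips:", total)
```

Giving each role its own slice keeps one agent's traffic from contending with another's; the trade-off is that idle slices still cost money, so a shared slice may be cheaper for low-volume roles.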

If your application must serve thousands of users simultaneously, v5p inference scales to that load better than most hardware, because serving can be spread across large slices joined by the pod's interconnect. Optical circuit switching (OCS) lets pod slices be provisioned in different topologies, so you can shape a deployment for either latency (small batches) or throughput (large batches).
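The latency-versus-throughput choice comes down to batching. In a simple roofline model, each decode step reads the weights once regardless of batch size, while compute grows with the batch, so larger batches raise aggregate throughput for free until compute becomes the bottleneck. This is a minimal single-chip sketch: the 27B model is hypothetical, 459 TFLOPS is Google's published BF16 peak per chip, and KV-cache traffic (which grows with batch) is ignored, so it flatters large batches.

```python
V5P_HBM_BW = 2765e9      # bytes/s per chip, from the spec above
V5P_PEAK_BF16 = 459e12   # FLOP/s per chip (published BF16 peak)


def decode_step(batch: int, weight_bytes: float, active_params: float):
    """Roofline estimate of one decode step on a single chip.

    Memory time: weights are read once per step regardless of batch size.
    Compute time: about 2 FLOPs (one multiply-add) per active parameter
    per sequence. KV-cache traffic is ignored.
    Returns (step latency in seconds, aggregate tokens per second).
    """
    mem_time = weight_bytes / V5P_HBM_BW
    compute_time = batch * 2 * active_params / V5P_PEAK_BF16
    step = max(mem_time, compute_time)
    return step, batch / step


# Hypothetical 27B dense model at BF16: 54 GB of weights, fits on one chip.
for batch in (1, 32, 128, 512):
    latency, throughput = decode_step(batch, weight_bytes=54e9, active_params=27e9)
    print(f"batch {batch:>3}: {latency * 1e3:5.1f} ms/step, {throughput:8.0f} tok/s")
```

For BF16 weights the crossover sits near batch 166 (459e12 / 2765e9): below it, step latency stays around 19.5 ms while throughput climbs; above it, each extra sequence adds latency.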

While inference is a strong suit, the "p" in v5p stands for performance, and the chip is aimed primarily at training. If you are fine-tuning Llama 3.1 70B on a proprietary dataset, the v5p offers a smoother scaling path than many traditional GPU clusters, thanks to the XLA (Accelerated Linear Algebra) compiler, which compiles and optimizes the whole computation graph for the TPU.
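To size a fine-tuning run like the one described above, a common rule of thumb is that full training costs about 6 FLOPs per parameter per token (forward plus backward). The sketch below turns that into wall-clock hours; the dataset size, slice size, and 40% model FLOPs utilization (MFU) are assumptions for illustration, and parameter-efficient methods such as LoRA cost less. It also ignores memory: full fine-tuning with an Adam-style optimizer needs far more HBM than the weights alone.

```python
def finetune_hours(params: float, tokens: float, chips: int,
                   peak_flops_per_chip: float = 459e12,
                   mfu: float = 0.4) -> float:
    """Wall-clock estimate for full fine-tuning using the ~6*N*D rule.

    Forward plus backward costs roughly 6 FLOPs per parameter per token.
    `mfu` (model FLOPs utilization) is an assumed fraction of the per-chip
    BF16 peak actually sustained.
    """
    total_flops = 6 * params * tokens
    sustained_flops = chips * peak_flops_per_chip * mfu
    return total_flops / sustained_flops / 3600


# Hypothetical run: 70B parameters, 1B training tokens, 256-chip slice.
print(f"{finetune_hours(70e9, 1e9, chips=256):.1f} h")  # 2.5 h
```

Because the estimate is linear in chip count, doubling the slice halves the time only if MFU holds, which in practice depends on how well the model's sharding keeps the interconnect busy.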

When choosing hardware for AI agents in 2025, the core decision is between the NVIDIA ecosystem and Google's TPU ecosystem.

For practitioners who want to run AI models privately inside a cloud VPC, the v5p is a leading Google TPU choice for 2025. It bridges experimental development and massive-scale production deployment, providing the memory capacity and interconnect speed needed for the next generation of agentic AI.

| Model | Developer | Parameters | Rating | Speed (tok/s) | Memory (GB) |
|---|---|---|---|---|---|
| GLM-4.5 | Z.ai | 355B (32B active) | SS | 42.9 | 51.8 |
| GLM-4.7 | Z.ai | 358B (32B active) | SS | 42.3 | 52.6 |
| Kimi K2 Instruct | Moonshot AI | 1000B (32B active) | SS | 42.9 | 51.8 |
| Qwen3.5-397B-A17B | Alibaba | 397B (17B active) | SS | 48.4 | 46.0 |
| | | 70B | SS | 48.7 | 45.7 |
| Qwen 3.5 Omni | Alibaba | 397B (17B active) | SS | 49.3 | 45.2 |
| Llama 2 70B Chat | Meta | 70B | SS | 51.3 | 43.4 |
| Mixtral 8x22B Instruct | Mistral AI | 141B (39B active) | SS | 51.1 | 43.6 |
| Qwen3-32B | Alibaba | 32.8B | SS | 41.3 | 53.9 |
| Gemma 3 27B IT | Google | 27B | SS | 50.8 | 43.8 |
| DeepSeek-V3 | DeepSeek | 671B (37B active) | SS | 37.2 | 59.8 |
| DeepSeek-R1 | DeepSeek | 671B (37B active) | SS | 37.2 | 59.8 |
| DeepSeek-V3.1 | DeepSeek | 671B (37B active) | SS | 37.2 | 59.8 |
| DeepSeek-V3.2 | DeepSeek | 685B (37B active) | SS | 37.2 | 59.8 |
| Qwen3-235B-A22B | Alibaba | 235B (22B active) | SS | 61.3 | 36.3 |
| Mistral Small 3 24B | Mistral AI | 24B | SS | 57.1 | 39.0 |
| LLaMA 65B | Meta | 65B | SS | 56.7 | 39.3 |
| Qwen3.5-122B-A10B | Alibaba | 122B (10B active) | SS | 81.6 | 27.3 |
| Mistral Large 3 675B | Mistral AI | 675B (41B active) | SS | 33.6 | 66.3 |
| minimax-m2.5 | MiniMax | 230B (10B active) | SS | 98.1 | 22.7 |
| GLM-4.6 | Z.ai | 355B (32B active) | SS | 31.7 | 70.3 |
| Mixtral 8x7B Instruct | Mistral AI | 46.7B (12.9B active) | SS | 195.9 | 11.4 |
| Qwen3.6-27B | Alibaba | 27B | SS | 30.6 | 72.8 |
| Qwen3.5-27B | Alibaba | 27B | SS | 30.6 | 72.8 |
| Falcon 40B Instruct | Technology Innovation Institute | 40B | SS | 91.4 | 24.4 |