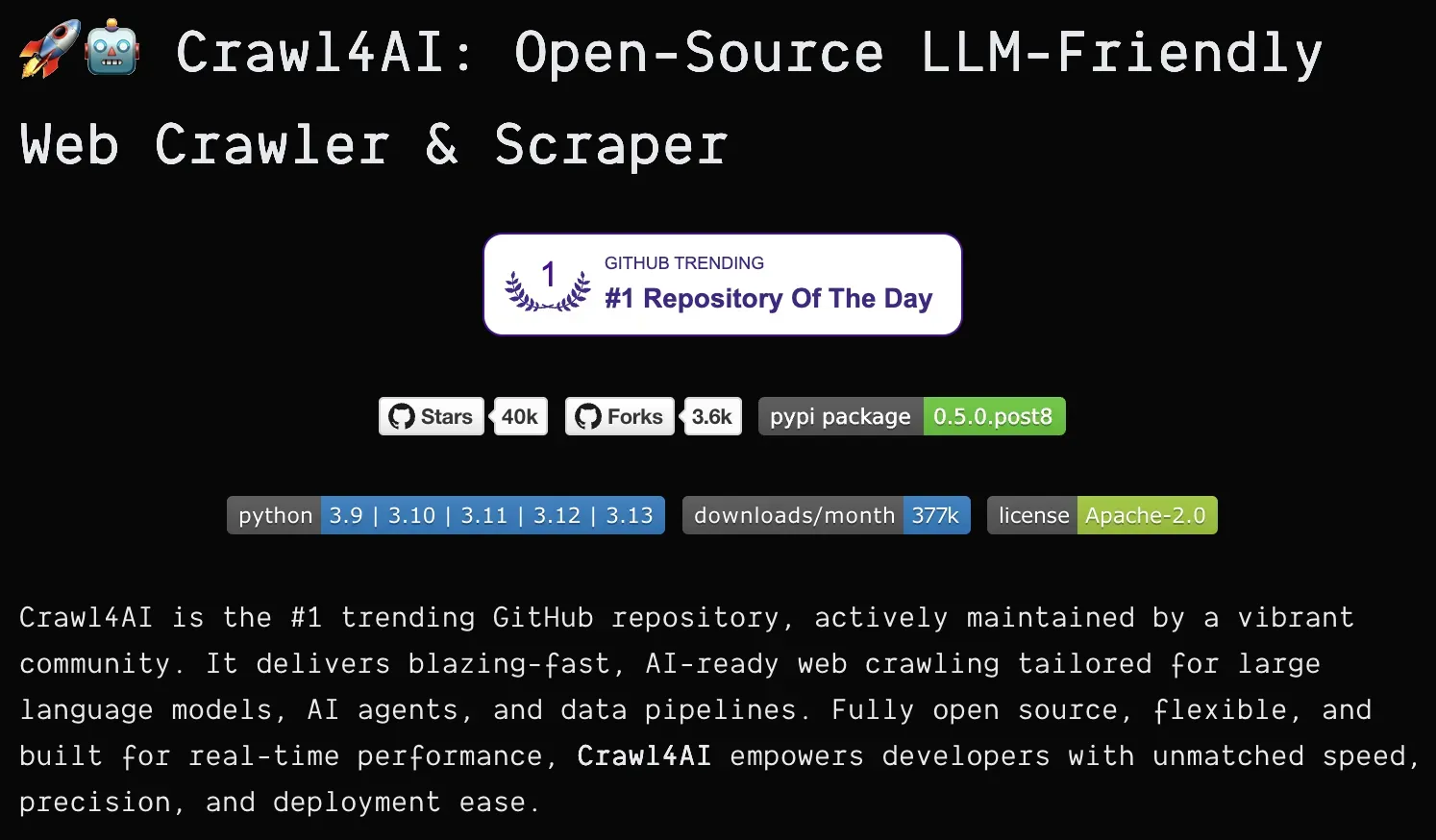

Open-source LLM-friendly web crawler and scraper for extracting structured data from websites with AI-optimized outputs.

Docker

On Premise, Cloud

Intermediate

Crawl4AI is a Python library for web data extraction built to work with large language models (LLMs). It converts web content into structured formats suited to AI processing. The tool respects website crawling rules and offers crawling strategies that range from single-page extraction to graph-based traversal of an entire site. It is an open-source, community-driven project with over 40,000 GitHub stars and a focus on ethical web data acquisition.

Formats extracted data specifically for optimal processing by large language models.

Uses various algorithms including graph search to efficiently navigate website structures.

Automatically respects website crawling rules to ensure ethical data collection.

Pulls specific elements from web pages based on custom schemas or natural language queries.

Supports various data export formats for integration with different systems.

Follows standard Python versioning with clear development stages from alpha to stable releases.
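To make the crawling features above more concrete, here is a minimal sketch of a breadth-first graph search that skips pages disallowed by a site's crawl rules. It uses only the Python standard library and is not Crawl4AI's own implementation. The in-memory link map `SITE_LINKS`, the `ROBOTS_TXT` text, the `demo-bot` user agent, and the helper names are all hypothetical, and it assumes that "crawling rules" refers to `robots.txt`.

```python
from collections import deque
from urllib.parse import urljoin
from urllib.robotparser import RobotFileParser

BASE_URL = "https://example.com"

# Hypothetical in-memory site: each path maps to the paths it links to.
SITE_LINKS = {
    "/": ["/docs", "/blog", "/admin"],
    "/docs": ["/docs/install", "/"],
    "/docs/install": [],
    "/blog": ["/blog/post-1", "/docs"],
    "/blog/post-1": [],
    "/admin": ["/admin/users"],
    "/admin/users": [],
}

# Hypothetical robots.txt content for the same site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin
"""


def build_robots(text):
    """Parse robots.txt text that is already in memory (no network access)."""
    parser = RobotFileParser()
    parser.parse(text.splitlines())
    return parser


def bfs_crawl(start, links, robots, user_agent="demo-bot", max_pages=50):
    """Visit pages breadth-first, skipping any path the rules disallow."""
    visited = []
    seen = {start}
    queue = deque([start])
    while queue and len(visited) < max_pages:
        path = queue.popleft()
        # A disallowed page is neither recorded nor expanded.
        if not robots.can_fetch(user_agent, urljoin(BASE_URL, path)):
            continue
        visited.append(path)
        for nxt in links.get(path, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return visited


crawl_order = bfs_crawl("/", SITE_LINKS, build_robots(ROBOTS_TXT))
print(crawl_order)
```

In this sketch the `/admin` page is dropped when it is dequeued, so `/admin/users` is never discovered. A real crawler would fetch each page and parse its links instead of reading them from a dictionary.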

Gather structured web data to train or fine-tune large language models with real-world information.

Build news aggregators, price comparison tools, or research platforms that compile information from multiple sources.

Extract competitive intelligence, pricing data, or product information from industry websites.

Collect and analyze online content for scientific studies and publications.

Gather data about websites for search engine optimization purposes.

Install using pip: `pip install -U crawl4ai`

Import the library in your Python code

Configure crawling parameters and target URLs

Define extraction schema if needed

Execute crawl operations

Process and use the extracted data
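The steps above could look roughly like the following sketch. It is modeled on the project's published quickstart, but `AsyncWebCrawler`, `arun`, `result.success`, `result.error_message`, and `result.markdown` should be treated as assumptions to check against the installed version. The `fetch_markdown` and `main` helpers and the target URL are hypothetical, and the optional extraction-schema step is left out.

```python
import asyncio


async def fetch_markdown(url: str) -> str:
    """Crawl a single page and return its content as Markdown text."""
    # Imported lazily so this module still loads where crawl4ai is absent.
    # Class and attribute names below are assumed from the project quickstart.
    from crawl4ai import AsyncWebCrawler

    async with AsyncWebCrawler() as crawler:
        result = await crawler.arun(url=url)
        if not result.success:
            raise RuntimeError(f"Crawl failed for {url}: {result.error_message}")
        return str(result.markdown)


def main() -> None:
    # Placeholder target; replace it with a page you are allowed to crawl.
    markdown = asyncio.run(fetch_markdown("https://example.com"))
    # Process the extracted data; here we only preview the first 500 characters.
    print(markdown[:500])
```

Calling `main()` needs network access and a working Crawl4AI installation, which may involve an additional browser setup step described in the project's documentation.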

AI-powered web scraping tool using natural language queries instead of XPath/DOM selectors for reliable data extraction from any website.

Apify is a web scraping platform that extracts data from websites and automates web tasks using ready-made or custom scrapers.

A Node.js and Python library for reliable web scraping and browser automation supporting HTTP requests, Puppeteer, and Playwright with built-in scaling.

Framework for orchestrating collaborative AI agents that work together to solve complex tasks through role-based specialization and teamwork.