
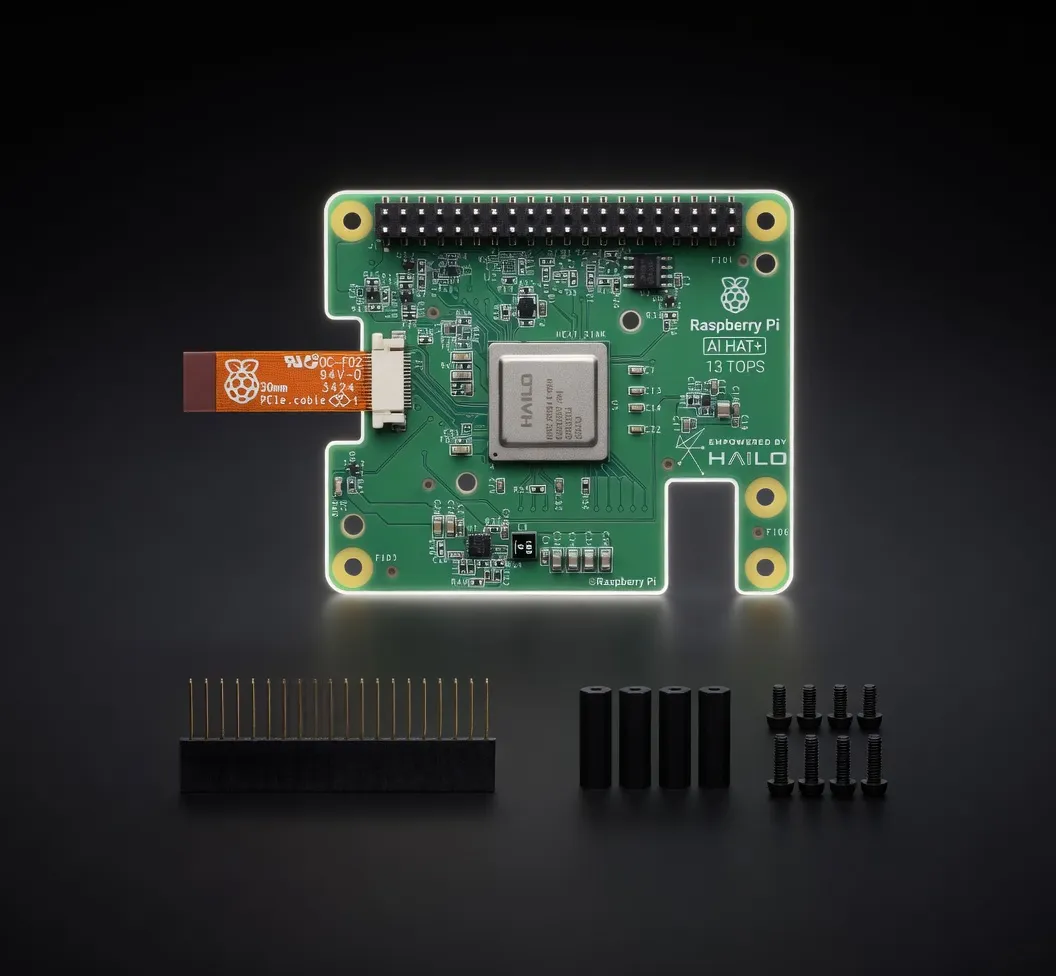

Entry-level 13 TOPS edge AI accelerator in M.2 form factor. Compatible with Raspberry Pi 5 via AI HAT+ for affordable neural network inference at the edge.

The Hailo-8L M.2 AI Accelerator Module is an entry-level, high-efficiency neural processing unit (NPU) designed for edge inference. Developed by Hailo, the module brings the company's proprietary Structure-Defined Dataflow Architecture to a sub-$50 price point. While the flagship Hailo-8 delivers 26 TOPS, the Hailo-8L is a streamlined alternative providing 13 TOPS of INT8 performance. It is aimed at developers who need to move AI workloads off a host CPU without the thermal or financial overhead of a discrete GPU.

In the current market, the Hailo-8L sits as a direct competitor to the Coral M.2 Accelerator (TPU) and the integrated NPUs found in modern ARM SoCs. It is the primary hardware component behind the Raspberry Pi 5 AI Kit, making it the de facto standard for affordable, low-latency edge AI development. For engineers building local AI agents or autonomous workflows, the Hailo-8L serves as a dedicated inference engine that maintains high throughput while consuming less than 2W of power.

The Hailo-8L's inference performance is defined by how efficiently it executes neural network graphs. Traditional GPUs rely on large register files and high memory bandwidth to move data. Hailo's dataflow architecture takes a different approach: it minimizes data movement by mapping the layers of a neural network directly onto the chip's physical resources.
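The following deliberately simplified Python model makes the data-movement argument concrete. It rests on a hypothetical assumption: a layer-by-layer accelerator writes every intermediate activation to external memory and then reads it back. A dataflow design, by contrast, keeps intermediates on-chip, so only the network input and the final output cross the chip boundary. The model ignores weights, tiling, and caching. The activation sizes are made-up illustrative numbers, not Hailo-8L measurements.

```python
from typing import Sequence


def offchip_bytes_layer_by_layer(activation_sizes: Sequence[int]) -> int:
    """Toy model: every layer reads its input from, and writes its output to, external memory.

    activation_sizes[0] is the network input; activation_sizes[i] is the output of layer i.
    """
    total = 0
    for i in range(1, len(activation_sizes)):
        total += activation_sizes[i - 1]  # read this layer's input
        total += activation_sizes[i]      # write this layer's output
    return total


def offchip_bytes_dataflow(activation_sizes: Sequence[int]) -> int:
    """Toy model: layers are mapped onto on-chip resources, so intermediates never leave the chip."""
    return activation_sizes[0] + activation_sizes[-1]


if __name__ == "__main__":
    # Hypothetical INT8 activation sizes in bytes: input image, two feature maps, final output.
    sizes = [640 * 640 * 3, 320 * 320 * 16, 160 * 160 * 32, 1000]
    print("layer-by-layer:", offchip_bytes_layer_by_layer(sizes))
    print("dataflow:      ", offchip_bytes_dataflow(sizes))
```

With these made-up sizes, the layer-by-layer model moves 6,145,000 bytes off-chip per inference, while the dataflow model moves 1,229,800. The exact figures are not meaningful. The point is that the gap grows with every intermediate layer.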

When evaluating the Hailo-8L for large language models, note that it is not a device for 70B-parameter models. It is an INT8-optimized chip without the large memory pool such models require. While it lacks the raw compute of an NVIDIA Jetson Orin, it significantly outperforms the Google Coral TPU in modern vision transformer (ViT) workloads and complex detection pipelines.
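A quick back-of-the-envelope calculation shows why 70B-parameter models are out of reach. Weight storage alone takes parameters × bits per weight / 8 bytes. The sketch below is plain arithmetic, not a Hailo tool. It ignores activations, KV cache, and runtime overhead, all of which would only add to the total.

```python
def weight_storage_gb(num_params: int, bits_per_weight: int) -> float:
    """Bytes needed for the weights alone, in decimal gigabytes (1 GB = 10**9 bytes)."""
    total_bytes = num_params * bits_per_weight // 8
    return total_bytes / 10**9


if __name__ == "__main__":
    for bits in (16, 8, 4):
        print(f"70B params @ {bits}-bit: {weight_storage_gb(70_000_000_000, bits):.0f} GB")
```

This prints 140 GB, 70 GB, and 35 GB respectively. Even aggressive 4-bit quantization leaves tens of gigabytes of weights, which is far beyond what a small edge accelerator of this class is built to hold.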

The Hailo-8L is engineered for lightweight edge inference models. It excels at computer vision, real-time audio processing, and small-scale language tasks. Because it runs models primarily in INT8, users must first compile them with the Hailo Dataflow Compiler, which quantizes and converts models originating in TensorFlow, PyTorch (typically via ONNX export), or ONNX.
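Symmetric per-tensor quantization illustrates the core idea behind INT8 deployment. Each float x maps to q = round(x / s), with scale s = max|x| / 127. This generic textbook scheme was chosen for illustration only. The Hailo Dataflow Compiler uses its own calibration-based optimization flow, and nothing below calls or reproduces its API.

```python
from typing import List, Sequence, Tuple


def quantize_int8(values: Sequence[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor INT8 quantization: q = clamp(round(x / scale), -127, 127)."""
    max_abs = max(abs(v) for v in values)
    # Map the largest magnitude to 127; fall back to 1.0 for an all-zero tensor.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize_int8(q: Sequence[int], scale: float) -> List[float]:
    """Recover approximate float values from INT8 codes."""
    return [qi * scale for qi in q]


if __name__ == "__main__":
    weights = [-0.82, -0.10, 0.0, 0.33, 0.91]
    q, scale = quantize_int8(weights)
    restored = dequantize_int8(q, scale)
    for w, qi, r in zip(weights, q, restored):
        print(f"{w:+.3f} -> {qi:+4d} -> {r:+.3f}")
```

Each restored value differs from the original by at most half a quantization step (scale / 2). That rounding error is the accuracy cost a compiler's calibration step tries to keep small.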

The Hailo-8L is one of the best AI chips for local deployment where cost per unit and performance per watt are the primary constraints.
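Cost per unit and performance per watt can both be expressed as numbers using only figures quoted on this page: 13 TOPS (INT8), a power draw of about 1.5 W (under 2 W), and a sub-$50 price. The sketch below treats the $50 ceiling as the price and uses nominal peak TOPS. The results are therefore upper-bound ratios, not measured throughput.

```python
def tops_per_watt(tops: float, watts: float) -> float:
    """Peak efficiency: tera-operations per second delivered per watt consumed."""
    return tops / watts


def dollars_per_tops(price_usd: float, tops: float) -> float:
    """Unit cost normalized by peak compute."""
    return price_usd / tops


if __name__ == "__main__":
    HAILO_8L_TOPS = 13.0  # INT8 peak, as quoted above
    for watts in (1.5, 2.0):
        print(f"{watts} W -> {tops_per_watt(HAILO_8L_TOPS, watts):.2f} TOPS/W")
    print(f"$50 ceiling -> ${dollars_per_tops(50.0, HAILO_8L_TOPS):.2f} per TOPS")
```

This works out to about 8.67 TOPS/W at 1.5 W, 6.50 TOPS/W at the 2 W bound, and roughly $3.85 per TOPS at the $50 ceiling. The same two functions can be applied to any competing module's datasheet figures for a like-for-like comparison.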

With the launch of the Raspberry Pi 5 AI HAT+, the Hailo-8L has become the standard choice for hobbyists building local chatbots or home automation systems. It lets the Pi handle complex vision tasks without driving its main Broadcom SoC into thermal throttling.

For those building local AI agents in 2025, the Hailo-8L acts as a "sensory processor." In an agentic workflow, the Hailo-8L handles the "eyes and ears" (vision and wake-word detection), while a more powerful local server or cloud instance handles the heavy reasoning (the LLM). This split-inference model is one of the most efficient ways to build autonomous workflows, because the expensive reasoning model runs only when the sensory layer reports something worth acting on.
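The split-inference pattern can be sketched as a gate between the two tiers. In this hypothetical Python outline, `Detection` stands in for whatever the detector running on the Hailo-8L emits, and `reason` stands in for a call to the heavier LLM backend. Neither is a real Hailo or LLM API. The label set and confidence threshold are arbitrary assumptions.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Optional


@dataclass(frozen=True)
class Detection:
    """Hypothetical output record from the on-device (Hailo-8L) perception model."""
    label: str
    confidence: float


def summarize(detections: Iterable[Detection], watch_labels: frozenset, min_conf: float) -> List[str]:
    """Keep only confident detections of interest, rendered as compact text for the LLM."""
    return [
        f"{d.label} ({d.confidence:.2f})"
        for d in detections
        if d.label in watch_labels and d.confidence >= min_conf
    ]


def agent_step(
    detections: Iterable[Detection],
    reason: Callable[[str], str],
    watch_labels: frozenset = frozenset({"person", "package"}),
    min_conf: float = 0.6,
) -> Optional[str]:
    """One perception-to-reasoning cycle; returns None when nothing is escalated."""
    events = summarize(detections, watch_labels, min_conf)
    if not events:
        return None  # the heavy reasoning model is never invoked
    prompt = "Camera observed: " + ", ".join(events) + ". What should the agent do?"
    return reason(prompt)


if __name__ == "__main__":
    calls: List[str] = []

    def fake_llm(prompt: str) -> str:
        # Stand-in for a local server or cloud LLM; records prompts instead of calling out.
        calls.append(prompt)
        return "notify owner"

    quiet_frame = [Detection("cat", 0.9), Detection("person", 0.3)]
    busy_frame = [Detection("person", 0.87), Detection("package", 0.72)]
    print(agent_step(quiet_frame, fake_llm))
    print(agent_step(busy_frame, fake_llm))
    print(f"LLM calls: {len(calls)}")
```

Only the second frame reaches the reasoning tier, so the LLM is called once. The low-power accelerator processes every frame, while the expensive model sees only a short text summary of the frames that matter.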

Engineers deploying lightweight edge inference models in industrial settings use the Hailo-8L for predictive maintenance, defect detection on assembly lines, and smart-city traffic monitoring. Its low 1.5W TDP allows fanless enclosures in harsh environments.

When choosing the best edge device for autonomous workflows, the Hailo-8L is often compared against the Google Coral M.2 and the NVIDIA Jetson Orin Nano.

The Hailo-8L M.2 AI Accelerator Module is a compelling choice for practitioners who need a reliable, low-power, and affordable entry point into high-performance edge inference. It bridges the gap between basic CPU-based inference and expensive, power-hungry GPU setups.
