
26 TOPS edge AI accelerator in an M.2 form factor with fully integrated on-chip memory — no external DRAM required. Industry-leading power efficiency at just 2.5W typical consumption.

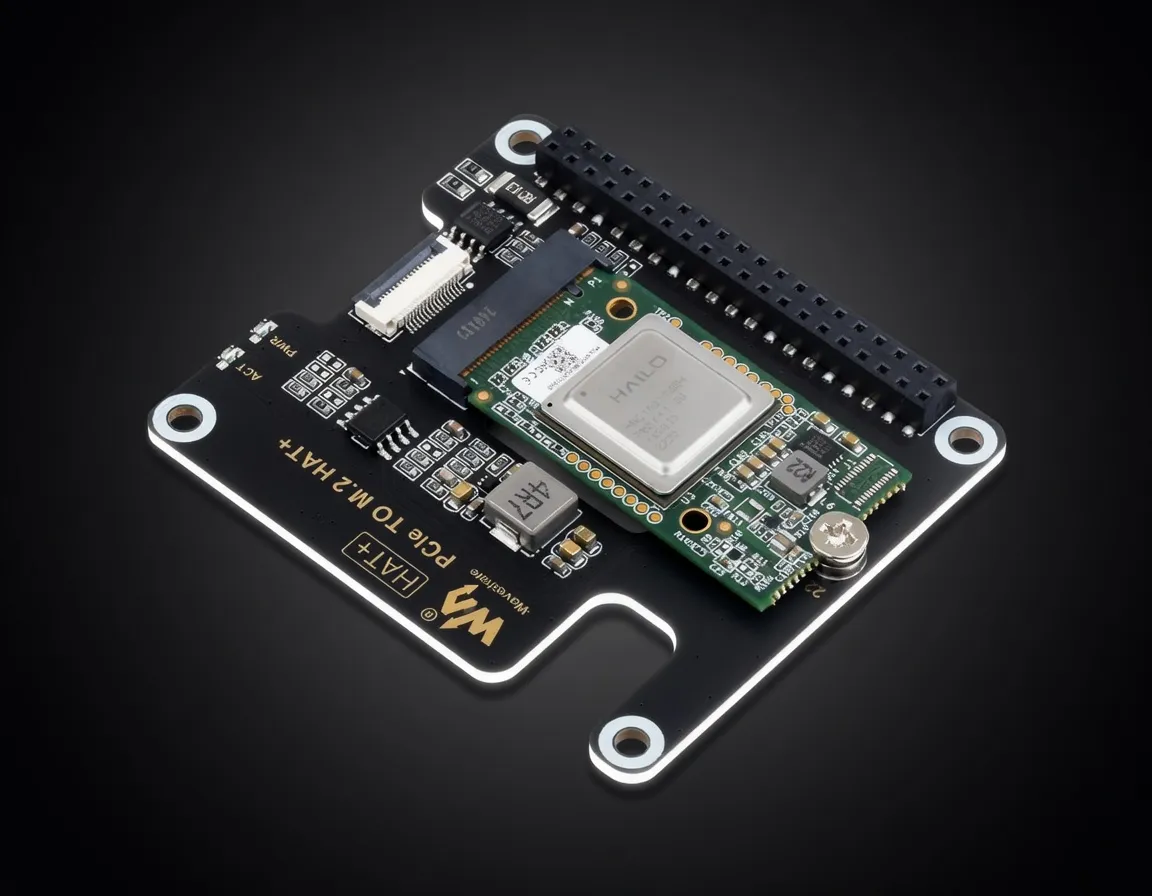

The Hailo-8 M.2 AI Accelerator Module is a high-performance silicon solution designed specifically for edge AI inference. Developed by Hailo, this module brings 26 TOPS (Tera Operations Per Second) of INT8 compute to a standard M.2 form factor, allowing developers to upgrade existing industrial PCs, gateways, and embedded systems into powerful AI edge nodes. Unlike general-purpose GPUs or NPUs that rely on traditional von Neumann architectures, the Hailo-8 utilizes a proprietary Dataflow Architecture that minimizes data movement, significantly reducing latency and power consumption.

Positioned as a professional-grade edge device, the Hailo-8 competes directly with the NVIDIA Jetson Orin Nano and the Google Coral TPU. While the Coral is limited to 4 TOPS and the Jetson requires a proprietary carrier board, the Hailo-8 provides a massive performance jump in a plug-and-play M.2 format. For practitioners building local AI agents and autonomous workflows, it represents one of the most cost-effective ways to achieve high-throughput computer vision and sensor fusion without the thermal or power overhead of a discrete GPU.

The Hailo-8 M.2 module is defined by its efficiency. Traditional hardware often bottlenecks at the memory interface, but the Hailo-8 integrates its memory on-chip. Because there is no external DRAM, the module sidesteps the "memory wall" that plagues edge inference, which helps keep the 26 TOPS of compute busy instead of waiting on data transfers.
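
To make the "memory wall" concrete, here is a minimal roofline-style sketch. Attainable throughput is the smaller of peak compute and what memory bandwidth can feed at a given arithmetic intensity (operations per byte moved). Only the 26 TOPS peak comes from this page; the bandwidth and intensity values are hypothetical illustration inputs, not Hailo-8 specifications.

```python
def attainable_tops(peak_tops: float, bandwidth_gb_s: float, ops_per_byte: float) -> float:
    """Roofline estimate of achievable throughput in TOPS.

    bandwidth_gb_s * ops_per_byte gives giga-operations per second;
    dividing by 1000 converts that to tera-operations per second.
    """
    memory_bound_tops = bandwidth_gb_s * ops_per_byte / 1000.0
    return min(peak_tops, memory_bound_tops)


# Hypothetical inputs: 10 GB/s of memory bandwidth feeding a 26 TOPS engine.
print(attainable_tops(26.0, 10.0, 50.0))    # 0.5  (low data reuse: memory-bound)
print(attainable_tops(26.0, 10.0, 5000.0))  # 26.0 (high data reuse: compute-bound)
```

In the first call the workload reaches only 0.5 TOPS no matter how large the compute peak is. Keeping data on-chip is one way to raise the effective bandwidth term so that the compute ceiling, rather than memory, becomes the limit.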

When evaluating the Hailo-8's inference performance, note that this is an INT8-optimized device. It does not offer native FP16 or FP32 compute, so models must be quantized before deployment. The Hailo AI Software Suite includes a compiler and quantizer that convert trained models into highly optimized INT8 streams, typically with small accuracy loss. Models are generally supplied as ONNX or TensorFlow Lite files, so PyTorch models are usually exported to ONNX first.
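
For readers new to INT8 deployment, the following is a minimal sketch of symmetric per-tensor quantization, a common textbook scheme. It is not the Hailo quantizer's actual algorithm, which is not reproduced here, and the function names are hypothetical.

```python
def quantize_int8(values: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization: map [-max|x|, max|x|] onto [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0  # avoid dividing by zero for all-zero tensors
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize_int8(quantized: list[int], scale: float) -> list[float]:
    """Map INT8 codes back to approximate real values."""
    return [q * scale for q in quantized]


weights = [0.5, -1.0, 0.25, 0.0]
codes, scale = quantize_int8(weights)
restored = dequantize_int8(codes, scale)
# Rounding to the nearest code bounds the per-value error by half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, max_error <= scale / 2 + 1e-12)
```

Production quantizers refine this basic idea, for example by choosing scales from calibration data rather than a single maximum, which is one reason accuracy loss is usually small.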

The Hailo-8 is designed primarily for computer vision and structured data processing. For edge inference models such as YOLOv8 and ResNet, it is a top-tier performer.

The module excels at high-resolution, high-frame-rate processing. For example, it can run YOLOv8s at over 600 FPS or ResNet-50 at approximately 1,000 FPS. This makes it a strong fit for autonomous workflows involving object detection, semantic segmentation, and pose estimation.
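
A quick way to translate aggregate FPS into deployment terms is to divide by the per-camera frame rate. This sketch assumes the quoted figures are sustained throughput, that cameras run at 30 FPS (a hypothetical choice), and that host-side decoding, pre/post-processing, and PCIe transfers are not the bottleneck. Published FPS numbers often depend on batch size and input resolution, so treat the result as an upper bound.

```python
def max_streams(model_fps: float, camera_fps: float) -> int:
    """Upper bound on camera streams one accelerator can serve if model throughput is the only limit."""
    if camera_fps <= 0:
        raise ValueError("camera_fps must be positive")
    return int(model_fps // camera_fps)


def amortized_ms_per_frame(model_fps: float) -> float:
    """Average time budget per frame at a given throughput (not end-to-end latency)."""
    return 1000.0 / model_fps


for name, fps in [("YOLOv8s", 600), ("ResNet-50", 1000)]:
    print(name, max_streams(fps, 30), round(amortized_ms_per_frame(fps), 2))
# YOLOv8s 20 1.67
# ResNet-50 33 1.0
```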

A common question for practitioners is how much memory the Hailo-8 offers for large language models. Because the device uses on-chip memory rather than expandable VRAM, it is not intended for running 70B-parameter models or even 12B+ LLMs locally. At most, it may handle heavily quantized, small language models used for edge intent recognition or simple agentic commands.
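
The memory argument can be checked with simple arithmetic: weight storage is roughly parameter count times bits per weight divided by 8. This sketch ignores activations and the KV cache, so real requirements are higher. It does not assume any particular on-chip capacity for the Hailo-8, since none is stated here.

```python
def weight_footprint_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes); excludes activations and KV cache.

    (params_billion * 1e9 params) * (bits / 8 bytes) / 1e9 simplifies to params_billion * bits / 8.
    """
    return params_billion * bits_per_weight / 8


for params, bits in [(70, 8), (70, 4), (12, 8), (12, 4)]:
    print(f"{params}B @ {bits}-bit: {weight_footprint_gb(params, bits):.1f} GB")
# 70B @ 8-bit: 70.0 GB
# 70B @ 4-bit: 35.0 GB
# 12B @ 8-bit: 12.0 GB
# 12B @ 4-bit: 6.0 GB
```

Even an aggressively quantized 12B model needs gigabytes just for its weights, far beyond what an on-chip memory design of this kind is built to hold.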

Practitioners looking for LLM tokens-per-second figures should manage expectations: this is a vision-first accelerator. If your workflow requires a local LLM for complex reasoning, run that model on a host CPU or a secondary NPU while the Hailo-8 handles the vision and sensor data processing.
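
This division of labor can be sketched as a two-stage loop: the accelerator runs the vision model on every frame, and the host-side language model is invoked only when detections arrive. Both `detect_on_accelerator` and `reason_on_host` are hypothetical stand-ins; a real system would call the vendor runtime and a host LLM runtime in their place.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    label: str
    confidence: float


def detect_on_accelerator(frame_id: int) -> list[Detection]:
    """Hypothetical stand-in for a vision model on the Hailo-8; fakes a detection on even frames."""
    return [Detection("person", 0.91)] if frame_id % 2 == 0 else []


def reason_on_host(detections: list[Detection]) -> str:
    """Hypothetical stand-in for a language model on the host CPU or a secondary NPU."""
    labels = ", ".join(d.label for d in detections)
    return f"alert: saw {labels}"


def run_pipeline(frame_ids, min_confidence: float = 0.5) -> list[str]:
    actions = []
    for frame_id in frame_ids:
        # Vision runs on every frame on the accelerator.
        detections = [d for d in detect_on_accelerator(frame_id) if d.confidence >= min_confidence]
        # The slower host-side model is called only when there is something to reason about.
        if detections:
            actions.append(reason_on_host(detections))
    return actions


print(run_pipeline(range(4)))  # ['alert: saw person', 'alert: saw person']
```

Gating the language model on vision events keeps the expensive reasoning step off the per-frame path.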

The Hailo-8 is built for production environments where reliability and performance per watt are the primary KPIs.

Edge Deployment Scenarios:

For physical robotics and smart city infrastructure, this is among the strongest hardware options for local AI agents in 2025. If you are deploying 500 edge nodes to monitor traffic or run quality control on a manufacturing line, the $99 MSRP and 2.5W power draw make the Hailo-8 very hard to beat.
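
The fleet-scale numbers in this scenario are easy to estimate. This sketch uses the $99 MSRP and 2.5W typical draw quoted on this page and assumes continuous 24/7 operation. It counts only the accelerator modules, not host PCs, cameras, or networking, and real draw varies with load.

```python
def fleet_estimate(nodes: int, unit_price_usd: float, watts_per_node: float,
                   hours_per_year: float = 24 * 365) -> tuple[float, float, float]:
    """Return (module cost in USD, fleet draw in W, energy in kWh/year) for the accelerators only."""
    hardware_usd = nodes * unit_price_usd
    fleet_watts = nodes * watts_per_node
    kwh_per_year = fleet_watts * hours_per_year / 1000.0
    return hardware_usd, fleet_watts, kwh_per_year


cost, watts, kwh = fleet_estimate(nodes=500, unit_price_usd=99, watts_per_node=2.5)
print(cost, watts, kwh)  # 49500 1250.0 10950.0
```

For 500 nodes this comes to $49,500 in modules, a 1,250 W combined draw, and about 10,950 kWh per year.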

Developers Building AI-Powered Applications:

Engineers can prototype on a standard Linux or Windows machine by plugging the module into a compatible M.2 (PCIe) slot and installing Hailo's HailoRT runtime and driver. The Hailo Model Zoo provides pre-trained, pre-optimized models for a variety of tasks, allowing rapid deployment of sophisticated AI features without deep-diving into quantization mathematics.

Autonomous Systems:

For drones and mobile robots, weight and battery life are critical. The Hailo-8’s ability to process multiple high-resolution camera streams simultaneously while drawing less power than a typical LED bulb makes it well suited to autonomous navigation and obstacle avoidance.
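
Battery impact can be estimated by adding the accelerator's draw to the platform's base load. The 100 Wh battery and 20 W base load below are hypothetical values for a small mobile robot; only the 2.5W figure comes from this page, and the model assumes a constant total draw.

```python
def runtime_hours(battery_wh: float, base_load_w: float, accelerator_w: float = 0.0) -> float:
    """Battery runtime assuming a constant total power draw."""
    return battery_wh / (base_load_w + accelerator_w)


# Hypothetical platform: 100 Wh battery, 20 W average base load (drive, sensors, host computer).
without_accel = runtime_hours(100, 20)
with_accel = runtime_hours(100, 20, accelerator_w=2.5)
print(round(without_accel, 2), round(with_accel, 2))  # 5.0 4.44
```

Under these assumptions, adding the module costs roughly 11% of runtime; on platforms with a larger base load, such as most drones, the relative cost is smaller.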

To understand the Hailo-8's value, it helps to compare it against the most common alternatives in the edge AI space.

The Google Coral is a hobbyist favorite due to its low cost and ease of use, but it offers only 4 TOPS. The Hailo-8 provides 6.5x the peak compute (26 vs. 4 TOPS) for roughly double the price. For production-grade workloads, or models like YOLOv8 that need more headroom, the Hailo-8 is the stronger choice for professional practitioners.
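
The price-performance comparison can be expressed as two ratios. This sketch takes the peak TOPS figures from this page (26 vs. 4) and treats "roughly double the price" as exactly 2x using normalized prices. Peak TOPS is not the same as delivered throughput on a specific model, so this is a first-order comparison only.

```python
def relative_value(tops_a: float, price_a: float, tops_b: float, price_b: float) -> tuple[float, float]:
    """Return (compute ratio, TOPS-per-dollar ratio) of device A relative to device B."""
    compute_ratio = tops_a / tops_b
    price_ratio = price_a / price_b
    return compute_ratio, compute_ratio / price_ratio


# Prices normalized so that "roughly double the price" is exactly 2x.
compute, per_dollar = relative_value(tops_a=26, price_a=2.0, tops_b=4, price_b=1.0)
print(compute, per_dollar)  # 6.5 3.25
```

Under these assumptions the Hailo-8 delivers about 3.25x the peak TOPS per dollar.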

The Jetson Orin Nano is a more versatile "System on Module" (SoM) that includes a CPU, GPU, and memory. It supports FP16 and is much better suited for running LLMs like Mistral or Llama 3. However, the Orin Nano is significantly more expensive, requires a dedicated carrier board, and has a higher power draw (up to 15W). If you already have a host processor (like an Intel NUC or an ARM-based gateway) and only need to add AI acceleration for vision, the Hailo-8 is a more efficient and cost-effective "drop-in" upgrade.

While modern laptop CPUs include NPUs, they are often locked behind proprietary drivers or lack the sustained throughput of a dedicated PCIe-based accelerator. The Hailo-8 offers a deterministic performance profile and a dedicated thermal path, making it more reliable for 24/7 industrial inference than a consumer-grade integrated NPU.
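
A deterministic performance profile is straightforward to check on your own system by measuring latency percentiles rather than averages. In this minimal sketch, `infer` is a hypothetical zero-argument callable wrapping whatever inference call your runtime exposes; the gap between p99 and p50 serves as a simple jitter indicator.

```python
import statistics
import time
from typing import Callable


def latency_profile(infer: Callable[[], object], runs: int = 200, warmup: int = 10) -> dict[str, float]:
    """Measure wall-clock latency of repeated calls and report p50, p99, and their gap in ms."""
    for _ in range(warmup):  # let caches, clocks, and lazy initialization settle
        infer()
    samples_ms = []
    for _ in range(runs):
        start = time.perf_counter()
        infer()
        samples_ms.append((time.perf_counter() - start) * 1000.0)
    cuts = statistics.quantiles(samples_ms, n=100)  # 99 cut points: index 49 ~ p50, index 98 ~ p99
    return {"p50_ms": cuts[49], "p99_ms": cuts[98], "jitter_ms": cuts[98] - cuts[49]}


# Placeholder workload; replace the lambda with a call into your inference runtime.
print(latency_profile(lambda: sum(range(10_000))))
```

For 24/7 deployments, run the measurement long enough to capture thermal behavior, since throttling shows up in the tail percentiles first.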

For teams evaluating Hailo edge devices for AI development, the Hailo-8 M.2 module stands out as the most accessible and powerful entry point into the ecosystem, bridging the gap between low-power microcontrollers and power-hungry desktop GPUs.
