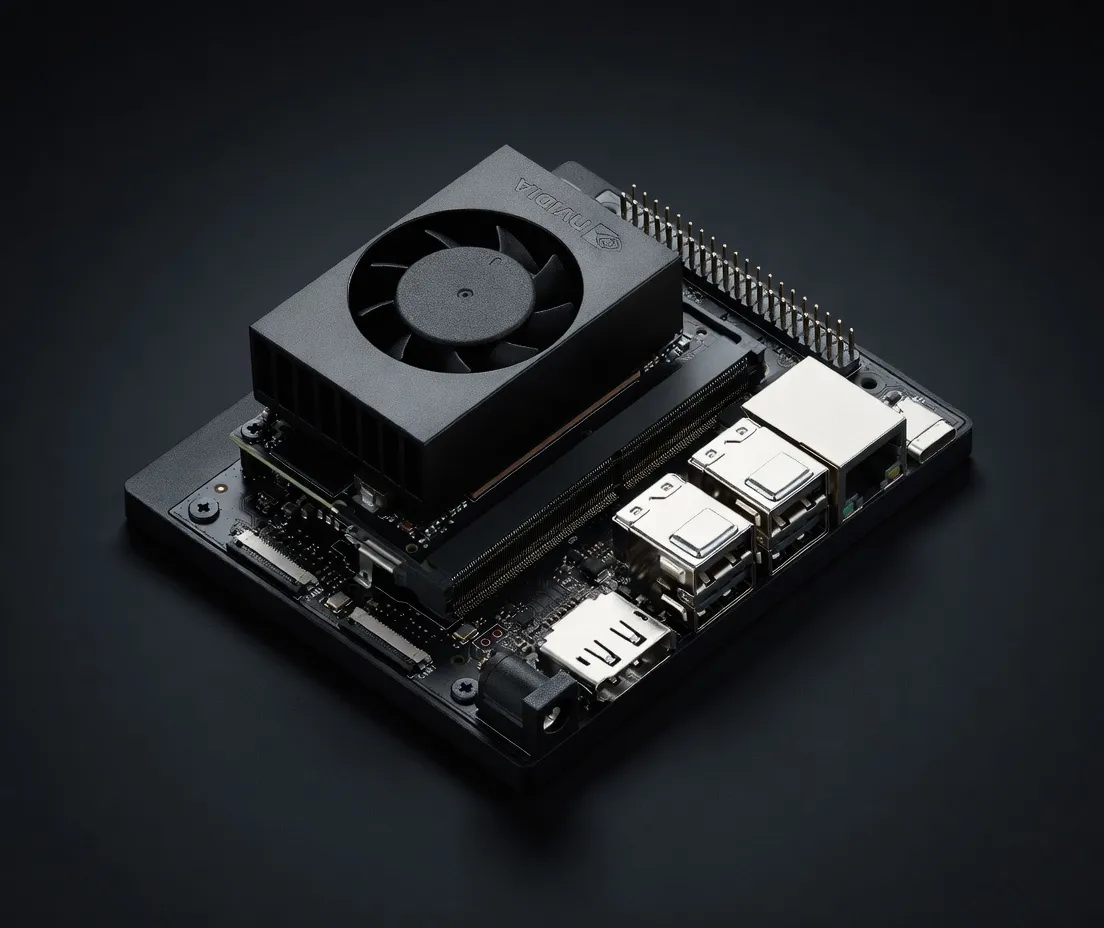

Compact and affordable edge AI dev kit delivering 67 TOPS at just $249. Ideal entry point for developers, students, and makers building edge AI and robotics projects.

The NVIDIA Jetson Orin Nano Super Developer Kit represents the new price-to-performance floor for professional-grade edge AI development. At a $249 MSRP, NVIDIA has positioned this kit as the successor to the original Jetson Nano, offering a massive leap in compute density. While the original Nano struggled with modern transformer architectures, the Orin Nano Super is built specifically to handle the demands of 2025’s agentic workflows and small language models (SLMs).

As part of NVIDIA's lineup of edge devices for AI development, this kit bridges the gap between hobbyist microcontrollers and expensive industrial workstations. It competes directly with the Raspberry Pi 5 (8GB) and the Khadas VIM4, but distinguishes itself through the NVIDIA AI stack. For practitioners, the primary value proposition isn't just the raw hardware; it is the native support for TensorRT, CUDA, and the JetPack SDK, which lets models developed on high-end RTX or A100/H100 systems be deployed on the kit using the same toolchain.

The hardware architecture of the Orin Nano Super is centered around the Ampere GPU, featuring 1,024 CUDA cores and 32 third-generation Tensor Cores. This configuration delivers 67 INT8 TOPS, a significant figure for a device with a maximum TDP of only 25W.

For local AI inference, memory is the primary bottleneck. The Orin Nano Super provides 8GB of LPDDR5 memory. Unlike the dedicated VRAM on desktop GPUs, this is unified memory shared between the CPU and GPU, which is critical for minimizing latency in vision-language models (VLMs) and robotics applications. With a memory bandwidth of 102 GB/s, this kit far outpaces the Raspberry Pi 5 (approx. 12-15 GB/s) and even some entry-level x86 laptops, ensuring that token generation and image processing remain fluid.
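To make the link between bandwidth and token generation concrete, here is a rough back-of-the-envelope sketch. It assumes a dense model decoded at batch size 1, where every generated token requires reading all of the weights from memory once. It ignores KV cache traffic and compute limits, so it gives an upper bound rather than a benchmark. The `efficiency` parameter and the example weight sizes are illustrative assumptions, not measured values.

```python
def decode_tokens_per_second(
    bandwidth_gb_s: float, weights_gb: float, efficiency: float = 1.0
) -> float:
    """Upper bound on decode speed for a memory-bandwidth-bound dense model.

    Each generated token is assumed to stream the full weight set once,
    so the rate is (usable bandwidth) / (bytes read per token).
    """
    if weights_gb <= 0:
        raise ValueError("weights_gb must be positive")
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return efficiency * bandwidth_gb_s / weights_gb


# 102 GB/s is the bandwidth figure quoted for the Orin Nano Super.
ORIN_NANO_SUPER_BANDWIDTH_GB_S = 102.0

# Example weight footprints (assumed, in GB) for quantized models.
for weights_gb in (3.0, 4.0, 5.0):
    rate = decode_tokens_per_second(ORIN_NANO_SUPER_BANDWIDTH_GB_S, weights_gb)
    print(f"{weights_gb:.1f} GB of weights -> at most {rate:.1f} tok/s")
```

Real-world throughput will land below this bound; passing an `efficiency` below 1.0 is one simple way to account for that. Mixture-of-experts models read only their active experts per token, so the dense assumption does not apply to them.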

The device is highly configurable for deployment, with a power range of 7W to 25W. This makes it one of the best edge devices for running AI models locally in environments with strict thermal or power constraints, such as drones, autonomous mobile robots (AMRs), or remote sensor hubs. Practitioners can toggle power profiles via the nvpmodel command to optimize for either maximum throughput or battery longevity.
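A minimal sketch of scripting the power profile switch from Python is shown below. It wraps the `nvpmodel` command line tool, using `-q` to query the current mode and `-m <id>` to select one. The mapping from mode IDs to wattage is defined per device (typically in `/etc/nvpmodel.conf`), so any ID you pass is an assumption to verify on your own board. The helper names are hypothetical.

```python
import subprocess
from typing import List, Optional


def nvpmodel_command(mode_id: Optional[int] = None) -> List[str]:
    """Build the nvpmodel invocation.

    With no mode_id, query the active power mode; otherwise request a switch.
    Mode IDs are device-specific, so check them on the target board first.
    """
    if mode_id is None:
        return ["sudo", "nvpmodel", "-q"]
    if mode_id < 0:
        raise ValueError("mode_id must be non-negative")
    return ["sudo", "nvpmodel", "-m", str(mode_id)]


def run_nvpmodel(mode_id: Optional[int] = None) -> str:
    """Run nvpmodel on the Jetson and return its standard output."""
    result = subprocess.run(
        nvpmodel_command(mode_id),
        capture_output=True,
        text=True,
        check=True,  # raise CalledProcessError if nvpmodel fails
    )
    return result.stdout
```

On the device, `print(run_nvpmodel())` would report the active mode, and `run_nvpmodel(some_id)` would request a switch once the ID has been confirmed against the board's configuration.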

With 8GB of memory, the NVIDIA Jetson Orin Nano Super Developer Kit can comfortably host large language models in the 1B to 7B parameter range, provided they are appropriately quantized.

Running a 7B-class model (such as Llama 3.1 8B or Mistral 7B v0.3) at Q2-Q3 quantization is the upper limit for this hardware. At these quantization levels, the model weights occupy roughly 3GB to 5GB of memory, leaving enough headroom for the KV cache and the Linux OS.
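The arithmetic behind this kind of estimate can be sketched as follows. The weight footprint is approximated as parameters times bits per weight divided by eight. The bits-per-weight values and the 1.5 GB reserved for the OS are illustrative assumptions; real quantized files mix precisions across layers and carry metadata, so actual sizes will differ somewhat.

```python
def quantized_weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight footprint in GB: params * bits / 8 bits-per-byte."""
    return params_billion * bits_per_weight / 8.0


def headroom_gb(total_gb: float, weights_gb: float, reserved_gb: float) -> float:
    """Memory left for the KV cache and activations after weights and OS."""
    return total_gb - weights_gb - reserved_gb


TOTAL_MEMORY_GB = 8.0   # unified memory on the Orin Nano Super
OS_RESERVED_GB = 1.5    # assumed share for Linux and background services

for params_billion in (7.0, 8.0):
    for bits in (3.0, 4.0):  # assumed effective bits per weight
        weights = quantized_weights_gb(params_billion, bits)
        free = headroom_gb(TOTAL_MEMORY_GB, weights, OS_RESERVED_GB)
        print(
            f"{params_billion:.0f}B @ {bits:.1f} bits: "
            f"{weights:.2f} GB weights, {free:.2f} GB headroom"
        )
```

Because the memory is unified, whatever the CPU side consumes comes out of the same 8GB, which is why the reserved figure matters more here than on a desktop GPU.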

While LLMs are the current trend, this kit is arguably the best for computer vision at its price point. It can run multiple concurrent streams of YOLOv8 or YOLOv10 for object detection at 30+ FPS. For multimodal tasks, it can handle CLIP-based image encoding and smaller Vision-Language Models (VLMs) like Moondream2, enabling agents that can "see" and reason about their environment in real-time.

For local AI agents, this is among the strongest options in the sub-$300 category in 2025. Because it supports the full ROS 2 (Robot Operating System) ecosystem and NVIDIA Isaac ROS, it is a common choice for engineers building autonomous workflows. The dual MIPI CSI-2 camera connectors allow for stereo vision or wider situational awareness from two viewpoints, which is essential for edge-based navigation.

For teams building agentic workflows that require privacy, the Orin Nano Super acts as a secure, local inference node. Instead of sending sensitive data to a cloud API, developers can host a local API endpoint (using tools like Ollama or LocalAI) on the Orin Nano Super to handle PII-sensitive summarization or data extraction tasks.
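As an illustration of the local-endpoint pattern, the sketch below posts a summarization prompt to an Ollama server running on the device itself, so the text never leaves the board. It assumes Ollama is installed and listening on its default port (11434) and that a model has already been pulled. The model tag `llama3.2:1b` and the helper names are placeholders, not recommendations from the source.

```python
import json
import urllib.request

# Default address of a locally running Ollama server (assumed setup).
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(
    prompt: str, model: str = "llama3.2:1b", url: str = OLLAMA_URL
) -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def summarize_locally(
    text: str, model: str = "llama3.2:1b", timeout: float = 120.0
) -> str:
    """Send text to the local model and return its summary.

    The request only ever targets localhost, so sensitive input stays on
    the device.
    """
    prompt = "Summarize the following text in two sentences:\n\n" + text
    request = build_generate_request(prompt, model=model)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["response"]
```

Calling `summarize_locally(document_text)` on the kit returns the generated summary as a string. The same request shape works from other machines on a private network if the URL is pointed at the board's address instead of localhost.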

As a budget-friendly entry point, it allows students to learn the NVIDIA ecosystem without the $2,000+ investment required for an AGX Orin. It is a "deploy-ready" dev kit; once a model is optimized on this hardware, it can be ported to the higher-end Jetson Orin NX or AGX Orin modules with minimal code changes.

When evaluating the NVIDIA Jetson Orin Nano Super Developer Kit vs. competitors, the choice usually comes down to the software ecosystem and specialized AI silicon.

For practitioners looking to deploy 7B models at the edge or build sophisticated autonomous agents, the NVIDIA Jetson Orin Nano Super Developer Kit is currently the most cost-effective way to access the CUDA ecosystem in a low-power, high-throughput package.

| Model | Developer | Parameters | Grade | Speed | Memory |
| --- | --- | --- | --- | --- | --- |
| Qwen3-30B-A3B | Alibaba Cloud (Qwen) | 30B (3B active) | A | 15.2 tok/s | 5.4 GB |
| | | 8B | A | 14.5 tok/s | 5.7 GB |
| | | 9B | B | 13.7 tok/s | 6.0 GB |
| Llama 2 7B Chat | Meta | 7B | B | 17.1 tok/s | 4.8 GB |
| Gemma 4 E2B IT | Google | 2B | B | 22.1 tok/s | 3.7 GB |
| Mistral 7B Instruct | Mistral AI | 7B | B | 12.8 tok/s | 6.4 GB |
| Gemma 4 E4B IT | Google | 4B | B | 11.9 tok/s | 6.9 GB |
| Gemma 3 4B IT | Google | 4B | B | 11.9 tok/s | 6.9 GB |
| Qwen3.6 35B-A3B | Alibaba Cloud | 35B (3B active) | D | 9.6 tok/s | 8.5 GB |
| Qwen3.5-35B-A3B | Alibaba Cloud (Qwen) | 35B (3B active) | D | 9.6 tok/s | 8.5 GB |
| Llama 2 13B Chat | Meta | 13B | D | 9.7 tok/s | 8.5 GB |
| | | 8B | F | 6.2 tok/s | 13.3 GB |
| Qwen3.5-9B | Alibaba Cloud (Qwen) | 9B | F | 3.3 tok/s | 24.6 GB |
| Mistral Small 3 24B | Mistral AI | 24B | F | 2.1 tok/s | 39.0 GB |
| Gemma 4 26B-A4B IT | Google | 26B (4B active) | F | 7.5 tok/s | 11.0 GB |
| Qwen3.6-27B | Alibaba Cloud | 27B | F | 1.1 tok/s | 72.8 GB |
| Gemma 3 27B IT | Google | 27B | F | 1.9 tok/s | 43.8 GB |
| Qwen3.5-27B | Alibaba Cloud (Qwen) | 27B | F | 1.1 tok/s | 72.8 GB |
| Gemma 4 31B IT | Google | 31B | F | 1.0 tok/s | 82.0 GB |
| Qwen3-32B | Alibaba Cloud (Qwen) | 32.8B | F | 1.5 tok/s | 53.9 GB |
| Falcon 40B Instruct | Technology Innovation Institute | 40B | F | 3.4 tok/s | 24.4 GB |
| Mixtral 8x7B Instruct | Mistral AI | 46.7B (12.9B active) | F | 7.2 tok/s | 11.4 GB |
| LLaMA 65B | Meta | 65B | F | 2.1 tok/s | 39.3 GB |
| Llama 2 70B Chat | Meta | 70B | F | 1.9 tok/s | 43.4 GB |
| | | 70B | F | 1.8 tok/s | 45.7 GB |