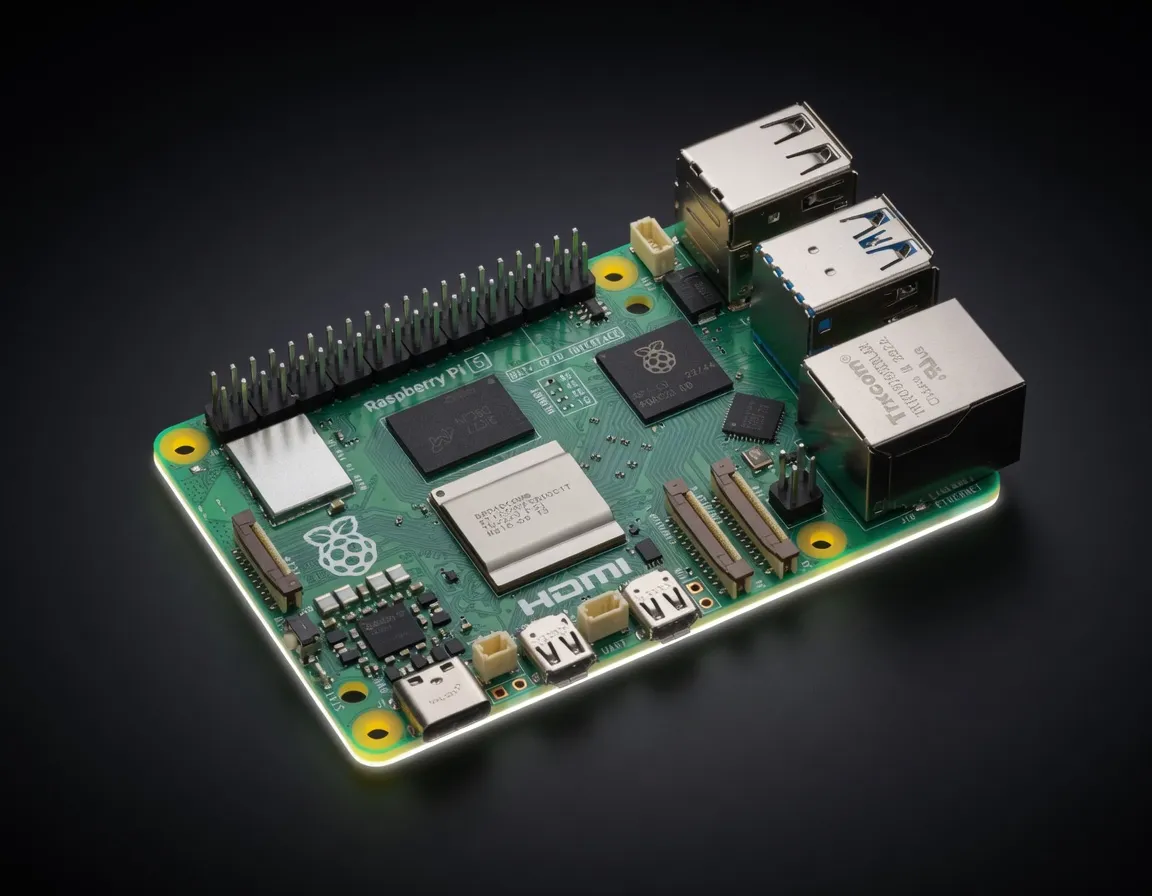

The latest Raspberry Pi single-board computer with a 2.4 GHz quad-core Arm Cortex-A76 CPU. Can run basic AI inference when paired with the Raspberry Pi AI HAT+ and Hailo-8L accelerator.

The Raspberry Pi 5 (8GB) represents a significant shift for the Raspberry Pi Foundation, moving from a general-purpose hobbyist board to a viable entry-point for edge AI development. While previous iterations struggled with the compute demands of modern neural networks, the Pi 5’s Broadcom BCM2712 silicon and 8GB LPDDR4X-4267 memory provide the necessary overhead for running quantized Small Language Models (SLMs) and computer vision tasks at the edge.

For engineers building autonomous workflows, the Pi 5 (8GB) serves as a low-power gateway for local inference. It occupies a specific niche: more capable than a microcontroller but significantly more power-efficient and cost-effective than an entry-level NVIDIA Jetson or a dedicated x86 NPU build. When evaluating the Raspberry Pi 5 (8GB) for AI, the primary value proposition is its ecosystem and the ability to offload specific compute tasks to the optional Raspberry Pi AI HAT+, which integrates Hailo-8L or Hailo-8 accelerators.

The hardware signature of the Raspberry Pi 5 (8GB) is defined by its 34 GB/s memory bandwidth and its 12W TDP. For AI practitioners, memory bandwidth is the primary bottleneck for token generation in LLMs. At 34 GB/s, the Pi 5 offers a substantial leap over the Pi 4, though it remains well below the throughput of dedicated AI workstations.

On its own, the Quad-core Cortex-A76 CPU handles INT8 operations reasonably well for lightweight tasks, but for production-grade edge AI, the AI HAT+ is required.

The 13 TOPS provided by the standard AI HAT+ puts the Raspberry Pi 5 (8GB) AI inference performance in direct competition with the NVIDIA Jetson Orin Nano (lower tier). While it lacks the CUDA ecosystem, the Hailo integration provides a high-efficiency alternative for vision transformers and object detection models without the thermal overhead of a dedicated GPU.

When considering the Raspberry Pi 5 (8GB) local LLM capabilities, practitioners must focus on Small models only (1-3B quantized). Attempting to run 7B or 8B models (like Llama 3.1 8B) is technically possible with heavy 4-bit quantization, but the tokens per second (t/s) often fall below the threshold of usability for real-time agentic workflows.

With the AI HAT+, the Pi 5 excels at real-time vision. It can handle:

For those looking at 8GB VRAM for large language models, it is important to remember that the Pi 5 does not have a discrete GPU. All 8GB is shared. After OS overhead, you have roughly 7GB available for the model weights and KV cache. This strictly limits you to models under 5 billion parameters if you want to avoid heavy swapping to the microSD or NVMe storage.

The Raspberry Pi 5 (8GB) is not a training platform; it is a deployment target. It is one of the best edge devices for running AI models locally when power constraints and physical footprint are the primary concerns.

Engineers building autonomous robots or smart home hubs use the Pi 5 as a centralized controller. Because it consumes only 12W, it can run on battery power or PoE (Power over Ethernet) for extended periods, making it ideal for remote sensing and real-time data processing where an x86 server is impractical.

For developers building agentic workflows, the Pi 5 functions as a "worker node." It can handle task routing, local embedding generation, or acting as a gateway for more complex models running in the cloud. It is the best hardware for local AI agents in 2025 for those who need to deploy 50+ units at scale without the $500+ per-unit cost of higher-end modules.

Hobbyists running local chatbots prefer the 8GB model because it allows for larger context windows compared to the 4GB variant. By using llama.cpp or Ollama, users can maintain a fully private, offline interaction layer for home automation.

Choosing the best AI chip for local deployment requires weighing the ecosystem against raw TFLOPS.

The Orange Pi 5 Plus utilizes the Rockchip RK3588, which features a built-in 6 TOPS NPU. While the Orange Pi has a higher native NPU performance than a "naked" Raspberry Pi 5, the Raspberry Pi Foundation edge devices for AI development benefit from a significantly more mature software stack. The availability of the Hailo-8 AI HAT+ gives the Raspberry Pi a higher ceiling (13-26 TOPS) than the RK3588's internal NPU.

The Jetson Orin Nano is the gold standard for edge AI, offering superior performance and the CUDA toolkit. However, at an MSRP of $80 for the Pi 5 (plus ~$70 for the AI HAT+), the Raspberry Pi solution is roughly half the price of a Jetson Orin Nano Developer Kit. If your workflow doesn't explicitly require CUDA, the Pi 5 is the more budget-friendly approach for high-volume deployments.

The Raspberry Pi 5 (8GB) remains the most accessible entry point for practitioners to move AI models out of the cloud and into the physical world. While it won't run a 70B parameter model, its ability to handle 1-3B quantized models and high-speed vision tasks makes it a staple in the 2025 AI hardware toolkit.

Qwen3-30B-A3BAlibaba Cloud (Qwen) | 30B(3B active) | BB | 5.1 tok/s | 5.4 GB | |

| 8B | BB | 4.8 tok/s | 5.7 GB | ||

| 9B | BB | 4.6 tok/s | 6.0 GB | ||

Llama 2 7B ChatMeta | 7B | BB | 5.7 tok/s | 4.8 GB | |

Mistral 7B InstructMistral AI | 7B | BB | 4.3 tok/s | 6.4 GB | |

Gemma 4 E2B ITGoogle | 2B | BB | 7.4 tok/s | 3.7 GB | |

Gemma 4 E4B ITGoogle | 4B | CC | 4.0 tok/s | 6.9 GB | |

Gemma 3 4B ITGoogle | 4B | CC | 4.0 tok/s | 6.9 GB | |

Qwen3.6 35B-A3BAlibaba Cloud | 35B(3B active) | DD | 3.2 tok/s | 8.5 GB | |

Qwen3.5-35B-A3BAlibaba Cloud (Qwen) | 35B(3B active) | DD | 3.2 tok/s | 8.5 GB | |

Llama 2 13B ChatMeta | 13B | DD | 3.2 tok/s | 8.5 GB | |

| 8B | FF | 2.1 tok/s | 13.3 GB | ||

Qwen3.5-9BAlibaba Cloud (Qwen) | 9B | FF | 1.1 tok/s | 24.6 GB | |

Mistral Small 3 24BMistral AI | 24B | FF | 0.7 tok/s | 39.0 GB | |

Gemma 4 26B-A4B ITGoogle | 26B(4B active) | FF | 2.5 tok/s | 11.0 GB | |

Qwen3.6-27BAlibaba Cloud | 27B | FF | 0.4 tok/s | 72.8 GB | |

Gemma 3 27B ITGoogle | 27B | FF | 0.6 tok/s | 43.8 GB | |

Qwen3.5-27BAlibaba Cloud (Qwen) | 27B | FF | 0.4 tok/s | 72.8 GB | |

Gemma 4 31B ITGoogle | 31B | FF | 0.3 tok/s | 82.0 GB | |

Qwen3-32BAlibaba Cloud (Qwen) | 32.8B | FF | 0.5 tok/s | 53.9 GB | |

Falcon 40B InstructTechnology Innovation Institute | 40B | FF | 1.1 tok/s | 24.4 GB | |

Mixtral 8x7B InstructMistral AI | 46.7B(12.9B active) | FF | 2.4 tok/s | 11.4 GB | |

LLaMA 65BMeta | 65B | FF | 0.7 tok/s | 39.3 GB | |

Llama 2 70B ChatMeta | 70B | FF | 0.6 tok/s | 43.4 GB | |

| 70B | FF | 0.6 tok/s | 45.7 GB |