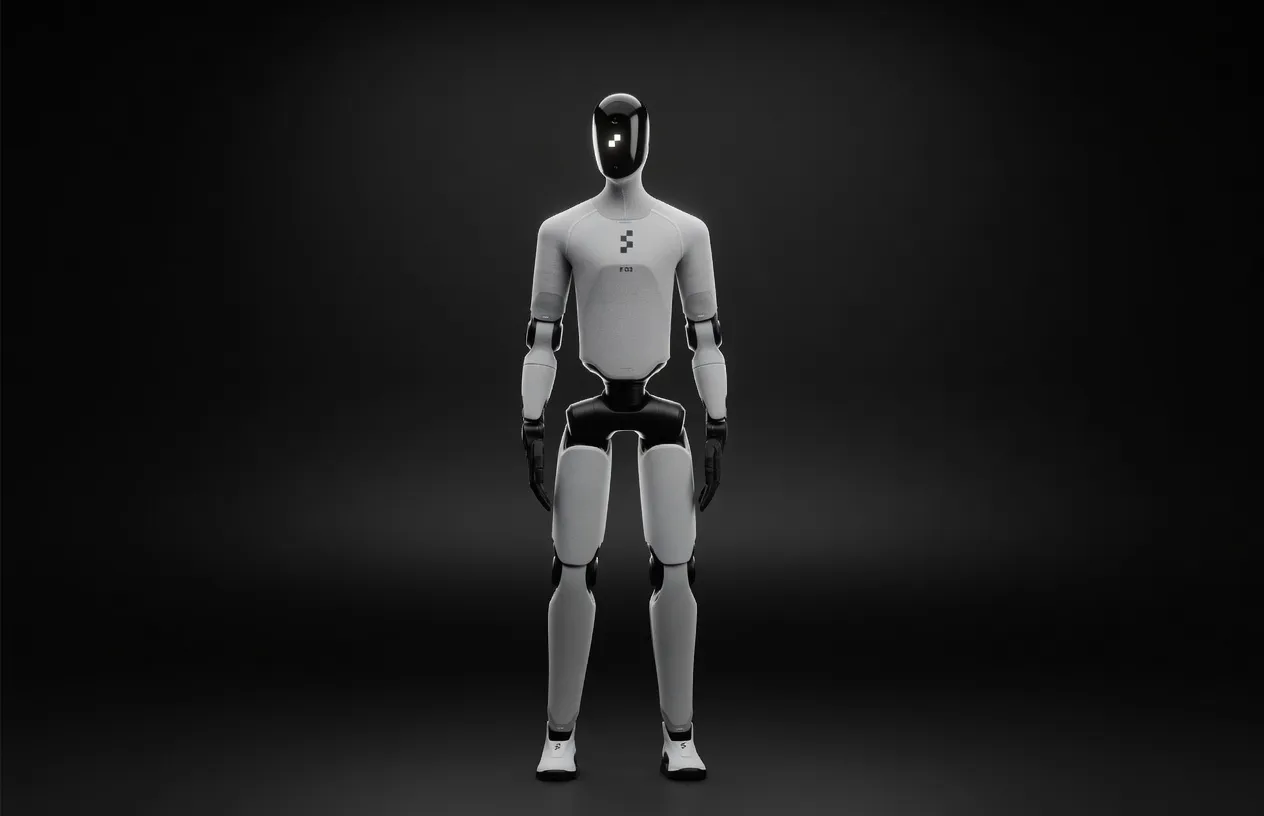

Figure AI's third-generation general-purpose humanoid robot, designed for home and commercial environments. Standing 5'8" at 61 kg with 30 DOF, it features Helix VLA AI, wireless inductive charging, soft textile coverings, and 3-gram tactile sensitivity fingertips. Named TIME Best Invention of 2025.

Figure 03 is the first general-purpose humanoid that ships with an embedded GPU pair and a production-grade VLA (vision-language-action) stack you can actually run locally. At 61 kg and 5'8" it is lighter than a Tesla Optimus prototype and carries the same 20 kg payload, but the real news for AI engineers is the on-board compute: dual embedded GPUs (NVIDIA Jetson-class) that give you 64 GB unified VRAM and a 400 GB/s memory fabric—enough to load a 70B-parameter dense model in 4-bit without offloading. Figure AI’s own Helix model is pre-flashed, but the robot boots into Ubuntu 22.04 and exposes the GPU block as standard CUDA devices, so you can swap in any transformer you want. That makes Figure 03 the only humanoid today that doubles as an edge inference box you can literally walk around your lab.

The target segment is prosumer: priced around $20 k (late-2026 consumer ship), it sits between the $16 k Unitree G1 developer kit and the $25 k Tesla Optimus reservation. If you need a mobile manipulator that can also serve 30–40 tokens/s from a quantized Llama 3.1 70B, this is the only hardware that checks both boxes without a server rack.

Compute

Memory & Model Fit

Power & Thermals

Against stationary edge boxes:

Figure 03 gives you 70 % of the Orin’s throughput while walking, carrying, and streaming 6× 60 fps camera feeds.

Sweet-spot quantization on 64 GB VRAM:

| Model | Size (GB) | Quant | Tokens/s (batch=1) | Notes |

|-------|-----------|-------|--------------------|-------|

| Llama 3.1 70B | 35 | 4-bit AWQ | 38 | 2048 ctx, 8 k KV-cache |

| DeepSeek-R1 70B | 35 | 4-bit GPTQ | 34 | reasoning traces at 28 W |

| Qwen2-VL 7B | 8 | 16-bit | 120 | vision + text, 224×224 im |

| Mixtral 8×7B | 45 | 3-bit | 52 | MoE, 8 k ctx |

| Llama 3.2 3B | 3 | 16-bit | 210 | real-time voice pipeline |

Multimodal: Helix VLA is already loaded; you can co-host a Qwen2-VL or LLaVA-1.6 alongside it—CUDA contexts are isolated via MIG slices.

Long-context: with 64 GB you can push 128 k tokens on Llama 3.1 8B (16-bit) while still walking; beyond that swap to disk over 10 Gbps mmWave offload to a rack server if needed.

Training vs. inference: Figure 03 is inference-first. Fine-tune small adapters (LoRA) on-device, but full-weight 70B training still needs a data-center cluster.

1X NEO Beta ($20 k, shipping now)

Pick NEO if you need a soft-skinned robot today and your AI runs off-board; pick Figure 03 if you want the GPU inside the chassis and 70B local.

Tesla Optimus Gen-2 (est. $25 k, 2027)

Optimus will win on raw TFLOPS once Tesla opens the stack; until then Figure 03 is the only humanoid you can SSH into and run ollama run llama70b out of the box.

Bottom line: if your evaluation metric is “tokens per second per kilogram while walking,” Figure 03 is the best hardware announced so far for running large language models locally on a humanoid robot.

Specs not available for scoring. This product is missing VRAM or memory bandwidth data.