AI code has security flaws in 45% of tests. Learn to scan and fix vibe coded apps with Snyk, Semgrep, Nuclei, and AI hacking agents.

Developing and Securing AI Apps with Local Models and Vibe-Coding

AI is pumping out code at an insane rate right now. Non-developers are building and shipping entire web apps in minutes. And most of that code has never been reviewed by a single human being.

Here's the problem: according to Veracode, AI-generated code introduced risky security flaws in 45% of tests 1. Almost half. And that's not even counting the vulnerabilities that sneak in through external packages, plugins, and the fact that vibe coders rarely maintain anything or follow real dev practices.

So I ran an experiment. I vibe coded a full web app with Claude Code — no manual code review, no technical knowledge assumed — deployed it to the internet, and then threw every security tool I could find at it. Static scanners, AI security reviews, vulnerability scanners, even AI hacking agents.

Here's exactly what I found, what each tool caught, and what they all missed.

Vibe coding is a term coined by Andrej Karpathy — former Tesla AI director and OpenAI co-founder — to describe the practice of building software purely through AI prompts 2. You describe what you want, the AI writes the code, and you accept it without reviewing or even understanding what it produced.

It's powerful. You can spin up a fully functional app in minutes. But the code that comes out the other side often has serious security holes that you'd never know about unless you specifically look for them.

The typical vibe coder sets auto-accept on their AI coding tool and just lets it run. If you're not a developer, you probably don't understand what each command is doing: what it's installing, what permissions it's granting, what shortcuts it's taking. And that's exactly where things go wrong.

How secure is AI-generated code? Short answer: not very. Research consistently shows that AI coding tools produce vulnerable code at alarming rates. Stanford researchers found that GitHub Copilot generated insecure code in roughly 40% of security-relevant scenarios, particularly around cryptographic operations and SQL queries 3. Snyk's 2025 Developer Security Report found that 67% of developers using AI coding assistants rarely or never reviewed the generated code for security issues 4.

And here's what makes vibe coding specifically dangerous compared to regular AI-assisted development: there's zero human review in the loop. A developer using Copilot might catch an obvious SQL injection. A vibe coder won't — because they're not reading the code at all.
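To make "an obvious SQL injection" concrete, here is a minimal sketch of the pattern a reviewer would spot. Both function names are hypothetical and nothing here touches a real database; the point is only the difference between concatenating input into the SQL text and passing it as a bound parameter (the `?` placeholder style used by SQLite).

```typescript
// Hypothetical illustration: neither function talks to a real database.
interface ParameterizedQuery {
  sql: string;
  params: string[];
}

// Vulnerable pattern: user input is concatenated straight into the SQL text.
function buildLoginQueryUnsafe(email: string): string {
  return `SELECT id FROM users WHERE email = '${email}'`;
}

// Safer pattern: the SQL text is fixed and the input travels separately
// as a bound parameter, so it can never change the query's structure.
function buildLoginQuerySafe(email: string): ParameterizedQuery {
  return { sql: "SELECT id FROM users WHERE email = ?", params: [email] };
}

const attackerInput = "x' OR '1'='1";
console.log(buildLoginQueryUnsafe(attackerInput)); // the OR clause becomes part of the query
console.log(buildLoginQuerySafe(attackerInput)); // the SQL text is unchanged by the input
```

A developer reading the first function would likely flag it on sight. A vibe coder on auto-accept never sees it.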

The vulnerabilities aren't just in the code AI writes directly. They also come from:

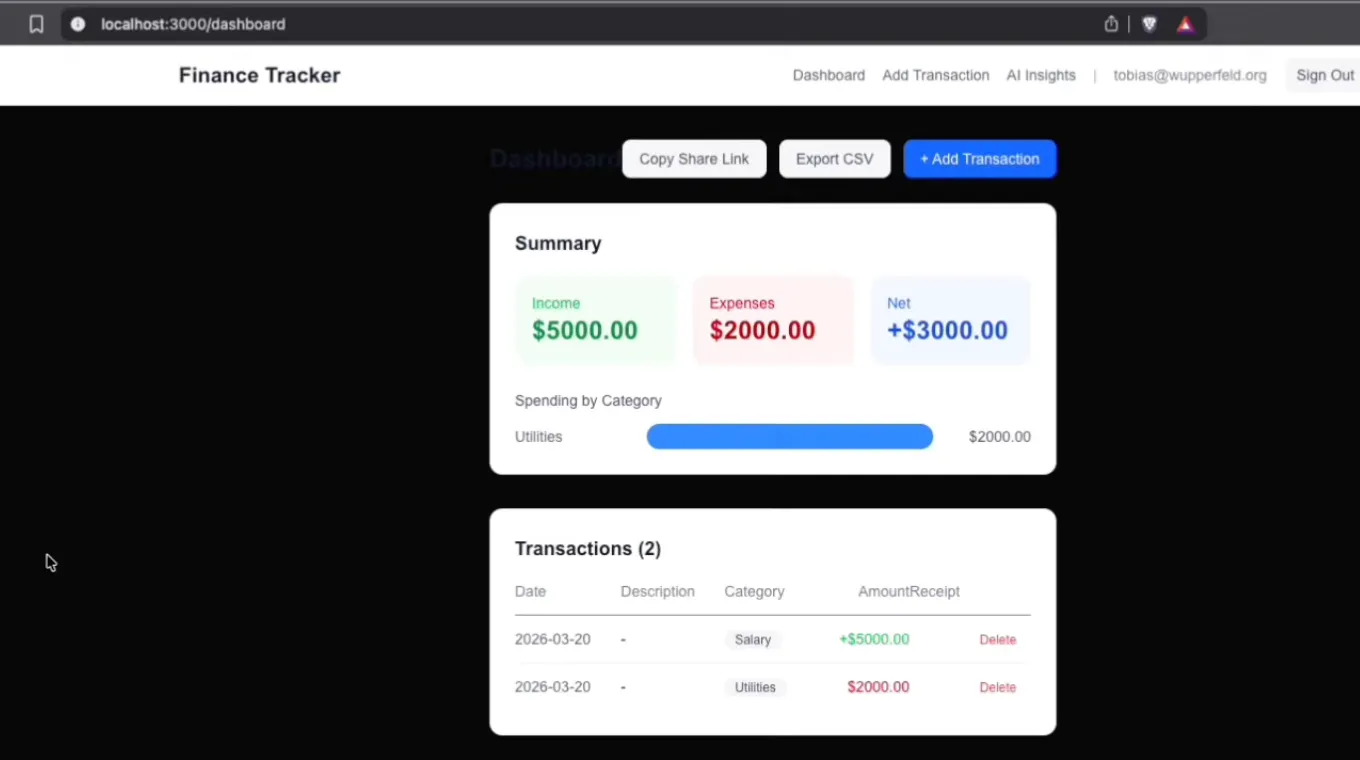

To test this properly, I built a personal finance tracker from scratch using Claude Code within Claude Desktop. No terminal usage, no technical knowledge — just a plain English prompt:

_Build a personal finance tracker with AI insights. Login/signup, add transactions, upload receipts, AI spending summary, share dashboard, admin page with stats, and CSV export. Keep it simple and quick._

Claude came back with a plan. SQLite database, Next.js, NextAuth — didn't even ask me follow-up questions. I approved the plan, set auto-accept on edits, and let it build the entire thing. Within minutes, I had a working app with login, transactions, file uploads, AI-powered spending insights (using DeepSeek via OpenRouter), and a shareable dashboard.

Then I deployed it to Vercel. One prompt. Done. Live on the internet.

Now the real question: how secure is this thing?

Finance tracker dashboard

The first layer of defense is static analysis — tools that scan your code and dependencies without actually running the app.

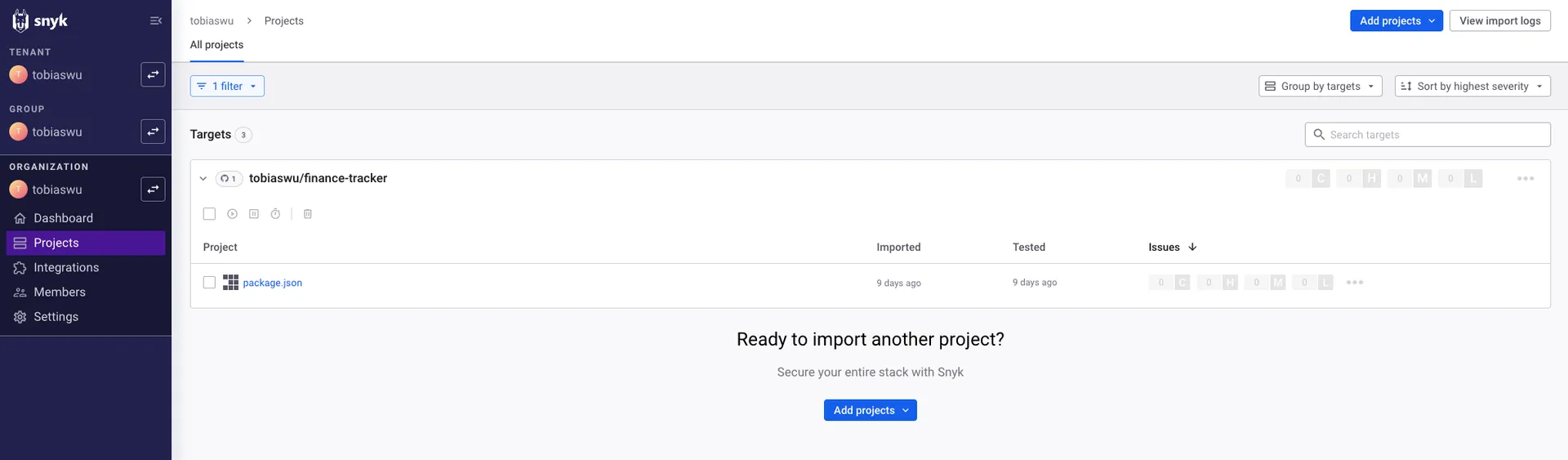

Snyk is one of the most popular options. It has a massive database of known vulnerabilities and scans your dependencies and packages. You connect it to your GitHub repo and it starts scanning automatically. They have a free plan, so there's really no excuse not to use it.

Snyk dashboard showing 0 results for finance tracker app

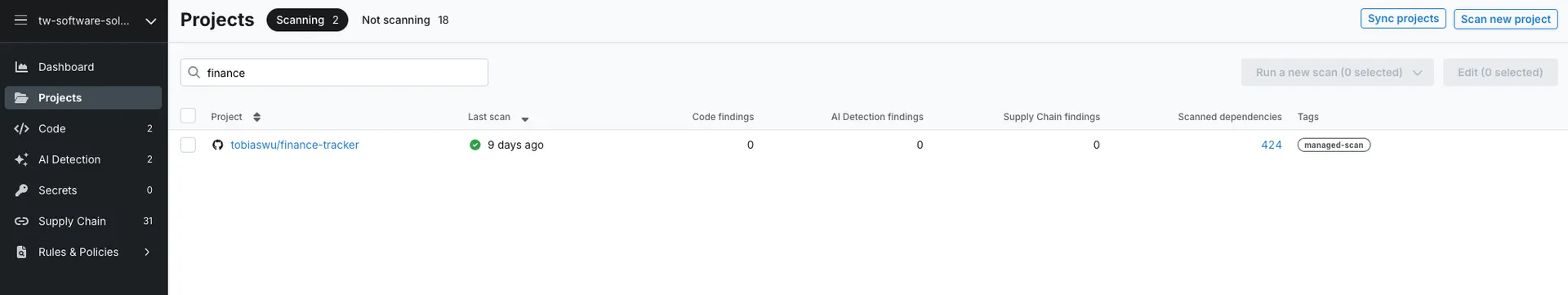

Semgrep takes a different approach. It's open source, uses rule-based static analysis that catches custom patterns, and can run in your CI pipeline. It also scans pull requests automatically and periodically checks for security issues.

Semgrep dashboard showing 0 results for finance tracker app

What they found: basically nothing. Because I just created the app, all the packages were on their latest versions. No known vulnerabilities yet. But here's the important nuance — _give it a few months_. Dependencies get outdated fast. Known vulnerabilities get disclosed. And if you're not actively updating (which most vibe coders aren't), both tools will start lighting up with issues.

In my other, older projects, Snyk flagged several issues simply because I hadn't updated dependencies in a while. That's the thing most developers also skip — and vibe coders almost never do.

Claude Code has a built-in /security-review command that instructs it to scan your codebase for security flaws. You can also just ask it directly: _"Scan this entire app for security flaws."_

This is where things got interesting. Claude found what the static scanners missed:

Claude immediately came up with a plan to fix all of these. You can approve the plan and let it harden the app for you. One prompt, and you're significantly more secure.

Nuclei is a fast, customizable vulnerability scanner powered by thousands of community-built YAML templates 5. It's open source, free, and currently has over 9,000 detection templates covering everything from missing security headers to known CVEs.

To set it up, install it via Homebrew (brew install nuclei), update the templates (nuclei -update-templates), and point it at your deployed app:

nuclei -u https://your-app.vercel.app

Nuclei found 18 issues, including multiple missing security headers — things like Content-Security-Policy, X-Frame-Options, and Strict-Transport-Security headers that prevent common attacks like clickjacking and XSS.
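With 18 findings it helps to triage by severity before fixing anything. The sketch below assumes you re-run the scan with Nuclei's JSON Lines output (the -jsonl flag in recent versions; check nuclei -h for yours) and that each record carries an `info.severity` field. The sample records are made up for illustration and are not output from my scan.

```typescript
// Minimal shape of one Nuclei JSONL record; only the fields used here.
// Assumption: field names match your Nuclei version's output.
interface NucleiFinding {
  "template-id": string;
  info: { name: string; severity: string };
}

// Count findings per severity level from JSON Lines text.
function countBySeverity(jsonl: string): Record<string, number> {
  const counts: Record<string, number> = {};
  for (const line of jsonl.split("\n")) {
    const trimmed = line.trim();
    if (trimmed === "") continue; // skip blank lines
    const finding = JSON.parse(trimmed) as NucleiFinding;
    const severity = finding.info.severity;
    counts[severity] = (counts[severity] ?? 0) + 1;
  }
  return counts;
}

// Hypothetical sample records with placeholder template IDs.
const sampleJsonl = [
  '{"template-id":"example-missing-header-a","info":{"name":"Example A","severity":"info"}}',
  '{"template-id":"example-missing-header-b","info":{"name":"Example B","severity":"info"}}',
  '{"template-id":"example-weak-config","info":{"name":"Example C","severity":"low"}}',
].join("\n");

console.log(countBySeverity(sampleJsonl));
```

Anything above "info" is worth reading yourself before you hand the whole list to an AI tool.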

The fix is straightforward: copy the Nuclei output, paste it into Claude Code, and say _"Help me fix these."_ It'll add the right headers and configurations.
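For reference, this is roughly the kind of change to expect in a Next.js project: an async `headers()` function in the Next config that attaches response headers to every route. Treat it as a sketch, not what Claude actually generated for my app. The header values are assumptions, and the Content-Security-Policy shown is a strict placeholder that most real apps will need to loosen. The types are declared locally so the snippet compiles without importing from "next".

```typescript
// Local stand-ins for the shapes Next.js expects from `headers()`.
interface Header {
  key: string;
  value: string;
}
interface HeaderRule {
  source: string;
  headers: Header[];
}

// Assumed values; tune each one for your own app before shipping.
const securityHeaders: Header[] = [
  // Placeholder policy: only allow resources from the app's own origin.
  { key: "Content-Security-Policy", value: "default-src 'self'" },
  // Refuse to be rendered inside frames (clickjacking defense).
  { key: "X-Frame-Options", value: "DENY" },
  // Tell browsers to use HTTPS only for the next two years.
  { key: "Strict-Transport-Security", value: "max-age=63072000; includeSubDomains" },
];

const nextConfig = {
  // Apply the headers to every route.
  async headers(): Promise<HeaderRule[]> {
    return [{ source: "/(.*)", headers: securityHeaders }];
  },
};

// In a real project this object would be the default export of next.config.ts.
console.log(securityHeaders.map((h) => h.key).join(", "));
```

After deploying a change like this, re-run Nuclei to confirm the missing-header findings are gone.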

This is the most fascinating part. Strix is an open-source tool that deploys AI agents to actually _attack_ your application 6. These agents have browser access, terminal access, and specialized security tools like Nuclei built in. They try to hack your app the way a real attacker would.

You need Docker running, an LLM API key (I used DeepSeek via OpenRouter to keep costs down), and your target URL:

strix --target https://your-app.vercel.app

The root agent spawns sub-agents with different specializations — one explores the login page and tests for SQL injection, another analyzes JWT tokens and session management, another tries to bypass authentication entirely.

Watching it work is genuinely impressive. It's testing SQL injection on login forms, analyzing session cookies, probing for broken authentication, and documenting everything.

The results: Overall risk posture was rated low. The app had decent baseline security thanks to Vercel's security checkpoint, NextAuth's CSRF implementation, and secure cookie configurations. But Strix still recommended session token rotation, security header improvements (echoing Nuclei's findings), MFA implementation, and enhanced logging.

The cost: About $3.50 using DeepSeek, burning through 17 million tokens. Running this with a more expensive model like Claude Opus would cost significantly more. You can set spending limits on your API key to control this.
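The arithmetic behind that number is worth a quick sketch, because it lets you estimate a run before you pay for it. The $3.50 and 17 million tokens come from my run. The 20x multiplier is a hypothetical stand-in for "a more expensive model", not a real price quote.

```typescript
// Blended price per million tokens implied by a finished run.
function impliedPricePerMillion(totalCostUsd: number, totalTokens: number): number {
  return totalCostUsd / (totalTokens / 1_000_000);
}

// Estimated cost of a run, given a token count and a per-million price.
function estimateCostUsd(tokens: number, pricePerMillionUsd: number): number {
  return (tokens / 1_000_000) * pricePerMillionUsd;
}

// From the run described above: about $3.50 for 17 million tokens,
// which works out to roughly $0.21 per million tokens.
const blendedRate = impliedPricePerMillion(3.5, 17_000_000);

// Hypothetical: same token usage on a model that costs 20x more per token.
const pricierRun = estimateCostUsd(17_000_000, blendedRate * 20);

console.log(`blended rate: $${blendedRate.toFixed(2)} per million tokens`);
console.log(`same run at 20x the price: $${pricierRun.toFixed(2)}`);
```

Token usage will vary with the size of your app and how deep the agents dig, so treat any estimate as a rough ceiling for your spending limit.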

Pro tip: Run Strix with the -n flag for headless mode — it prints real-time findings and the final report directly in the CLI. Also use the -t flag to chain multiple targets together, scanning both your deployed app and your source code.

Here's the breakdown:

But here's the thing none of them caught: anyone could sign up for my personal finance tracker and generate AI insights using my OpenRouter API key, burning through my credits.

That's a business logic flaw. I didn't want a public signup page — I wanted a private app where only I can log in. But the AI built a public registration flow because that's what "login/signup functionality" means in most contexts. No static scanner, no vulnerability scanner, and no AI hacking agent flagged this as a problem because technically, the signup flow works exactly as implemented.

This is the fundamental blind spot of automated security tools: they can tell you if something is _broken_, but they can't tell you if something is _wrong for your specific use case_.
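Once a human spots a flaw like this, the fix is usually small. Here is a minimal sketch of two guards that would have closed my hole: an email allowlist on signup and a daily cap on AI insight requests per user. Everything in it is hypothetical (the names, the example address, the limit), and the in-memory counter is only for illustration. On a serverless host like Vercel you would need to keep the counts in a database or other shared store.

```typescript
// Hypothetical allowlist: only these addresses may create an account.
const ALLOWED_EMAILS = new Set<string>(["owner@example.com"]);

// Reject signups from anyone not on the allowlist.
function isSignupAllowed(email: string, allowlist: Set<string> = ALLOWED_EMAILS): boolean {
  return allowlist.has(email.trim().toLowerCase());
}

// Simple in-memory daily cap on AI insight requests per user.
class DailyQuota {
  private readonly limit: number;
  private readonly counts = new Map<string, number>();

  constructor(limit: number) {
    this.limit = limit;
  }

  // Returns true and records the request if the user is under today's limit.
  tryConsume(userId: string, day: string): boolean {
    const key = `${userId}:${day}`;
    const used = this.counts.get(key) ?? 0;
    if (used >= this.limit) return false;
    this.counts.set(key, used + 1);
    return true;
  }
}

const insightQuota = new DailyQuota(2); // hypothetical limit of 2 requests per day
console.log(isSignupAllowed("stranger@example.com"));
console.log(insightQuota.tryConsume("user-1", "2025-01-01"));
```

The allowlist matches what I actually wanted (a private app), while the quota limits the damage if an account is ever compromised.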

No single tool catches everything. The tools that found nothing (Snyk, Semgrep) will become critical as your dependencies age. The tools that found things (Claude Code, Nuclei) caught different issues from different angles. And Strix tested the running application in ways static analysis never could.

Use all of them. Layer them:

Claude Code (/security-review): Run after building new features. Catches logic-level issues that pattern matchers miss.

The biggest lesson from this experiment is that no automated tool caught the most obvious flaw, an open registration page on a personal app. This is consistent with what security professionals have known for years: business logic vulnerabilities require human judgment.

After running your automated scans, sit down and ask yourself:

If you're not a developer, this is where consulting with an experienced developer or security expert pays for itself. The scans handle the technical vulnerabilities. The human judgment handles the "wait, should this even be possible?" questions.

Vibe coding is powerful. You can build and ship apps incredibly fast. But never ship without running security scans first — especially if you're putting it on the public internet.

Use layered tools: static analysis for dependencies, AI reviews for code logic, vulnerability scanners for configuration, and AI pentesting for dynamic testing. Feed the results back into your AI coding tool and let it fix what it finds. Most of the time, it's one prompt away from being significantly more secure.

But remember: automated tools have limits. They catch technical flaws, not business logic flaws. The thing that makes your app actually dangerous might be something no scanner will ever flag — like letting strangers burn through your API credits.

If your app handles real money, real data, or real users, don't just scan it. Have a human look at it too.
