NVIDIA's Hopper-architecture data center GPU with 80GB HBM3. The industry standard for large-scale AI training and inference, powering most of the world's frontier AI models.

Sized for production serving of 70B–200B class models at full or lightly-quantized precision. Overkill for a homelab; right call when the workload pays for itself in token volume. Notably efficient for its compute class — strong perf-per-watt makes it a natural pick for always-on inference.

Generated from this product’s spec sheet. Editor reviews refine it over time.

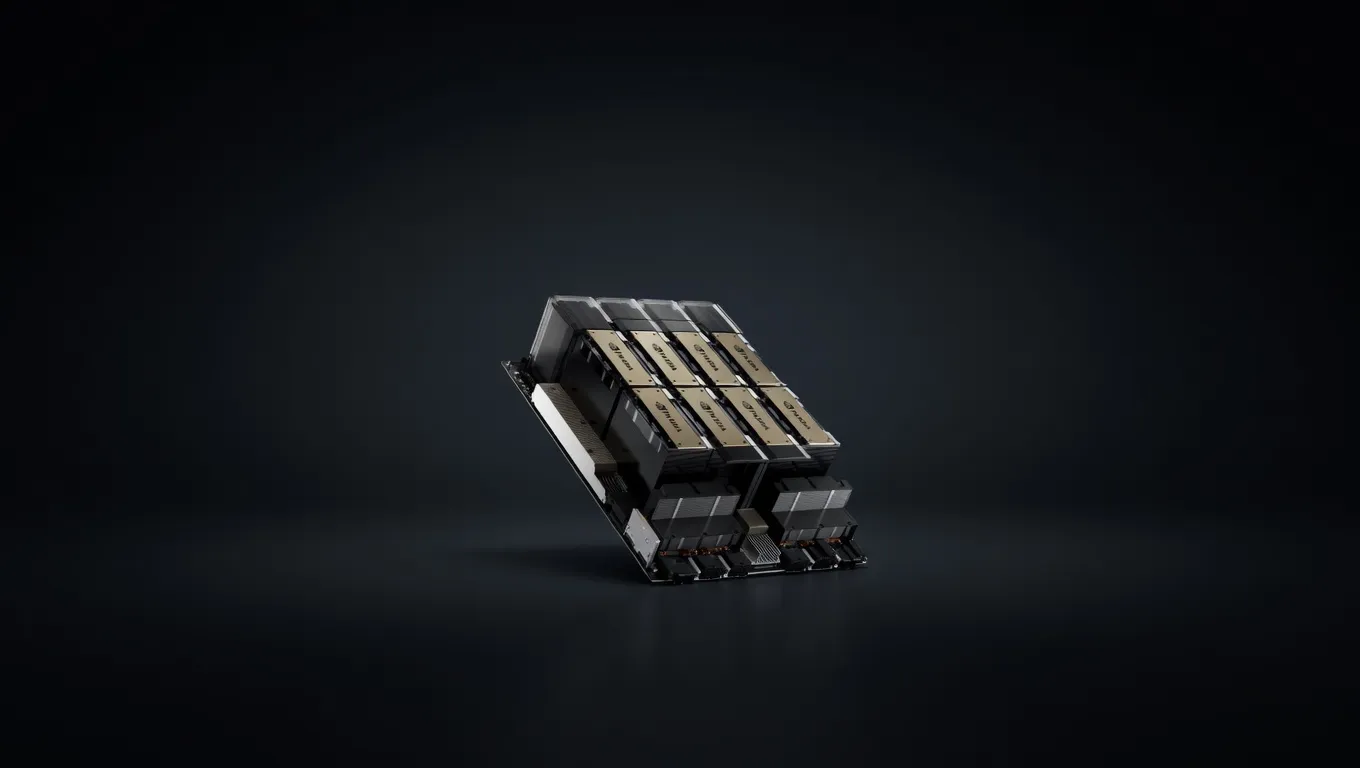

The NVIDIA H100 SXM5 80GB is the definitive silicon for the current era of generative AI. Built on the Hopper (GH100) architecture and manufactured on the TSMC 4N process, this data center GPU is engineered specifically to accelerate the Transformer-based architectures that power modern LLMs. While consumer cards focus on rasterization, the H100 is built for high-throughput tensor calculations and massive memory bandwidth, making it the industry standard for both frontier model training and high-concurrency inference.

Positioned as the flagship of NVIDIA's GPU lineup, the H100 SXM5 is a high-density compute module designed for integration into HGX boards. It competes primarily with the AMD Instinct MI300X and NVIDIA's own Blackwell-series successors. For AI engineers and researchers, the H100 SXM5 80GB offers a stable, well-supported, high-performance environment for AI development, benefiting from more than a decade of CUDA optimization and the introduction of the dedicated Transformer Engine.

The H100 SXM5 is defined by its ability to move data. While its 989.4 TFLOPS of dense FP16 Tensor Core throughput is impressive, the real-world bottleneck for LLM inference is often memory bandwidth. The H100 uses 80GB of HBM3 memory, delivering 3350 GB/s of bandwidth. This allows for significantly higher tokens per second than the PCIe variant of the H100 or consumer-grade cards like the RTX 4090, which tops out at 1008 GB/s.
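The bandwidth argument can be made concrete with a back-of-the-envelope estimate. The sketch below assumes single-stream decoding is purely memory-bound, so each generated token requires reading the full set of active weights once. The function name, the `efficiency` factor, and the 20 GB footprint are illustrative assumptions, not figures from the spec sheet.

```python
def decode_tokens_per_second(bandwidth_gb_s: float,
                             bytes_read_gb: float,
                             efficiency: float = 1.0) -> float:
    """Rough upper bound on single-stream decode speed.

    Assumes every generated token streams `bytes_read_gb` of weights
    from memory once, and nothing else limits throughput.
    """
    if bytes_read_gb <= 0:
        raise ValueError("bytes_read_gb must be positive")
    return efficiency * bandwidth_gb_s / bytes_read_gb


# Bandwidth figures quoted in the text above.
H100_SXM5_GB_S = 3350.0
RTX_4090_GB_S = 1008.0

# Hypothetical model footprint, small enough to fit on either card.
footprint_gb = 20.0

h100_tok_s = decode_tokens_per_second(H100_SXM5_GB_S, footprint_gb)
rtx_tok_s = decode_tokens_per_second(RTX_4090_GB_S, footprint_gb)

print(f"H100 SXM5: {h100_tok_s:.1f} tok/s (upper bound)")
print(f"RTX 4090:  {rtx_tok_s:.1f} tok/s (upper bound)")
print(f"Ratio:     {h100_tok_s / rtx_tok_s:.2f}x")
```

Real deployments land below this bound because of kernel overhead, KV cache reads, and scheduling, which is what the optional `efficiency` factor is meant to approximate.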

Key technical specifications for AI workloads include:

The 700W TDP is a critical consideration for anyone planning a local LLM deployment on the H100 SXM5 80GB. Unlike PCIe cards, the SXM5 form factor requires a specialized server chassis with robust cooling and power delivery. The power draw is partly offset by efficiency: NVIDIA cites up to 30x the performance of the previous-generation A100 in certain Transformer-based inference tasks.
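To put the 700W figure in per-token terms, the following sketch converts power draw and throughput into energy per token. It assumes the GPU draws its full TDP while generating, which is a pessimistic simplification, and the 70 tok/s throughput is a hypothetical value chosen for round numbers. Host, cooling, and networking power are ignored.

```python
def joules_per_token(power_watts: float, tokens_per_second: float) -> float:
    """Energy spent per generated token, assuming constant power draw."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return power_watts / tokens_per_second


def kwh_per_million_tokens(power_watts: float, tokens_per_second: float) -> float:
    """Energy per million tokens in kWh (1 kWh = 3.6e6 joules)."""
    return joules_per_token(power_watts, tokens_per_second) * 1_000_000 / 3_600_000


TDP_WATTS = 700.0        # from the text above
ASSUMED_TOK_S = 70.0     # hypothetical single-stream throughput

energy_j = joules_per_token(TDP_WATTS, ASSUMED_TOK_S)
energy_kwh = kwh_per_million_tokens(TDP_WATTS, ASSUMED_TOK_S)

print(f"{energy_j:.1f} J per token")
print(f"{energy_kwh:.2f} kWh per million tokens")
```

Batched serving raises aggregate tokens per second at roughly the same power, so per-token energy in production is usually far lower than a single-stream figure suggests.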

The 80GB of VRAM on the H100 SXM5 supports a wide range of large language model deployments, from single-card inference to massive multi-GPU clusters. For running 70B-parameter models at FP16 or 405B-parameter models at Q4 (multi-GPU), the H100 is the baseline.
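A quick way to sanity-check these configurations is to compute the weight footprint from parameter count and bits per parameter. The sketch below counts weights only, so KV cache, activations, and quantization metadata would add to these numbers. The helper names are illustrative.

```python
import math


def weight_footprint_gb(params_billion: float, bits_per_param: float) -> float:
    """Weights-only footprint in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_param / 8


def min_gpus_for_weights(footprint_gb: float, vram_gb: float = 80.0) -> int:
    """Fewest cards whose combined VRAM can hold the weights alone."""
    return math.ceil(footprint_gb / vram_gb)


llama_70b_fp16 = weight_footprint_gb(70, 16)   # 140.0 GB
llama_405b_q4 = weight_footprint_gb(405, 4)    # 202.5 GB

for label, gb in [("70B at FP16", llama_70b_fp16),
                  ("405B at Q4", llama_405b_q4)]:
    print(f"{label}: {gb:.1f} GB of weights, "
          f"at least {min_gpus_for_weights(gb)} x 80GB GPUs")
```

Under these assumptions a 70B model at FP16 already exceeds a single 80GB card, so it needs either a second GPU or a lower-precision format such as FP8.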

On a single H100 80GB, you can comfortably run:

For the largest frontier models, the 900 GB/s NVLink interconnect is the H100's "killer feature."

The "sweet spot" for this hardware is often FP8 or AWQ 4-bit quantization. Using NVIDIA's TensorRT-LLM, practitioners can use the Transformer Engine to run at FP8 precision, which typically stays close to FP16 accuracy while delivering up to roughly 2x the tokens per second.

The H100 SXM5 is not a "hobbyist" card in the traditional sense; it is a production-grade tool for those building at scale.

When choosing a GPU for running AI models locally or in a private cloud, the H100 SXM5 is often compared to the NVIDIA A100 80GB and the AMD Instinct MI300X.

The A100 was the previous king of the data center. While it also has 80GB of VRAM, the H100 offers:

The MI300X is a formidable competitor in the NVIDIA vs AMD for AI inference debate.

For practitioners looking for the best AI GPU for agent training and high-scale inference, the NVIDIA H100 SXM5 80GB remains the benchmark by which all other AI hardware is measured. Its combination of HBM3 bandwidth, Transformer Engine acceleration, and mature software stack makes it the premier choice for 2025's most demanding agentic and LLM workloads.

| Model | Developer | Parameters | | Speed | Memory |
|---|---|---|---|---|---|
| Llama 2 70B Chat | Meta | 70B | SS | 62.1 tok/s | 43.4 GB |
| | | 70B | SS | 59.0 tok/s | 45.7 GB |
| Mixtral 8x22B Instruct | Mistral AI | 141B (39B active) | SS | 61.9 tok/s | 43.6 GB |
| GLM-4.5 | Z.ai | 355B (32B active) | SS | 52.0 tok/s | 51.8 GB |
| GLM-4.7 | Z.ai | 358B (32B active) | SS | 51.3 tok/s | 52.6 GB |
| Qwen 3.5 Omni | Alibaba | 397B (17B active) | SS | 59.7 tok/s | 45.2 GB |
| Qwen3.5-397B-A17B | Alibaba | 397B (17B active) | SS | 58.6 tok/s | 46.0 GB |
| DeepSeek-V3 | DeepSeek | 671B (37B active) | SS | 45.1 tok/s | 59.8 GB |
| DeepSeek-R1 | DeepSeek | 671B (37B active) | SS | 45.1 tok/s | 59.8 GB |
| DeepSeek-V3.1 | DeepSeek | 671B (37B active) | SS | 45.1 tok/s | 59.8 GB |
| DeepSeek-V3.2 | DeepSeek | 685B (37B active) | SS | 45.1 tok/s | 59.8 GB |
| Kimi K2 Instruct | Moonshot AI | 1000B (32B active) | SS | 52.0 tok/s | 51.8 GB |
| Qwen3-235B-A22B | Alibaba | 235B (22B active) | SS | 74.2 tok/s | 36.3 GB |
| Gemma 3 27B IT | Google | 27B | SS | 61.6 tok/s | 43.8 GB |
| Qwen3-32B | Alibaba | 32.8B | SS | 50.0 tok/s | 53.9 GB |
| Mistral Small 3 24B | Mistral AI | 24B | SS | 69.2 tok/s | 39.0 GB |
| LLaMA 65B | Meta | 65B | SS | 68.7 tok/s | 39.3 GB |
| Qwen3.5-122B-A10B | Alibaba | 122B (10B active) | SS | 98.9 tok/s | 27.3 GB |
| minimax-m2.5 | MiniMax | 230B (10B active) | SS | 118.8 tok/s | 22.7 GB |
| Mixtral 8x7B Instruct | Mistral AI | 46.7B (12.9B active) | SS | 237.3 tok/s | 11.4 GB |
| Mistral Large 3 675B | Mistral AI | 675B (41B active) | SS | 40.7 tok/s | 66.3 GB |
| Falcon 40B Instruct | Technology Innovation Institute | 40B | SS | 110.7 tok/s | 24.4 GB |
| Qwen3.5-9B | Alibaba | 9B | SS | 109.6 tok/s | 24.6 GB |
| Gemma 4 26B-A4B IT | Google | 26B (4B active) | SS | 244.9 tok/s | 11.0 GB |
| GLM-4.6 | Z.ai | 355B (32B active) | SS | 38.4 tok/s | 70.3 GB |
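The listed speeds appear consistent with a bandwidth-bound reading: multiplying each row's speed by its memory figure gives a nearly constant value. The sketch below checks this on four rows copied from the table. It is an observation about the numbers as listed, not a documented description of how they were produced, and the helper name is illustrative.

```python
H100_SXM5_BANDWIDTH_GB_S = 3350.0  # peak bandwidth quoted earlier on this page

# (speed in tok/s, memory in GB) copied from four rows of the table above.
rows = {
    "Llama 2 70B Chat": (62.1, 43.4),
    "DeepSeek-V3": (45.1, 59.8),
    "Mixtral 8x7B Instruct": (237.3, 11.4),
    "GLM-4.6": (38.4, 70.3),
}


def implied_bandwidth_gb_s(tok_s: float, memory_gb: float) -> float:
    """Bandwidth needed to stream `memory_gb` once per generated token."""
    return tok_s * memory_gb


fractions = {}
for name, (tok_s, memory_gb) in rows.items():
    implied = implied_bandwidth_gb_s(tok_s, memory_gb)
    fractions[name] = implied / H100_SXM5_BANDWIDTH_GB_S
    print(f"{name}: {implied:.0f} GB/s implied, "
          f"{fractions[name]:.0%} of peak bandwidth")
```

For these rows the implied figure sits at roughly 2700 GB/s, or about 80% of the 3350 GB/s peak, which would explain why models with smaller memory figures show proportionally higher tokens per second.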