
Budget variant of the RTX 5060 Ti with 8GB GDDR7 on a 128-bit bus. Same 4,608 CUDA cores as the 16GB model at a lower $379 price point, though limited VRAM constrains higher resolutions.

8 GB will run a 7B Q4 quant and most embedding models, but the KV cache budget is tight. Better as a stepping stone than a long-term home for AI work.

Generated from this product’s spec sheet. Editor reviews refine it over time.

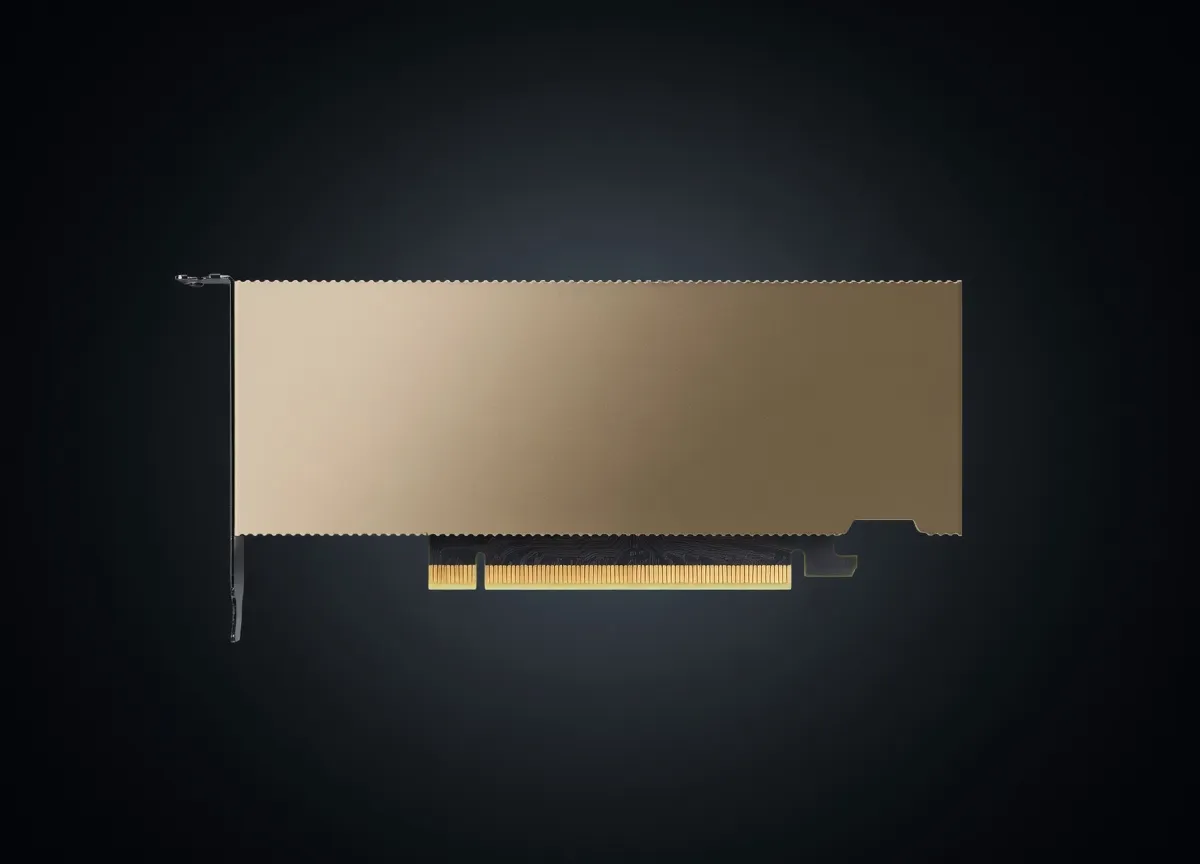

The NVIDIA GeForce RTX 5060 Ti 8GB represents the entry point for NVIDIA’s Blackwell architecture, designed for developers and hobbyists who prioritize architecture-level efficiency and modern feature sets over raw VRAM capacity. Priced at an MSRP of $379, it sits firmly in the budget-friendly category of the 50-series lineup. While it shares the same 4,608 CUDA core count as its 16GB sibling, this variant is specifically aimed at users running smaller, highly quantized models or those integrating AI capabilities into standard software development workflows.

Among NVIDIA GPUs for running AI models locally, the RTX 5060 Ti 8GB competes primarily with the previous-generation RTX 4060 Ti and AMD's mid-range Radeon RX series. However, the shift to the Blackwell GB206 silicon and GDDR7 memory provides a distinct advantage in memory bandwidth and architectural throughput. For practitioners building agentic workflows or local inference pipelines, this card serves as a low-power, high-efficiency node for specialized tasks rather than a general-purpose heavyweight for large-scale LLMs.

When evaluating the NVIDIA GeForce RTX 5060 Ti 8GB for AI, the primary bottleneck is the 8GB VRAM capacity on a 128-bit bus. However, NVIDIA has mitigated some of the traditional mid-range bandwidth constraints by moving to GDDR7 memory, which pushes the memory bandwidth to 448 GB/s. For AI inference, memory bandwidth is often the primary determinant of tokens per second (t/s), as the weights must be moved from VRAM to the compute cores for every token generated.
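The bandwidth argument above can be turned into a rough ceiling on decode speed. This is a minimal sketch, assuming generation is purely bandwidth-bound and that the full model footprint is read once per token. The function name and the sample footprints are illustrative, not part of any library.

```python
# Rough upper bound on decode speed for a memory-bandwidth-bound model.
# Assumption: every generated token requires one full read of the resident weights.

BANDWIDTH_GB_S = 448.0  # memory bandwidth quoted for this card


def max_tokens_per_second(weights_gb: float, bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Theoretical ceiling: bandwidth divided by the data read per token."""
    if weights_gb <= 0:
        raise ValueError("weights_gb must be positive")
    return bandwidth_gb_s / weights_gb


# Illustrative footprints in GB; real throughput lands below these ceilings.
for size_gb in (3.7, 4.8, 6.4):
    print(f"{size_gb:.1f} GB -> at most {max_tokens_per_second(size_gb):.0f} tok/s")
```

Under these assumptions a 4.8 GB model tops out near 93 tok/s. Measured numbers will be lower because compute, KV cache reads, and kernel overhead also take time.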

For AI inference, the RTX 5060 Ti 8GB delivers high throughput on small models. The Blackwell architecture introduces 5th Gen Tensor Cores with support for low-precision formats such as FP8 and FP4, which are increasingly relevant for local deployment. With a TDP of only 180W, this card is efficient enough to be a viable candidate for edge deployment or compact workstations where power and thermal constraints are a priority. Compared to the AMD RX 7700 XT, the 5060 Ti 8GB generally leads in software compatibility due to the maturity of the CUDA ecosystem and the widespread support for TensorRT.

The "8GB GPU for AI" category requires careful management of quantization to be effective. For local LLMs on the RTX 5060 Ti 8GB, users should target 7B to 8B parameter models. Dropping a 7B model to Q2 or Q3 quantization leaves the most room for KV cache and system overhead.

The sweet spot for this hardware is 4-bit quantization (Q4_0 or Q4_K_M) for 7B/8B models. While Q2 or Q3 allows for larger context windows, the perplexity loss is often too high for professional use. For multimodal models like Moondream2 or Llava-v1.5-7B, the 5060 Ti 8GB handles inference capably, provided the vision encoder and LLM weights are quantized appropriately.
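The trade-off between quantization level and context length can be sketched as a simple VRAM budget. The example below is an estimate under stated assumptions, not a measurement: it uses the Llama 2 7B shape (32 layers, 32 KV heads, head dimension 128) with an fp16 KV cache, 4.5 bits per weight for Q4_0, and a hypothetical 1 GiB reserved for runtime and display overhead. All function names are illustrative.

```python
GIB = 2**30


def weights_gib(params: float, bits_per_weight: float) -> float:
    """Approximate size of the quantized weights in GiB."""
    return params * bits_per_weight / 8 / GIB


def kv_bytes_per_token(layers: int = 32, kv_heads: int = 32,
                       head_dim: int = 128, bytes_per_value: int = 2) -> int:
    """Bytes of KV cache per token: one K and one V vector per layer."""
    return 2 * layers * kv_heads * head_dim * bytes_per_value


def kv_cache_gib(context_tokens: int, **kv_shape) -> float:
    """KV cache size in GiB for a given context length."""
    return context_tokens * kv_bytes_per_token(**kv_shape) / GIB


def max_context_tokens(vram_gib: float, model_gib: float,
                       overhead_gib: float = 1.0, **kv_shape) -> int:
    """Largest context whose KV cache fits next to the weights (assumed overhead)."""
    free_bytes = (vram_gib - overhead_gib - model_gib) * GIB
    return max(0, int(free_bytes // kv_bytes_per_token(**kv_shape)))


q4_weights = weights_gib(7e9, 4.5)  # 7B parameters at Q4_0
print(f"Q4_0 weights: {q4_weights:.2f} GiB")
print(f"KV cache at 4096 tokens: {kv_cache_gib(4096):.2f} GiB")
print(f"Max context in 8 GiB: {max_context_tokens(8.0, q4_weights)} tokens")
```

With these assumptions the weights take about 3.7 GiB and a 4096-token cache takes 2 GiB, leaving room for roughly 6,800 tokens of context. Models that use grouped-query attention have fewer KV heads and a proportionally smaller cache.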

The RTX 5060 Ti 8GB is not a "one size fits all" solution for AI development, but it excels in specific niches:

For those looking for budget hardware for local AI agents in 2025, this card provides entry into the NVIDIA ecosystem. It allows for the exploration of RAG (Retrieval-Augmented Generation) using small local vector databases and 8B parameter models without the high cost of a 90-series card.

Developers building applications that will eventually be deployed on edge devices or consumer-grade hardware need a representative testing environment. The 5060 Ti 8GB is an ideal "baseline" target. If an agentic workflow runs smoothly on this card, it will likely run on the majority of the modern installed base of discrete GPUs.

Teams running specialized inference servers for tasks like sentiment analysis, NER (Named Entity Recognition), or small-scale embedding generation will find the 180W TDP attractive. It allows for high-density rack configurations where power draw and heat dissipation are critical factors.

It is important to note that this is not the best AI GPU for agent training. With only 8GB of VRAM, fine-tuning even a 7B model using LoRA or QLoRA is extremely tight and often requires offloading to system RAM, which kills performance. This card is strictly an inference-first tool.
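A quick calculation shows why fine-tuning is so tight. The sketch below only compares the size of the frozen base weights against 8 GiB. It does not model adapters, optimizer state, or activations, all of which must fit in whatever headroom remains. The function names are illustrative.

```python
GIB = 2**30


def base_weights_gib(params: float, bits_per_weight: float) -> float:
    """Size of the frozen base model in GiB at a given precision."""
    return params * bits_per_weight / 8 / GIB


def headroom_gib(vram_gib: float, params: float, bits_per_weight: float) -> float:
    """VRAM left after loading the base weights; negative means it does not fit."""
    return vram_gib - base_weights_gib(params, bits_per_weight)


for label, bits in (("fp16 base (LoRA)", 16), ("4-bit base (QLoRA)", 4)):
    left = headroom_gib(8.0, 7e9, bits)
    print(f"{label}: {base_weights_gib(7e9, bits):.1f} GiB weights, {left:+.1f} GiB headroom")
```

An fp16 7B base is about 13 GiB and cannot fit at all, while a 4-bit base leaves under 5 GiB for everything else in the training step.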

Choosing the right NVIDIA GPU for AI development requires weighing VRAM against compute speed.

The NVIDIA GeForce RTX 5060 Ti 8GB is a specialized tool. It is a high-speed, low-capacity inference engine. For practitioners who understand the constraints of 8GB VRAM for large language models and are working within the 7B-8B parameter space, it offers a modern, efficient, and cost-effective entry point into the Blackwell ecosystem.

| Model | Developer | Parameters | Grade | Speed | Memory |
| --- | --- | --- | --- | --- | --- |
| Qwen3-30B-A3B | Alibaba | 30B (3B active) | SS | 67.0 tok/s | 5.4 GB |
|  |  | 8B | SS | 63.7 tok/s | 5.7 GB |
|  |  | 9B | SS | 60.0 tok/s | 6.0 GB |
| PersonaPlex 7B | NVIDIA | 7B | SS | 75.3 tok/s | 4.8 GB |
| Llama 2 7B Chat | Meta | 7B | SS | 75.3 tok/s | 4.8 GB |
| Mistral 7B Instruct | Mistral AI | 7B | SS | 56.4 tok/s | 6.4 GB |
| Gemma 4 E2B IT | Google | 2B | AA | 97.3 tok/s | 3.7 GB |
| Gemma 4 E4B IT | Google | 4B | AA | 52.1 tok/s | 6.9 GB |
| Gemma 3 4B IT | Google | 4B | AA | 52.1 tok/s | 6.9 GB |
| Nemotron 3 Nano Omni | NVIDIA | 30B (3B active) | BB | 42.3 tok/s | 8.5 GB |
| Qwen3.6 35B-A3B | Alibaba | 35B (3B active) | BB | 42.3 tok/s | 8.5 GB |
| Qwen3.5-35B-A3B | Alibaba | 35B (3B active) | BB | 42.3 tok/s | 8.5 GB |
| Llama 2 13B Chat | Meta | 13B | BB | 42.6 tok/s | 8.5 GB |
|  |  | 8B | FF | 27.1 tok/s | 13.3 GB |
| Qwen3.5-9B | Alibaba | 9B | FF | 14.7 tok/s | 24.6 GB |
| Mistral Small 3 24B | Mistral AI | 24B | FF | 9.2 tok/s | 39.0 GB |
| Gemma 4 26B-A4B IT | Google | 26B (4B active) | FF | 32.7 tok/s | 11.0 GB |
| Carnice-V2-27b | kai-os | 27B | FF | 5.0 tok/s | 72.8 GB |
| Qwen3.6-27B | Alibaba | 27B | FF | 5.0 tok/s | 72.8 GB |
| Gemma 3 27B IT | Google | 27B | FF | 8.2 tok/s | 43.8 GB |
| Qwen3.5-27B | Alibaba | 27B | FF | 5.0 tok/s | 72.8 GB |
| Gemma 4 31B IT | Google | 31B | FF | 4.4 tok/s | 82.0 GB |
| Qwen3-32B | Alibaba | 32.8B | FF | 6.7 tok/s | 53.9 GB |
| Falcon 40B Instruct | Technology Innovation Institute | 40B | FF | 14.8 tok/s | 24.4 GB |
| Mixtral 8x7B Instruct | Mistral AI | 46.7B (12.9B active) | FF | 31.7 tok/s | 11.4 GB |