
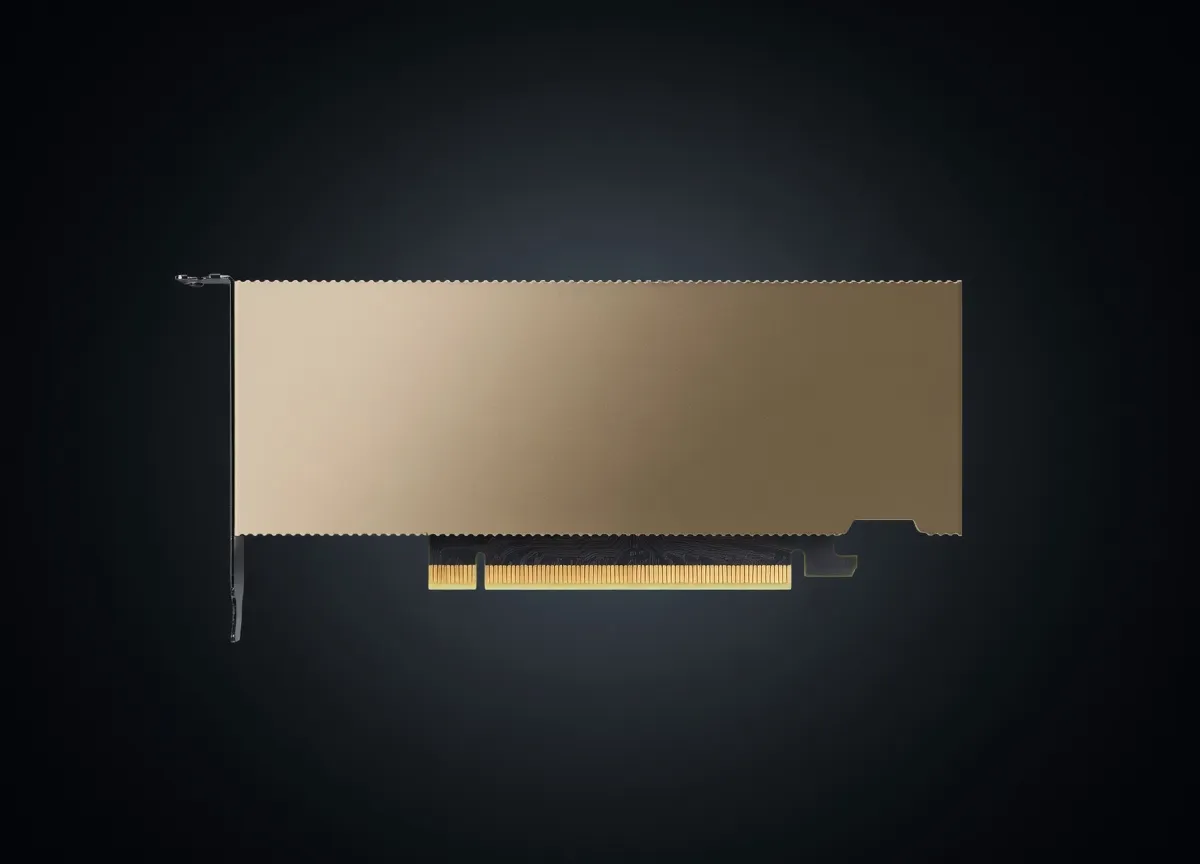

Mid-range Ada Lovelace GPU with 5,888 CUDA cores and 12GB GDDR6X. Strong 1440p performer that represented excellent price/performance in the RTX 40 series lineup.

12 GB is the modern minimum for usable local LLMs. Comfortable with 7B at Q8 or 13B at Q4 (7B at FP16 needs about 14 GB for weights alone, so it does not fit); anything bigger pushes context windows down to single-digit thousands of tokens. Pricing puts it well above average on raw compute-per-dollar, which matters more than peak FLOPS for steady inference loads.

Generated from this product’s spec sheet. Editor reviews refine it over time.

The NVIDIA GeForce RTX 4070, based on the Ada Lovelace architecture, remains a significant entry point for developers and engineers building local AI workflows. Although officially discontinued in favor of the "Super" refresh, the base 4070 is widely available on the secondary market and remains a staple for those seeking a balance of power efficiency and tensor throughput. For practitioners focused on AI inference performance, this card represents the "efficiency sweet spot," delivering 466 INT8 TOPS and 58.4 TFLOPS of FP16 compute within a modest 200W TDP.

Positioned as a mid-range consumer GPU, the RTX 4070 competes directly with the AMD Radeon RX 7800 XT and the older RTX 3080 10GB. However, for AI development, the 4070's 4th Generation Tensor Cores and access to the CUDA ecosystem make it a more viable choice for local AI agents and computer vision tasks. It is specifically engineered for users who need a reliable 12GB GPU for AI that doesn't require a high-wattage power supply or specialized thermal management.

The technical profile of the RTX 4070 is defined by the AD104 die, utilizing TSMC’s 4N process. For AI workloads, the most critical constraint is the 12GB of GDDR6X VRAM on a 192-bit memory bus. While the memory bandwidth of 504 GB/s is lower than that of the flagship 4090, it is sufficient for high-speed token generation on smaller LLMs and provides enough headroom for complex image generation pipelines like Stable Diffusion XL (SDXL).

With 5,888 CUDA cores and 184 4th Gen Tensor Cores, the RTX 4070 is optimized for FP8 and INT8 precision, which are increasingly the standard for quantized inference.

When considering NVIDIA vs AMD for AI inference, the RTX 4070 wins on software compatibility. While AMD's ROCm is improving, the 4070 provides out-of-the-box support for the entire stack of AI tools, including PyTorch, TensorFlow, vLLM, and TensorRT-LLM.

For large language models, the primary constraint of the RTX 4070 is its 12GB VRAM capacity. This dictates the maximum parameter count and the quantization level you can deploy.
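To make the capacity limit concrete, here is a minimal sketch of the weight-storage arithmetic. It assumes nominal bit widths per format (real quantized files carry extra scale metadata) and counts weights only, ignoring KV cache and runtime overhead. The names `BITS_PER_WEIGHT` and `weight_gb` are illustrative, not from any library.

```python
# Nominal bits per weight for common formats (an assumption; real
# quantized files add a small amount of scale/zero-point metadata).
BITS_PER_WEIGHT = {"FP16": 16, "Q8": 8, "Q4": 4}

VRAM_GB = 12  # RTX 4070 capacity, as stated in the text


def weight_gb(params_billion: float, fmt: str) -> float:
    """Return weight storage in GB (1 GB = 1e9 bytes), weights only."""
    return params_billion * 1e9 * BITS_PER_WEIGHT[fmt] / 8 / 1e9


for params in (7, 13):
    for fmt in BITS_PER_WEIGHT:
        gb = weight_gb(params, fmt)
        verdict = "fits" if gb < VRAM_GB else "does not fit"
        print(f"{params}B @ {fmt}: {gb:.1f} GB of weights -> {verdict}")
```

Treat the result as a lower bound: a model whose weights land close to 12 GB will leave little or no room for context.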

The RTX 4070 is at its best running 7B-parameter models at Q4. At this scale, the entire model and its KV cache fit comfortably within VRAM, allowing for lightning-fast inference.
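The KV cache share of that budget can be estimated with a short calculation. The sketch below assumes a Llama 2 7B shape (32 layers, 32 KV heads, head dimension 128) and an FP16 cache; these figures are not from this page, and the function name `kv_cache_gib` is illustrative.

```python
def kv_cache_gib(n_layers, n_kv_heads, head_dim, context_len, bytes_per_value=2):
    """KV cache size in GiB for a single sequence.

    The leading factor of 2 accounts for storing both keys and values.
    bytes_per_value=2 assumes an FP16 cache.
    """
    total_bytes = 2 * n_layers * n_kv_heads * head_dim * context_len * bytes_per_value
    return total_bytes / 2**30


# Assumed Llama 2 7B shape: 32 layers, 32 KV heads, head dimension 128.
for ctx in (2048, 4096):
    print(f"context {ctx}: {kv_cache_gib(32, 32, 128, ctx):.2f} GiB")
# context 2048: 1.00 GiB
# context 4096: 2.00 GiB
```

Under these assumptions the cache grows linearly with context length, so doubling the context doubles the memory reserved on top of the weights.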

The RTX 4070 is tagged as Best for Computer Vision for a reason. It excels at:

For developers building local AI agents in 2025, the RTX 4070 serves as an excellent "sandbox" GPU. It provides enough VRAM to run an orchestrator model (like Llama 3 8B) while leaving a small amount of overhead for vector databases or tool-calling frameworks. It is the best AI GPU for agent training if your "training" is limited to Low-Rank Adaptation (LoRA) or prompt engineering.
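A rough parameter count shows why LoRA is feasible where full fine-tuning is not. This sketch assumes a single square projection matrix with a hidden size of 4096 and a rank of 8; both values are hypothetical and chosen only for illustration.

```python
def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Parameters in the two low-rank factors: A (rank x d_in) and B (d_out x rank)."""
    return rank * (d_in + d_out)


hidden = 4096  # hypothetical hidden size for one projection matrix
rank = 8       # hypothetical LoRA rank

full = hidden * hidden
lora = lora_trainable_params(hidden, hidden, rank)

print(f"full matrix: {full:,} params")
print(f"LoRA rank {rank}: {lora:,} params ({100 * lora / full:.2f}% of full)")
# full matrix: 16,777,216 params
# LoRA rank 8: 65,536 params (0.39% of full)
```

Only the small factors need gradients and optimizer state, which is what keeps the training footprint within reach of a 12GB card; the frozen base weights still have to fit in memory.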

If you are moving beyond cloud-based APIs and want to run a private chatbot or an automated content pipeline, the 4070 is a budget-friendly entry point. It avoids the "VRAM tax" of the higher-end 3090/4090 while providing modern architectural benefits like DLSS 3 (useful for those who also use the card for rendering or gaming).

Because of its 200W TDP and standard PCIe 4.0 x16 interface, the 4070 is often used in workstation prototypes that will eventually be deployed to more robust edge hardware. It is a reliable NVIDIA GPU for AI development where you need to ensure your code is CUDA-compatible before scaling to H100s or A100s in the cloud.

The RTX 3060 is often cited as the budget king due to its 12GB VRAM. However, the 4070 offers more than double the FP32 TFLOPS and significantly faster GDDR6X memory. If your workload involves frequent inference or small-scale fine-tuning, the 4070's 4th Gen Tensor Cores provide a much higher ceiling for AI tasks than the aging Ampere architecture.

The 4060 Ti 16GB offers more VRAM, which allows for larger models (like 14B or 20B parameters) or higher-precision quantization levels. However, the 4060 Ti is severely bottlenecked by a 128-bit memory bus (288 GB/s). For models that do fit in 12GB, the RTX 4070's 504 GB/s bandwidth makes it significantly faster in terms of tokens per second. Use the 4060 Ti if you absolutely need the capacity; choose the 4070 if you need raw inference speed and compute throughput.
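The bandwidth argument can be sketched as a simple ceiling. The estimate assumes single-stream decoding that is purely memory-bound, with every generated token requiring one full read of the weights; the 5 GB model footprint is a hypothetical value, and measured throughput will be lower than this bound.

```python
def decode_ceiling_tok_s(bandwidth_gb_s: float, model_gb: float) -> float:
    """Upper bound on tokens/s if each token reads all weights once."""
    return bandwidth_gb_s / model_gb


# Memory bandwidth figures quoted in the text above.
CARDS = {"RTX 4070": 504.0, "RTX 4060 Ti 16GB": 288.0}
MODEL_GB = 5.0  # hypothetical footprint of a Q4 model that fits on both cards

for name, bandwidth in CARDS.items():
    ceiling = decode_ceiling_tok_s(bandwidth, MODEL_GB)
    print(f"{name}: at most {ceiling:.1f} tok/s")

ratio = CARDS["RTX 4070"] / CARDS["RTX 4060 Ti 16GB"]
print(f"bandwidth ratio: {ratio:.2f}x")
# RTX 4070: at most 100.8 tok/s
# RTX 4060 Ti 16GB: at most 57.6 tok/s
# bandwidth ratio: 1.75x
```

The ratio is independent of the model size chosen, so under these assumptions the 4070 holds roughly a 1.75x ceiling advantage for any model that fits on both cards.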

The 4070 Super is the direct successor, offering ~20% more CUDA cores for a similar launch price. However, since the original 4070 is discontinued, it can often be found at a significant discount. If the price delta is more than $100, the base 4070 remains a compelling choice for local deployment on a strict budget, as the VRAM capacity (the primary bottleneck) remains the same at 12GB across both cards.

| Model | Vendor | Parameters | Grade | Speed | Memory |
| --- | --- | --- | --- | --- | --- |
| Qwen3.6 35B-A3B | Alibaba | 35B (3B active) | SS | 47.6 tok/s | 8.5 GB |
| Qwen3.5-35B-A3B | Alibaba | 35B (3B active) | SS | 47.6 tok/s | 8.5 GB |
| Qwen3-30B-A3B | Alibaba | 30B (3B active) | SS | 75.3 tok/s | 5.4 GB |
| Llama 2 13B Chat | Meta | 13B | SS | 47.9 tok/s | 8.5 GB |
| | | 9B | SS | 67.5 tok/s | 6.0 GB |
| | | 8B | SS | 71.6 tok/s | 5.7 GB |
| Gemma 4 E4B IT | Google | 4B | SS | 58.7 tok/s | 6.9 GB |
| Gemma 3 4B IT | Google | 4B | SS | 58.7 tok/s | 6.9 GB |
| Mistral 7B Instruct | Mistral AI | 7B | SS | 63.4 tok/s | 6.4 GB |
| Llama 2 7B Chat | Meta | 7B | SS | 84.7 tok/s | 4.8 GB |
| Gemma 4 E2B IT | Google | 2B | AA | 109.4 tok/s | 3.7 GB |
| Mixtral 8x7B Instruct | Mistral AI | 46.7B (12.9B active) | AA | 35.7 tok/s | 11.4 GB |
| Gemma 4 26B-A4B IT | Google | 26B (4B active) | AA | 36.8 tok/s | 11.0 GB |
| | | 8B | FF | 30.4 tok/s | 13.3 GB |
| Qwen3.5-9B | Alibaba | 9B | FF | 16.5 tok/s | 24.6 GB |
| Mistral Small 3 24B | Mistral AI | 24B | FF | 10.4 tok/s | 39.0 GB |
| Qwen3.6-27B | Alibaba | 27B | FF | 5.6 tok/s | 72.8 GB |
| Gemma 3 27B IT | Google | 27B | FF | 9.3 tok/s | 43.8 GB |
| Qwen3.5-27B | Alibaba | 27B | FF | 5.6 tok/s | 72.8 GB |
| Gemma 4 31B IT | Google | 31B | FF | 4.9 tok/s | 82.0 GB |
| Qwen3-32B | Alibaba | 32.8B | FF | 7.5 tok/s | 53.9 GB |
| Falcon 40B Instruct | Technology Innovation Institute | 40B | FF | 16.7 tok/s | 24.4 GB |
| LLaMA 65B | Meta | 65B | FF | 10.3 tok/s | 39.3 GB |
| Llama 2 70B Chat | Meta | 70B | FF | 9.3 tok/s | 43.4 GB |
| | | 70B | FF | 8.9 tok/s | 45.7 GB |