
Ultra-efficient Ada Lovelace inference GPU with 24GB GDDR6 in a low-profile 72W form factor. The most power-efficient NVIDIA data center GPU, ideal for dense inference deployments and video processing.

The 24 GB tier is where most local-LLM tooling assumes you live. Strong fit for code agents, RAG, and 30B-class reasoning models without exotic quants. Notably efficient for its compute class — strong perf-per-watt makes it a natural pick for always-on inference.

Generated from this product’s spec sheet. Editor reviews refine it over time.

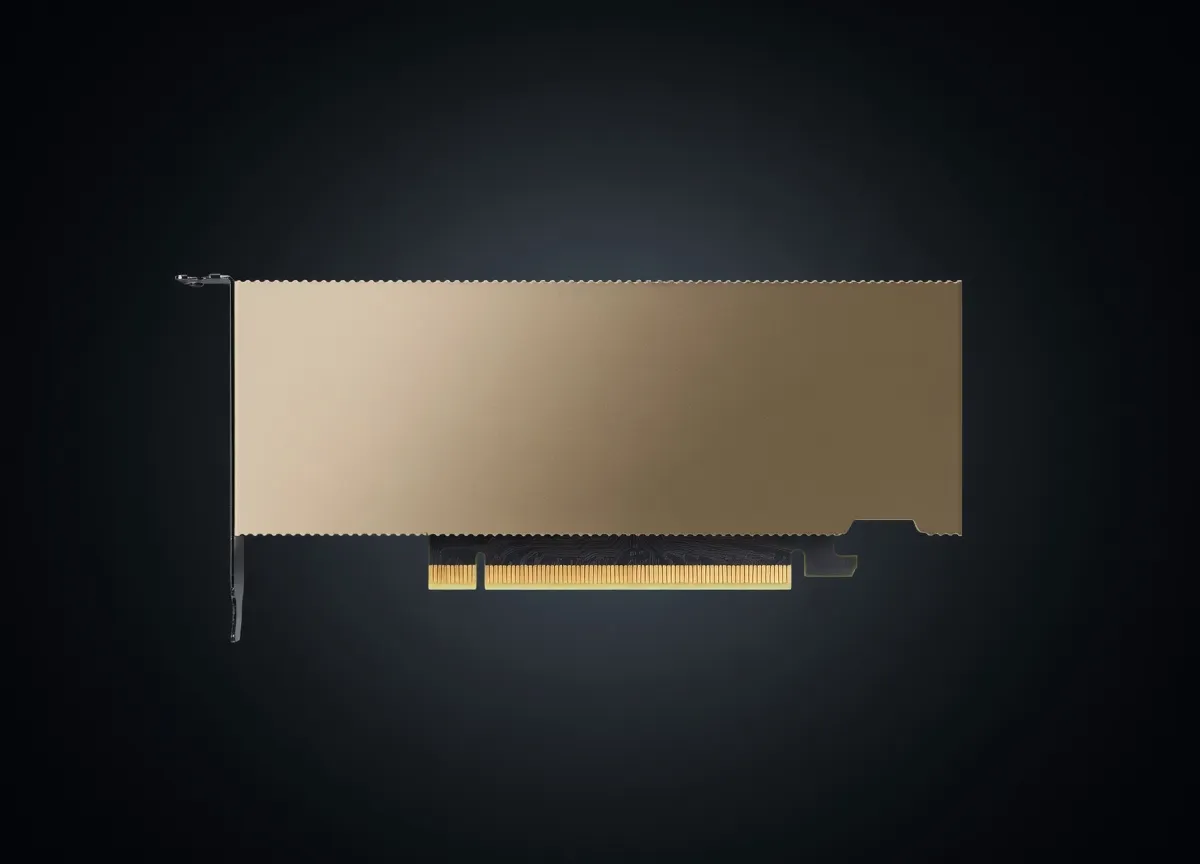

The NVIDIA L4 Tensor Core GPU is the efficiency leader of the Ada Lovelace data center lineup. Designed specifically to replace the aging T4, the L4 is a low-profile, single-slot accelerator that packs 24GB of GDDR6 VRAM into a 72W TDP. While high-end H100s and B200s dominate training headlines, the L4 is the workhorse for dense inference deployments, video processing, and local AI agent orchestration where power constraints and thermal management are primary concerns.

For engineers building agentic workflows or deploying local LLMs, the L4 represents a specialized middle ground between consumer RTX cards and high-end enterprise silicon. It lacks the active cooling of a 4090 but offers enterprise-grade reliability, ECC memory, and vGPU support. It is the definitive choice for high-density server environments and edge deployments where maximizing "performance per watt" is more critical than raw peak TFLOPS.

The NVIDIA L4 Tensor Core GPU is built on the AD104 die (TSMC 4N process) and is optimized for high-throughput inference rather than heavy training. Its standout specification is 24GB of GDDR6 memory. For 2025 AI workloads, 24GB is the "Goldilocks" zone for local LLMs: practitioners can run high-quality 7B to 14B parameter models entirely in VRAM, avoiding the slowdown that comes with offloading to system RAM.
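As a rough illustration of why 24GB comfortably holds 7B to 14B models, the sketch below estimates weight size from parameter count and precision. The 20% allowance for KV cache and runtime buffers is an assumption chosen for illustration, not a measured figure, and the function names are hypothetical.

```python
def weights_gib(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GiB."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


def fits_in_vram(
    params_billion: float,
    bits_per_weight: float,
    vram_gib: float = 24.0,           # L4 capacity, treated here as 24 GiB
    overhead_fraction: float = 0.2,   # assumed allowance, not a measured value
) -> bool:
    """True if weights plus the assumed overhead allowance fit in VRAM."""
    needed = weights_gib(params_billion, bits_per_weight) * (1 + overhead_fraction)
    return needed <= vram_gib


if __name__ == "__main__":
    for params, bits in [(7, 16), (14, 8), (14, 16)]:
        size = weights_gib(params, bits)
        verdict = "fits" if fits_in_vram(params, bits) else "does not fit"
        print(f"{params}B at {bits}-bit: {size:.1f} GiB of weights, {verdict}")
```

Under these assumptions a 14B model fits at 8-bit precision but not at 16-bit, which is one reason FP8 and other reduced-precision formats matter on this card.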

The L4 introduces support for the FP8 (8-bit floating point) data format, which is essential for modern inference engines like vLLM, TensorRT-LLM, and LMDeploy.

While the 300 GB/s memory bandwidth is lower than that of the A10 or the consumer-grade RTX 4090, the L4 compensates with 4th Generation Tensor Cores that handle sparsity and low-precision arithmetic with extreme efficiency. In a production environment, this translates to stable, low-latency performance for real-time applications.
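One way to reason about what 300 GB/s means in practice: at batch size 1, generating each token requires streaming roughly all of the model weights from VRAM once, so bandwidth divided by weight size gives an approximate upper bound on decode speed. This is a simplified back-of-the-envelope model (it ignores KV cache reads, compute time, and batching), and the function name is hypothetical.

```python
def decode_ceiling_tok_s(
    bandwidth_gb_s: float,
    params_billion: float,
    bits_per_weight: float,
) -> float:
    """Rough upper bound on single-stream decode speed for a dense model.

    Assumes every generated token reads all weights from VRAM exactly once.
    """
    weight_gb = params_billion * bits_per_weight / 8  # GB, since params are in billions
    return bandwidth_gb_s / weight_gb


if __name__ == "__main__":
    # 300 GB/s is the L4 figure from the text; 1000 GB/s approximates the
    # "1 TB/s" quoted for the RTX 4090 later on this page.
    for name, bandwidth in [("L4", 300), ("RTX 4090", 1000)]:
        ceiling = decode_ceiling_tok_s(bandwidth, params_billion=8, bits_per_weight=8)
        print(f"{name}: at most ~{ceiling:.1f} tok/s for an 8B model at 8-bit")
```

Real throughput depends on the inference engine, quantization, and batch size, so treat the result as a ceiling for intuition rather than a prediction.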

The 72W TDP is the L4's primary competitive advantage. It requires no external power connectors, drawing all its power directly from the PCIe slot. This makes it a strong fit for existing server chassis and workstations that cannot support the 450W+ requirements of high-end GPUs. Its low-profile, single-slot design allows for maximum density, often fitting 8 or more units in a 2U server.
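The density argument can be made concrete with simple arithmetic. The sketch below uses the figures quoted above (72W, 24GB, 8 cards) plus the 75W power budget of a PCIe x16 slot; the constant and function names are hypothetical.

```python
# Figures taken from the text above; 75 W is the power budget of a PCIe x16 slot.
L4_TDP_W = 72
L4_VRAM_GB = 24
PCIE_SLOT_LIMIT_W = 75
HIGH_END_GPU_W = 450


def chassis_totals(num_cards: int) -> tuple[int, int]:
    """Return (total board power in watts, total VRAM in GB) for num_cards L4s."""
    return num_cards * L4_TDP_W, num_cards * L4_VRAM_GB


if __name__ == "__main__":
    watts, vram = chassis_totals(8)
    print(f"8 x L4: {watts} W board power, {vram} GB VRAM in total")
    print(f"Runs on slot power alone: {L4_TDP_W <= PCIE_SLOT_LIMIT_W}")
    print(f"Relative to one {HIGH_END_GPU_W} W card: {watts / HIGH_END_GPU_W:.2f}x the power")
```

Eight cards draw 576W of board power in total while exposing 192GB of VRAM, though that memory is split across eight devices rather than pooled.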

For large language models, the L4's 24GB of VRAM sets the operational ceiling: model weights, KV cache, and runtime overhead must all fit within it.
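Beyond the weights, the KV cache grows with context length and counts against the same 24GB. Below is a minimal sketch of the usual per-token KV cache calculation, using the published Llama 3.1 8B configuration (32 layers, 8 key-value heads, head dimension 128) and assuming 16-bit cache entries. The function name is hypothetical, and inference engines may allocate cache differently (for example in pages or at lower precision).

```python
def kv_cache_bytes(
    num_tokens: int,
    num_layers: int = 32,      # Llama 3.1 8B
    num_kv_heads: int = 8,     # grouped-query attention
    head_dim: int = 128,
    bytes_per_value: int = 2,  # assumes a 16-bit KV cache
) -> int:
    """Bytes needed to cache keys and values for num_tokens tokens."""
    per_token = 2 * num_layers * num_kv_heads * head_dim * bytes_per_value  # 2 = K and V
    return num_tokens * per_token


if __name__ == "__main__":
    for context in (8_192, 32_768, 131_072):
        gib = kv_cache_bytes(context) / 2**30
        print(f"{context:>7} tokens of context: {gib:.0f} GiB of KV cache")
```

With these assumptions each token costs 128 KiB of cache, so long contexts or many concurrent requests can consume a large share of VRAM even when the weights themselves are small.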

For a standard Llama 3.1 8B model using FP8 precision via TensorRT-LLM, practitioners can expect:

The L4 includes two NVENC and four NVDEC engines with AV1 support. This makes it a strong choice for AI agents that process video feeds in real time, such as automated video editing, surveillance analysis, or real-time transcription and translation services.

The L4 is designed for production. If you are a developer building an API-backed application and want to move away from expensive hosted APIs such as OpenAI or Anthropic, a cluster of L4s provides a predictable, low-latency environment. Its vGPU support allows teams to partition a single L4 into multiple smaller virtual GPUs for lighter workloads, such as embedding models (BERT, BGE-M3).

For those building "agentic" workflows, where an LLM must call tools, search the web, and execute code, the L4 provides the stability needed for 24/7 operation. Its low power draw keeps electricity costs down in a home lab or office closet, though as a passively cooled card it still depends on adequate chassis airflow.
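To put the 24/7 electricity cost in perspective, the sketch below computes annual energy use for a card running continuously at its rated power. Running at full TDP all year is a worst-case assumption, the $0.15/kWh price is a placeholder to replace with a local rate, and the function names are hypothetical.

```python
HOURS_PER_YEAR = 24 * 365


def annual_kwh(watts: float) -> float:
    """Energy used in one year at a constant draw of `watts`."""
    return watts * HOURS_PER_YEAR / 1000


def annual_cost(watts: float, price_per_kwh: float = 0.15) -> float:
    """Yearly electricity cost; the default price is an assumed placeholder."""
    return annual_kwh(watts) * price_per_kwh


if __name__ == "__main__":
    for name, watts in [("L4 (72 W)", 72), ("450 W GPU", 450)]:
        print(f"{name}: {annual_kwh(watts):.0f} kWh/year, "
              f"about ${annual_cost(watts):.0f}/year at the assumed rate")
```

The figures cover the GPU alone; the host system, cooling, and idle periods will shift the real number in either direction.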

For edge scenarios, the L4 is among the strongest options for local AI agents in 2025. Whether it's a smart factory or an on-premise medical imaging server, the L4's thermal profile and enterprise support lifecycle give it an advantage over consumer hardware.

The RTX 4090 is significantly faster in terms of raw compute and memory bandwidth (1 TB/s vs 300 GB/s). However, the 4090 is a 450W triple-slot card that is difficult to stack in servers. The L4 offers:

The L4 is the direct successor to the T4. It offers up to 2.5x the performance in AI inference and significantly better video encoding capabilities. If you are currently running T4 instances in the cloud (like AWS G4dn), moving to L4 (G6 instances) provides a massive uplift in tokens per second and allows for the use of FP8 precision.

While AMD’s MI300 series is competitive at the high end, NVIDIA remains the standard for local and mid-tier inference. The L4 benefits from the mature CUDA ecosystem and TensorRT, which generally offer better out-of-the-box optimization for new models like DeepSeek-R1 or Llama 3.1 compared to AMD's ROCm. For practitioners who want "plug and play" compatibility with the widest range of GitHub repositories and model architectures, the L4 is the safer bet.

| Model | Developer | Parameters | Grade | Speed | Memory |
|---|---|---|---|---|---|
| Qwen3-30B-A3B | Alibaba | 30B (3B active) | SS | 44.8 tok/s | 5.4 GB |
| | | 9B | SS | 40.2 tok/s | 6.0 GB |
| | | 8B | SS | 42.6 tok/s | 5.7 GB |
| Qwen3.6 35B-A3B | Alibaba | 35B (3B active) | AA | 28.3 tok/s | 8.5 GB |
| Qwen3.5-35B-A3B | Alibaba | 35B (3B active) | AA | 28.3 tok/s | 8.5 GB |
| Llama 2 7B Chat | Meta | 7B | AA | 50.4 tok/s | 4.8 GB |
| Mistral 7B Instruct | Mistral AI | 7B | AA | 37.8 tok/s | 6.4 GB |
| Llama 2 13B Chat | Meta | 13B | AA | 28.5 tok/s | 8.5 GB |
| Mixtral 8x7B Instruct | Mistral AI | 46.7B (12.9B active) | AA | 21.3 tok/s | 11.4 GB |
| Gemma 4 E4B IT | Google | 4B | AA | 34.9 tok/s | 6.9 GB |
| Gemma 3 4B IT | Google | 4B | AA | 34.9 tok/s | 6.9 GB |
| Gemma 4 26B-A4B IT | Google | 26B (4B active) | AA | 21.9 tok/s | 11.0 GB |
| Gemma 4 E2B IT | Google | 2B | AA | 65.1 tok/s | 3.7 GB |
| | | 8B | AA | 18.1 tok/s | 13.3 GB |
| minimax-m2.5 | MiniMax | 230B (10B active) | BB | 10.6 tok/s | 22.7 GB |
| Falcon 40B Instruct | Technology Innovation Institute | 40B | DD | 9.9 tok/s | 24.4 GB |
| Qwen3.5-9B | Alibaba | 9B | DD | 9.8 tok/s | 24.6 GB |
| Mistral Small 3 24B | Mistral AI | 24B | FF | 6.2 tok/s | 39.0 GB |
| Qwen3.6-27B | Alibaba | 27B | FF | 3.3 tok/s | 72.8 GB |
| Gemma 3 27B IT | Google | 27B | FF | 5.5 tok/s | 43.8 GB |
| Qwen3.5-27B | Alibaba | 27B | FF | 3.3 tok/s | 72.8 GB |
| Gemma 4 31B IT | Google | 31B | FF | 2.9 tok/s | 82.0 GB |
| Qwen3-32B | Alibaba | 32.8B | FF | 4.5 tok/s | 53.9 GB |
| LLaMA 65B | Meta | 65B | FF | 6.2 tok/s | 39.3 GB |
| Llama 2 70B Chat | Meta | 70B | FF | 5.6 tok/s | 43.4 GB |