
NVIDIA's Blackwell-architecture data center GPU with 192GB HBM3e and 8 TB/s bandwidth. Dual-die design delivers roughly 4x the AI inference throughput of H100 with native FP4 support.

Sized for production serving of 70B–200B class models at full or lightly-quantized precision. Overkill for a homelab; right call when the workload pays for itself in token volume. Notably efficient for its compute class — strong perf-per-watt makes it a natural pick for always-on inference.

Generated from this product’s spec sheet. Editor reviews refine it over time.

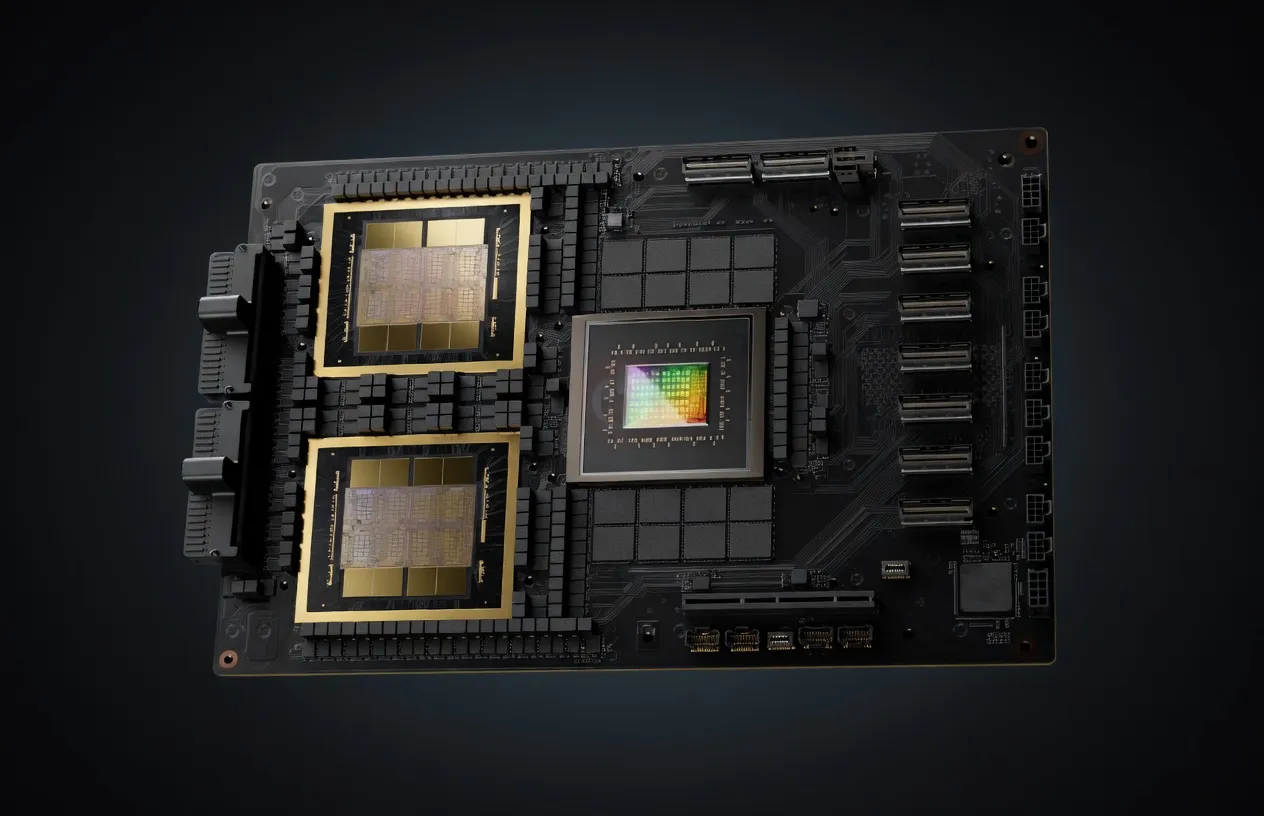

The NVIDIA B200 GPU is the centerpiece of the Blackwell architecture, representing the most significant leap in data center compute since the introduction of the H100. Designed specifically for the massive compute requirements of generative AI and agentic workflows, the B200 transitions from a monolithic silicon design to a dual-die, multi-chip module (MCM) approach. This architecture is engineered to solve the primary bottleneck in modern AI: the memory wall.

Manufactured on the custom TSMC 4NP process, the B200 houses 208 billion transistors. It is positioned as the premier enterprise-grade solution for training and deploying frontier models. While the H100 defined the LLM explosion of 2023-2024, the B200 is built for the "Agentic Era," where high-throughput inference and real-time reasoning are the primary requirements. It competes directly with the AMD Instinct MI325X and specialized ASICs, though NVIDIA’s software ecosystem (CUDA, TensorRT) remains its most formidable competitive advantage.

For AI engineers, the most critical upgrade in the B200 is the second-generation Transformer Engine with native FP4 support. It allows a large increase in throughput while limiting the accuracy degradation typically seen in lower-precision formats.

The B200 features 192GB of HBM3e memory. While the capacity is a significant jump from the H100 (80GB), the real story is the 8 TB/s (8,000 GB/s) of memory bandwidth. In LLM inference, token generation is usually memory-bandwidth bound rather than compute-bound, because each new token requires reading the model's active weights from memory. The 8 TB/s of bandwidth lets the B200 feed its compute cores fast enough to sustain high tokens-per-second (TPS) even on models with very large parameter counts.
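The bandwidth-bound argument can be turned into a back-of-the-envelope estimate. The sketch below is a rough single-stream model, not a benchmark: the function name, the `efficiency` derating factor, and the 70 GB example workload are all assumptions made for illustration.

```python
def decode_tokens_per_second(bandwidth_gb_s: float,
                             gb_read_per_token: float,
                             efficiency: float = 1.0) -> float:
    """Estimate single-stream decode rate when generation is memory-bound.

    Each generated token streams the active weights (plus KV cache) from
    HBM once, so the rate is bandwidth divided by the data moved per token.
    `efficiency` is a hypothetical factor for achieved vs. peak bandwidth.
    """
    if gb_read_per_token <= 0:
        raise ValueError("gb_read_per_token must be positive")
    return efficiency * bandwidth_gb_s / gb_read_per_token


B200_BANDWIDTH_GB_S = 8000.0  # 8 TB/s, from the spec quoted above

# Assumed workload: a 70B model at FP8, about 70 GB of weights read per
# token, with KV cache traffic ignored.
ideal = decode_tokens_per_second(B200_BANDWIDTH_GB_S, 70.0)
derated = decode_tokens_per_second(B200_BANDWIDTH_GB_S, 70.0, efficiency=0.8)
print(f"ideal: {ideal:.1f} tok/s, at 80% efficiency: {derated:.1f} tok/s")
```

For reference, multiplying tok/s by GB for any row of the model table on this page gives about 6,440 GB/s, which would correspond to an efficiency near 0.8. That reading is inferred from the numbers and is not a documented method.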

With FP4, the B200 delivers roughly 4x the AI inference performance of the H100. The gain comes from the lower-precision format combined with the dual-die design: the two dies are connected by a 10 TB/s chip-to-chip interconnect and appear to the software stack as a single unified GPU.

The B200 utilizes NVLink 5.0, providing 1,800 GB/s of bidirectional bandwidth. This is essential for multi-GPU clusters running models that exceed 192GB of VRAM. However, this performance comes with a high thermal cost; the B200 has a TDP of 1000W. For most production deployments, liquid cooling is not just recommended—it is a requirement to prevent thermal throttling and maintain the reliability needed for 24/7 inference servers.
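To put the 1000W TDP in operating terms, the arithmetic for an always-on server can be sketched as follows. The utilization and PUE (facility overhead) parameters are illustrative assumptions, not figures from the spec sheet.

```python
HOURS_PER_YEAR = 24 * 365  # 8760 hours, ignoring leap years


def annual_energy_kwh(tdp_watts: float,
                      utilization: float = 1.0,
                      pue: float = 1.0) -> float:
    """Yearly energy in kWh for one GPU running around the clock.

    `utilization` scales average draw relative to TDP; `pue` is a
    hypothetical data center overhead multiplier (cooling, power delivery).
    """
    return tdp_watts / 1000.0 * HOURS_PER_YEAR * utilization * pue


gpu_only = annual_energy_kwh(1000)                 # GPU at full TDP all year
with_facility = annual_energy_kwh(1000, pue=1.3)   # assumed PUE of 1.3
print(f"GPU only: {gpu_only:.0f} kWh/year")
print(f"With assumed facility overhead: {with_facility:.0f} kWh/year")
```

Multiplying the result by a local electricity rate gives a first-order running cost per card.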

With 192GB of HBM3e, the B200 changes the math for single-GPU deployments of large language models. It can host on a single card models that previously required a 4-GPU or 8-GPU node, provided the weights fit at the chosen precision.

While actual TPS depends on the software stack (TensorRT-LLM vs. vLLM), the B200's 8 TB/s bandwidth allows for:

The "sweet spot" for the B200 is FP8. The Blackwell architecture is optimized for FP8 and FP4; these precisions provide the best quality-to-speed tradeoff, roughly doubling (FP8) or quadrupling (FP4) throughput over traditional FP16, typically without noticeable loss in benchmark scores for most LLM tasks.
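The effect of precision on memory footprint is simple arithmetic: bytes per parameter times parameter count. The sketch below checks whether weights alone fit in 192GB. The 10% headroom reserved for KV cache and runtime buffers is an assumption, and FP4 scaling-factor overhead is ignored.

```python
# Nominal storage cost per parameter for each precision.
BYTES_PER_PARAM = {"FP16": 2.0, "FP8": 1.0, "FP4": 0.5}
B200_VRAM_GB = 192.0


def weight_footprint_gb(params_billions: float, precision: str) -> float:
    """Weights only: billions of parameters times bytes per parameter = GB."""
    return params_billions * BYTES_PER_PARAM[precision]


def fits_on_one_gpu(params_billions: float, precision: str,
                    vram_gb: float = B200_VRAM_GB,
                    headroom_fraction: float = 0.10) -> bool:
    """True if weights fit after reserving an assumed headroom fraction."""
    usable_gb = vram_gb * (1.0 - headroom_fraction)
    return weight_footprint_gb(params_billions, precision) <= usable_gb


for size_b in (70, 180, 405):
    for precision in ("FP16", "FP8", "FP4"):
        gb = weight_footprint_gb(size_b, precision)
        verdict = "fits" if fits_on_one_gpu(size_b, precision) else "does not fit"
        print(f"{size_b}B @ {precision}: {gb:.1f} GB, {verdict}")
```

Under these assumptions a dense 70B model fits at every precision, while a dense 405B model exceeds a single card even at FP4.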

The B200 is not a consumer product. With an MSRP of $40,000 and a 1000W power draw, it is targeted at specific high-stakes environments.

The B200 is well suited to on-premises deployment of agentic systems. Agents require low-latency "thinking" cycles: if an agent needs to call an LLM five times to complete one task, the per-call latencies add up, and a slow GPU makes the agent feel unresponsive. The B200’s high inference performance minimizes this latency.
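The way sequential calls compound can be sketched with a small latency model. The token counts and throughput figures below are hypothetical inputs chosen for illustration, and prompt processing (prefill) time is ignored.

```python
def agent_task_latency_s(num_calls: int,
                         output_tokens_per_call: int,
                         tokens_per_second: float,
                         overhead_s_per_call: float = 0.0) -> float:
    """Total wall-clock time for an agent that makes its LLM calls in series.

    Each call costs generation time (tokens / rate) plus a fixed per-call
    overhead (tool execution, network); prefill time is not modeled.
    """
    per_call_s = output_tokens_per_call / tokens_per_second + overhead_s_per_call
    return num_calls * per_call_s


# Five sequential calls of 200 output tokens each (assumed workload).
slow = agent_task_latency_s(5, 200, tokens_per_second=20.0)
fast = agent_task_latency_s(5, 200, tokens_per_second=100.0)
print(f"20 tok/s: {slow:.0f} s per task, 100 tok/s: {fast:.0f} s per task")
```

Because the calls are serial, any improvement in per-call generation speed carries through linearly to the task as a whole.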

For organizations that cannot send data to OpenAI or Anthropic due to compliance (HIPAA, GDPR), the B200 provides the necessary horsepower to run frontier-level models in a private data center. For teams that need to scale to thousands of concurrent users, it is a strong hardware option for on-premises AI agents in 2025.

While the B200 is an inference powerhouse, its 192GB VRAM makes it an exceptional tool for fine-tuning. Researchers can perform Parameter-Efficient Fine-Tuning (PEFT) on 405B models or full fine-tuning on 70B models without the complexity of sharding across dozens of smaller GPUs.
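Fine-tuning memory is dominated by optimizer state rather than weights, which a rough estimate makes concrete. The sketch assumes the common mixed-precision Adam rule of thumb of 16 bytes per parameter and a 4-bit frozen base model for PEFT. Activations, adapter weights, and framework overhead are left out, so real requirements are higher.

```python
import math

# Mixed-precision Adam rule of thumb (assumption): FP16 weights (2) +
# FP16 gradients (2) + FP32 master weights (4) + Adam momentum (4) +
# Adam variance (4) bytes per parameter.
FULL_FT_BYTES_PER_PARAM = 2 + 2 + 4 + 4 + 4
PEFT_BASE_BYTES_PER_PARAM = 0.5  # frozen base stored in 4-bit (assumption)


def training_state_gb(params_billions: float, bytes_per_param: float) -> float:
    """Model and optimizer state in GB, excluding activations."""
    return params_billions * bytes_per_param


def gpus_to_hold(state_gb: float, vram_per_gpu_gb: float) -> int:
    """Minimum GPU count whose combined VRAM covers the state."""
    return math.ceil(state_gb / vram_per_gpu_gb)


full_70b_gb = training_state_gb(70, FULL_FT_BYTES_PER_PARAM)
peft_405b_gb = training_state_gb(405, PEFT_BASE_BYTES_PER_PARAM)

print(f"Full fine-tune, 70B: {full_70b_gb:.0f} GB -> "
      f"{gpus_to_hold(full_70b_gb, 192)} x 192GB or "
      f"{gpus_to_hold(full_70b_gb, 80)} x 80GB GPUs")
print(f"PEFT base weights, 405B: {peft_405b_gb:.1f} GB -> "
      f"{gpus_to_hold(peft_405b_gb, 192)} x 192GB GPUs")
```

Under these assumptions the larger per-card memory cuts the GPU count substantially, though neither workload fits on a single card.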

When evaluating the B200, practitioners typically look at two alternatives: the previous generation H100 and AMD’s flagship offerings.

The H100 remains a capable card, but the B200 renders it obsolete for high-throughput inference. The B200 offers 2.4x the VRAM (192GB vs 80GB) and significantly higher bandwidth. Matching the VRAM capacity of a single B200 takes three H100s (240GB), and a 405B-parameter model at FP8 (about 405GB of weights) needs at least six H100s versus three B200s, making the B200 more cost-effective and power-efficient on a per-token basis.
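The GPU-count comparison for a 405B model follows from dividing the weight footprint by per-card VRAM and rounding up. This sketch counts weights only; KV cache, activations, and parallelism overhead are ignored, so the results are lower bounds.

```python
import math

VRAM_GB = {"H100": 80, "B200": 192}          # capacities quoted in the text
BYTES_PER_PARAM = {"FP16": 2.0, "FP8": 1.0}  # nominal storage per parameter


def min_gpus_for_weights(params_billions: float, precision: str, gpu: str) -> int:
    """Smallest GPU count whose combined VRAM holds the weights alone."""
    weights_gb = params_billions * BYTES_PER_PARAM[precision]
    return math.ceil(weights_gb / VRAM_GB[gpu])


for precision in ("FP16", "FP8"):
    h100 = min_gpus_for_weights(405, precision, "H100")
    b200 = min_gpus_for_weights(405, precision, "B200")
    print(f"405B @ {precision}: {h100} x H100 vs {b200} x B200")
```

In practice tensor-parallel deployments usually round up to a power of two, which tends to widen the gap in node count.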

The AMD MI325X is a strong competitor in terms of raw hardware, offering more VRAM (up to 256GB). However, the B200's inference performance is bolstered by the second-generation Transformer Engine and the FP4 data type, for which the MI325X currently has no native equivalent. Furthermore, NVIDIA’s software maturity, specifically the ease of deploying models via NIMs (NVIDIA Inference Microservices), gives it the edge for teams that need production-ready reliability today.

Against consumer cards, there is no comparison in a professional setting. While an RTX 5090 might be one of the best NVIDIA GPUs for running AI models locally at a hobbyist level, it lacks the HBM3e bandwidth, NVLink scale-out capabilities, and VRAM capacity required for enterprise agentic workloads or frontier model inference (consumer cards typically offer 24GB-32GB). The B200 is designed for 24/7 duty cycles; consumer cards are not.

| Model | Developer | Parameters | Grade | Speed | Memory |
|---|---|---|---|---|---|
| | | 70B | SS | 57.1 tok/s | 112.8 GB |
| | | 70B | SS | 57.1 tok/s | 112.8 GB |
| Nvidia Nemotron 3 Super | NVIDIA | 120B (12B active) | SS | 62.2 tok/s | 103.5 GB |
| DeepSeek-V4-Flash | DeepSeek | 284B (13B active) | SS | 57.5 tok/s | 112.0 GB |
| Llama 4 Maverick | Meta | 400B (17B active) | SS | 44.0 tok/s | 146.4 GB |
| GLM-5 | Z.ai | 744B (40B active) | SS | 73.4 tok/s | 87.7 GB |
| GLM-5.1 | Z.ai | 744B (40B active) | SS | 73.4 tok/s | 87.7 GB |
| Kimi K2.6 | Moonshot AI | 1000B (32B active) | SS | 74.7 tok/s | 86.2 GB |
| Kimi K2 Instruct 0905 | Moonshot AI | 1000B (32B active) | SS | 76.1 tok/s | 84.6 GB |
| Kimi K2 Thinking | Moonshot AI | 1000B (32B active) | SS | 76.1 tok/s | 84.6 GB |
| Kimi K2.5 | Moonshot AI | 1000B (32B active) | SS | 76.1 tok/s | 84.6 GB |
| Falcon 180B | Technology Innovation Institute | 180B | SS | 59.7 tok/s | 107.8 GB |
| GLM-4.6 | Z.ai | 355B (32B active) | SS | 91.7 tok/s | 70.3 GB |
| Gemma 4 31B IT | Google | 31B | SS | 78.6 tok/s | 82.0 GB |
| Mistral Large 3 675B | Mistral AI | 675B (41B active) | SS | 97.2 tok/s | 66.3 GB |
| Qwen3.6-27B | Alibaba | 27B | SS | 88.5 tok/s | 72.8 GB |
| Qwen3.5-27B | Alibaba | 27B | SS | 88.5 tok/s | 72.8 GB |
| DeepSeek-V3 | DeepSeek | 671B (37B active) | SS | 107.6 tok/s | 59.8 GB |
| DeepSeek-R1 | DeepSeek | 671B (37B active) | SS | 107.6 tok/s | 59.8 GB |
| DeepSeek-V3.1 | DeepSeek | 671B (37B active) | SS | 107.6 tok/s | 59.8 GB |
| DeepSeek-V3.2 | DeepSeek | 685B (37B active) | SS | 107.6 tok/s | 59.8 GB |
| GLM-4.7 | Z.ai | 358B (32B active) | SS | 122.4 tok/s | 52.6 GB |
| GLM-4.5 | Z.ai | 355B (32B active) | SS | 124.3 tok/s | 51.8 GB |
| Kimi K2 Instruct | Moonshot AI | 1000B (32B active) | SS | 124.3 tok/s | 51.8 GB |
| Qwen3.5-397B-A17B | Alibaba | 397B (17B active) | SS | 140.0 tok/s | 46.0 GB |