
Memory-upgraded Hopper GPU with 141GB of HBM3e and 4.8 TB/s of bandwidth. It has the same compute as the H100, but the larger VRAM lets it serve larger models on fewer GPUs.

Sized for production serving of 70B–200B class models at full or lightly-quantized precision. Overkill for a homelab; right call when the workload pays for itself in token volume. Notably efficient for its compute class — strong perf-per-watt makes it a natural pick for always-on inference.

Generated from this product’s spec sheet. Editor reviews refine it over time.

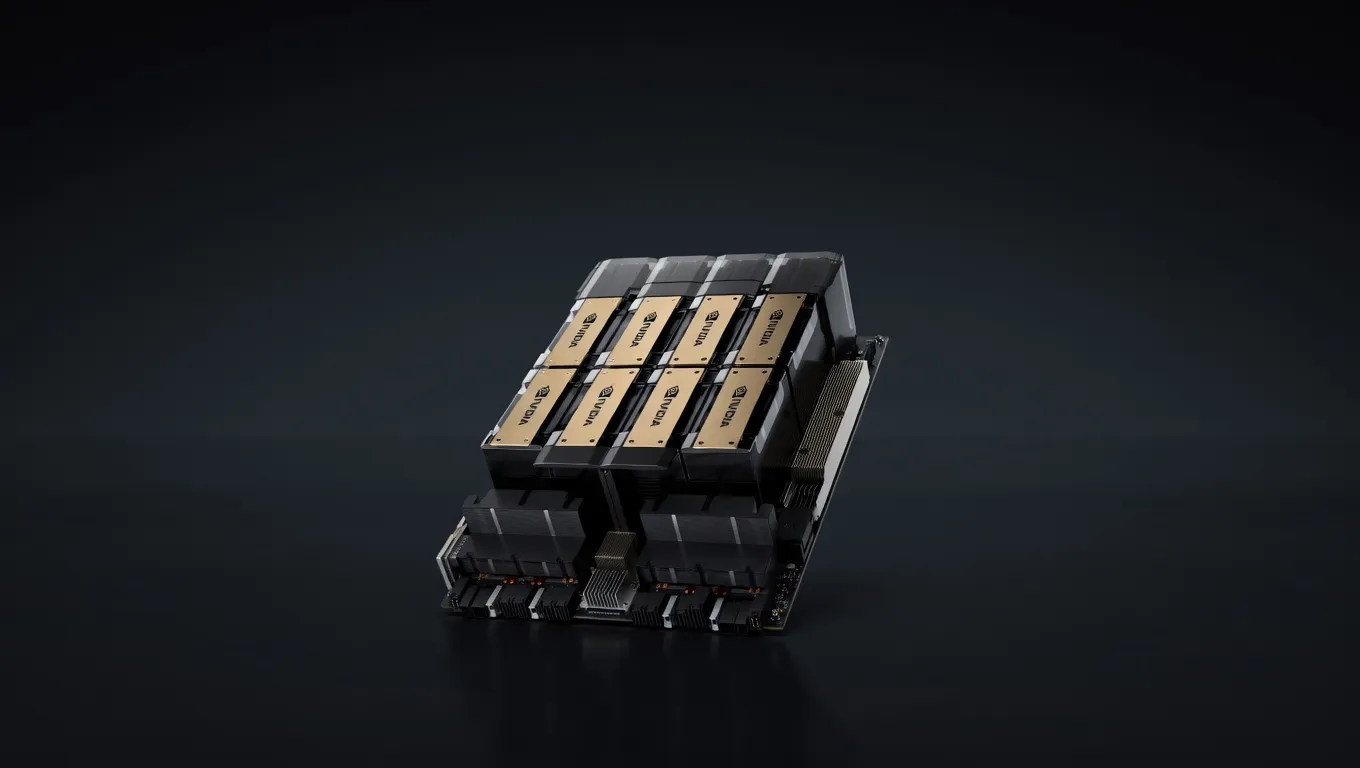

The NVIDIA H200 SXM 141GB represents the current ceiling for single-GPU inference performance within the Hopper architecture. While the underlying compute engine remains identical to the H100, the H200 introduces a critical upgrade to HBM3e memory, increasing capacity to 141GB and bandwidth to 4.8 TB/s. For engineers building agentic workflows or deploying frontier-class models, this hardware is the industry standard for high-throughput production environments.

Manufactured by NVIDIA on the TSMC 4N process, the H200 SXM is a data-center grade GPU designed for dense compute clusters. It occupies the top tier of the market, competing primarily against the AMD Instinct MI300X. While consumer cards like the RTX 4090 are suitable for prototyping, the H200 is built for teams running 24/7 inference servers where VRAM density and NVLink interconnect speeds are the primary bottlenecks.

The defining characteristic of the NVIDIA H200 SXM 141GB for AI workloads is its memory subsystem. In LLM inference, the "memory wall" is often the limiting factor for tokens per second (TPS): each decoding step must stream the model weights from memory, so bandwidth caps throughput. With 4.8 TB/s of memory bandwidth, roughly 1.4x the H100's 3.35 TB/s, the H200 significantly reduces the per-token latency of autoregressive decoding.
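To make the bandwidth argument concrete, here is a back-of-the-envelope sketch in Python. It assumes single-stream decoding reads every weight once per generated token, so memory bandwidth divided by the weight footprint gives an upper bound on tokens per second. The 70 GB footprint (a hypothetical 70B model at one byte per parameter) and the function name are illustrative assumptions; the 3.35 TB/s H100 figure is the one quoted in the H100 comparison on this page. Real throughput will be lower because of KV-cache reads, kernel overheads, and scheduling.

```python
def decode_tps_upper_bound(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Upper bound on single-stream decode speed, in tokens per second.

    Assumes each generated token streams the full set of weights from
    HBM exactly once and that nothing else limits throughput.
    """
    if weights_gb <= 0:
        raise ValueError("weights_gb must be positive")
    return bandwidth_gb_s / weights_gb


H200_BANDWIDTH_GB_S = 4800.0  # 4.8 TB/s, from the spec on this page
H100_BANDWIDTH_GB_S = 3350.0  # 3.35 TB/s, H100 figure quoted on this page
WEIGHTS_GB = 70.0             # assumption: 70B parameters at 1 byte each (FP8)

h200_bound = decode_tps_upper_bound(H200_BANDWIDTH_GB_S, WEIGHTS_GB)
h100_bound = decode_tps_upper_bound(H100_BANDWIDTH_GB_S, WEIGHTS_GB)

print(f"H200 bound: {h200_bound:.1f} tok/s")
print(f"H100 bound: {h100_bound:.1f} tok/s")
print(f"Ratio:      {h200_bound / h100_bound:.2f}x")
```

The ratio depends only on the two bandwidth figures, which is why the speedup over the H100 holds across model sizes as long as decoding stays memory-bound.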

For AI development, 141GB of VRAM is a meaningful threshold. It lets a single GPU hold models that previously required sharding across multiple GPUs. The 4th-generation Tensor Cores and the dedicated Transformer Engine (with FP8 support) keep the hardware well suited to quantized and sparse model architectures.

The H200 is specifically designed to handle the largest open-weights models currently available. Its 141GB capacity changes the math for local LLM deployment, particularly for long-context applications and high-precision inference.
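Long context matters because the KV cache grows linearly with sequence length and competes with the weights for the same 141GB. The sketch below estimates that growth in Python. The architecture numbers (80 layers, 8 KV heads, head dimension 128) are assumptions taken from the published Llama 2 70B configuration, not from this page; the function name and the context lengths in the loop are illustrative.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, batch_size: int = 1,
                   bytes_per_value: int = 2) -> int:
    """Size of the key/value cache in bytes.

    The leading factor of 2 accounts for storing both keys and values
    at every layer for every cached token.
    """
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_value)


# Assumed Llama 2 70B shape (grouped-query attention), FP16 cache.
LAYERS, KV_HEADS, HEAD_DIM = 80, 8, 128

per_token = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, seq_len=1)
for context in (4_096, 32_768, 131_072):
    total = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, seq_len=context)
    print(f"{context:>7} tokens: {total / 2**30:.2f} GiB")
```

Under these assumptions the cache costs 320 KiB per token per sequence, so batch size multiplies the total directly.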

For a 70B-parameter model at FP8, the H200 SXM delivers approximately 2x the tokens per second of an A100 80GB, largely due to the combination of the Transformer Engine and HBM3e bandwidth. Larger models, such as 180B-class models at FP16 or 405B-class models at FP8, exceed 141GB for weights alone (about 360GB and 405GB respectively) and must be sharded across several H200s in a node.
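A rough weights-only estimate shows which model sizes fit on how many H200s. The Python sketch below multiplies parameter count by bytes per parameter and divides by the 141GB capacity. The helper names are illustrative, and the result is a lower bound: it deliberately ignores KV cache, activations, and framework overhead.

```python
import math

H200_VRAM_GB = 141.0  # per-GPU capacity, from the spec on this page


def weights_gb(params_billions: float, bytes_per_param: float) -> float:
    """Weight footprint in GB (1 GB = 1e9 bytes), weights only."""
    return params_billions * bytes_per_param


def min_gpus(params_billions: float, bytes_per_param: float,
             vram_gb: float = H200_VRAM_GB) -> int:
    """Smallest GPU count whose combined VRAM holds the weights.

    Ignores KV cache, activations, and framework overhead, so real
    deployments usually need headroom beyond this lower bound.
    """
    return math.ceil(weights_gb(params_billions, bytes_per_param) / vram_gb)


# (label, parameters in billions, bytes per parameter)
cases = [
    ("70B @ FP8", 70, 1),
    ("70B @ FP16", 70, 2),
    ("180B @ FP16", 180, 2),
    ("405B @ FP8", 405, 1),
]
for label, params, width in cases:
    print(f"{label:<12} {weights_gb(params, width):>5.0f} GB -> "
          f"at least {min_gpus(params, width)} GPU(s)")
```

Note that 70B at FP16 fits on paper with about 1GB to spare, which leaves essentially no room for a KV cache; in practice that configuration would need FP8 weights or a second GPU.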

The H200 SXM is not a hobbyist card for a home office; it is production-ready infrastructure for those building the next generation of AI agents.

For teams moving from R&D to production, the H200 is a reliable path. Its support for NVIDIA’s full software stack (CUDA, TensorRT-LLM, Triton Inference Server) helps keep deployment pipelines robust. It is a strong choice for self-hosted AI agents in 2025, where low-latency response times are non-negotiable.

While primarily marketed for inference, the H200 is also a strong GPU for agent training and fine-tuning. The 141GB of VRAM allows for larger batch sizes and longer sequence lengths during Supervised Fine-Tuning (SFT) or Reinforcement Learning from Human Feedback (RLHF). This is critical for agents that need to maintain state over long trajectories.

For organizations looking to internalize their AI spend and move away from closed-source APIs, a cluster of H200s provides the necessary density to serve thousands of requests per minute. The SXM form factor, integrated via HGX boards, provides 900 GB/s of NVLink bandwidth per GPU, letting the GPUs on a board work together much like a single, larger accelerator.

When choosing the best NVIDIA GPUs for running AI models locally or in a private cloud, the H200 sits at the top of the hierarchy, but it faces competition in specific niches.

The H100 is the direct predecessor. The H200 offers the same compute (TFLOPS) but provides nearly double the VRAM capacity (141GB vs 80GB) and significantly higher bandwidth (4.8 TB/s vs 3.35 TB/s). If your workload is compute-bound (small models, large batches), the H100 may suffice. If your workload is memory-bound (large LLMs, long context, high TPS), the H200 is the mandatory upgrade.
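One way to tell which side of that line a workload falls on is the roofline ridge point: peak compute divided by memory bandwidth, in FLOPs per byte. The Python sketch below uses an assumed dense FP16 peak of 989 TFLOPS for both cards (this figure is not stated on this page and should be checked against the vendor datasheet). It also assumes that decoding a batch of B sequences with FP16 weights performs about 2 FLOPs per parameter per sequence while reading 2 bytes per parameter once, giving an arithmetic intensity of roughly B FLOPs per byte.

```python
ASSUMED_PEAK_TFLOPS = 989.0  # assumption: dense FP16 peak, same for both GPUs


def ridge_point(peak_tflops: float, bandwidth_tb_s: float) -> float:
    """Arithmetic intensity (FLOPs/byte) above which a kernel is compute-bound."""
    return peak_tflops / bandwidth_tb_s


def decode_intensity(batch_size: int, bytes_per_param: float = 2.0) -> float:
    """Approximate FLOPs per byte of weights read during one decode step.

    Each sequence in the batch costs ~2 FLOPs per parameter, while the
    weights are read once per step regardless of batch size.
    """
    return 2.0 * batch_size / bytes_per_param


h100_ridge = ridge_point(ASSUMED_PEAK_TFLOPS, 3.35)  # bandwidth in TB/s
h200_ridge = ridge_point(ASSUMED_PEAK_TFLOPS, 4.8)

for batch in (1, 64, 256):
    intensity = decode_intensity(batch)
    for name, ridge in (("H100", h100_ridge), ("H200", h200_ridge)):
        regime = "memory-bound" if intensity < ridge else "compute-bound"
        print(f"batch {batch:>3} on {name}: {regime}")
```

With these assumed numbers the H200's ridge point sits lower than the H100's, so a given batch size crosses into compute-bound territory sooner; below that point, the extra bandwidth translates directly into throughput.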

The AMD MI300X is the primary competitor in the data center space, offering 192GB of VRAM and 5.3 TB/s bandwidth. On paper, the AMD card offers more memory for a lower MSRP. However, the NVIDIA H200 maintains an advantage in software ecosystem maturity. For practitioners, the "NVIDIA vs AMD for AI inference" debate often comes down to software: NVIDIA’s TensorRT-LLM and the ubiquity of CUDA often result in faster time-to-deployment and better real-world optimization for agentic frameworks compared to AMD’s ROCm.

The Blackwell architecture is the successor to Hopper. While Blackwell offers significantly higher FP8 compute, the H200 remains the "in-stock" and stable choice for 2024-2025 deployments. For teams needing to ship production agents today, the H200 provides the most reliable performance-to-availability ratio.

| Model | Developer | Parameters | Rating | Speed | Memory |
|---|---|---|---|---|---|
| GLM-4.6 | Z.ai | 355B (32B active) | SS | 55.0 tok/s | 70.3 GB |
| GLM-5 | Z.ai | 744B (40B active) | SS | 44.1 tok/s | 87.7 GB |
| GLM-5.1 | Z.ai | 744B (40B active) | SS | 44.1 tok/s | 87.7 GB |
| Kimi K2.6 | Moonshot AI | 1000B (32B active) | SS | 44.8 tok/s | 86.2 GB |
| Kimi K2 Instruct 0905 | Moonshot AI | 1000B (32B active) | SS | 45.7 tok/s | 84.6 GB |
| Kimi K2 Thinking | Moonshot AI | 1000B (32B active) | SS | 45.7 tok/s | 84.6 GB |
| Kimi K2.5 | Moonshot AI | 1000B (32B active) | SS | 45.7 tok/s | 84.6 GB |
| Mistral Large 3 675B | Mistral AI | 675B (41B active) | SS | 58.3 tok/s | 66.3 GB |
| Carnice-V2-27b | kai-os | 27B | SS | 53.1 tok/s | 72.8 GB |
| Qwen3.6-27B | Alibaba | 27B | SS | 53.1 tok/s | 72.8 GB |
| Qwen3.5-27B | Alibaba | 27B | SS | 53.1 tok/s | 72.8 GB |
| Gemma 4 31B IT | Google | 31B | SS | 47.1 tok/s | 82.0 GB |
| DeepSeek-V3 | DeepSeek | 671B (37B active) | SS | 64.6 tok/s | 59.8 GB |
| DeepSeek-R1 | DeepSeek | 671B (37B active) | SS | 64.6 tok/s | 59.8 GB |
| DeepSeek-V3.1 | DeepSeek | 671B (37B active) | SS | 64.6 tok/s | 59.8 GB |
| DeepSeek-V3.2 | DeepSeek | 685B (37B active) | SS | 64.6 tok/s | 59.8 GB |
| Nvidia Nemotron 3 Super | NVIDIA | 120B (12B active) | SS | 37.3 tok/s | 103.5 GB |
| GLM-4.7 | Z.ai | 358B (32B active) | SS | 73.4 tok/s | 52.6 GB |
| GLM-4.5 | Z.ai | 355B (32B active) | SS | 74.6 tok/s | 51.8 GB |
| Kimi K2 Instruct | Moonshot AI | 1000B (32B active) | SS | 74.6 tok/s | 51.8 GB |
| | | 70B | SS | 84.6 tok/s | 45.7 GB |
| Qwen3.5-397B-A17B | Alibaba | 397B (17B active) | SS | 84.0 tok/s | 46.0 GB |
| Qwen3-32B | Alibaba | 32.8B | SS | 71.7 tok/s | 53.9 GB |
| Qwen 3.5 Omni | Alibaba | 397B (17B active) | SS | 85.5 tok/s | 45.2 GB |
| Llama 2 70B Chat | Meta | 70B | SS | 89.0 tok/s | 43.4 GB |
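
A quick check on the listed figures: multiplying each row's speed by its memory footprint gives a nearly constant value, which is what a bandwidth-bound estimate would produce. The Python sketch below runs that check on a few rows copied from the rows above. It is only an observation about the numbers shown here, not a statement of how they were generated.

```python
H200_BANDWIDTH_GB_S = 4800.0  # 4.8 TB/s, from the spec on this page

# (model, tok/s, memory in GB), copied from the rows above
rows = [
    ("GLM-4.6", 55.0, 70.3),
    ("Kimi K2.6", 44.8, 86.2),
    ("Mistral Large 3 675B", 58.3, 66.3),
    ("DeepSeek-V3", 64.6, 59.8),
    ("Nvidia Nemotron 3 Super", 37.3, 103.5),
    ("Llama 2 70B Chat", 89.0, 43.4),
]

# Implied bytes moved per second if every token reads the full footprint.
implied = {name: tps * gb for name, tps, gb in rows}
fractions = {name: value / H200_BANDWIDTH_GB_S for name, value in implied.items()}

for name in implied:
    print(f"{name:<24} {implied[name]:7.1f} GB/s  "
          f"({fractions[name]:.1%} of peak)")
```

Every sampled row lands at roughly 80% of the 4.8 TB/s peak, so the listed speeds track memory footprint rather than total parameter count.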