
Professional Ada Lovelace workstation GPU with 48GB GDDR6 ECC memory. Designed for AI development, scientific visualization, and professional rendering with enterprise driver support.

The first tier where 70B-class models stop feeling cramped. Headroom for KV cache means 32K+ context on Q4 quants without falling off the GPU. Notably efficient for its compute class — strong perf-per-watt makes it a natural pick for always-on inference.

Generated from this product’s spec sheet. Editor reviews refine it over time.

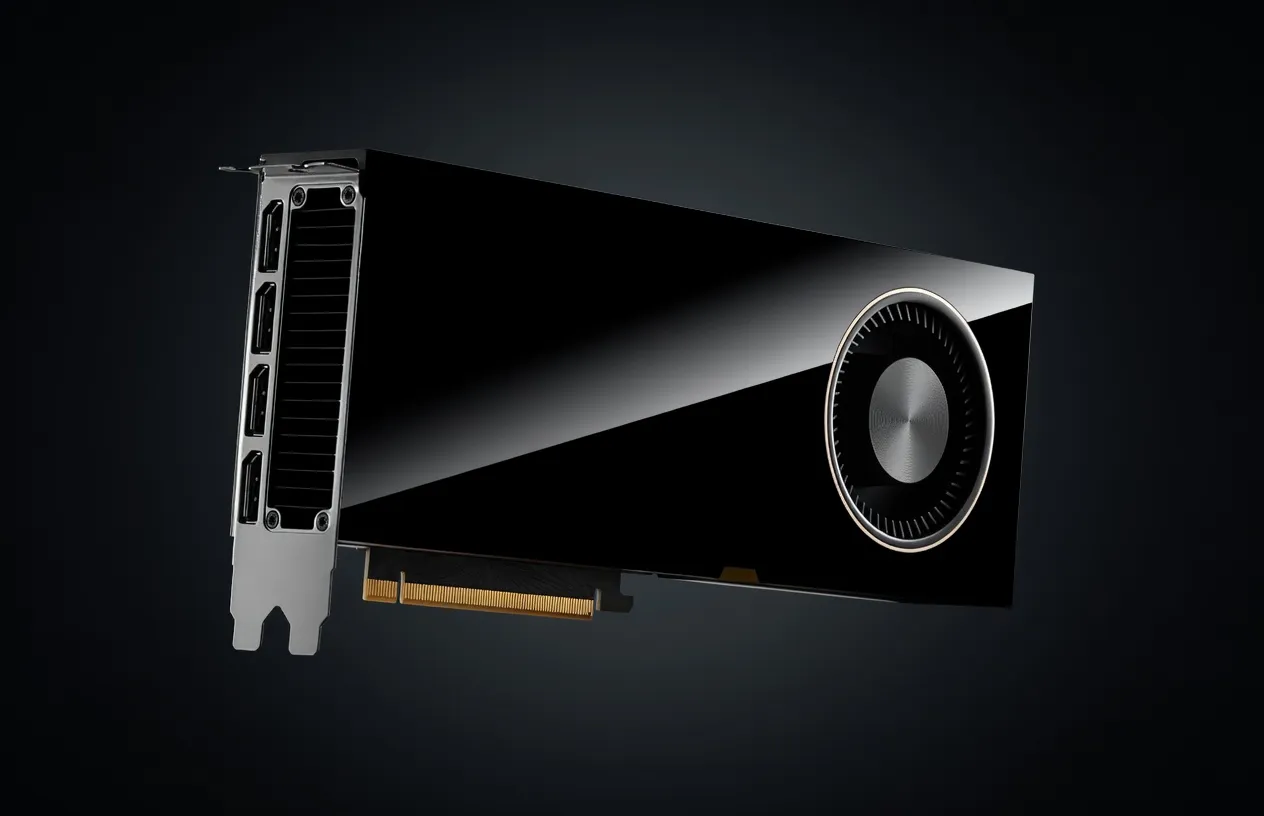

The NVIDIA RTX 6000 Ada Generation is the flagship professional workstation GPU of NVIDIA’s Ada Lovelace lineup, aimed at engineers who need maximum VRAM without moving to data-center-class H100 or B200 hardware. Built on the AD102 die, this card bridges the gap between high-end consumer hardware and enterprise-grade server clusters.

While the consumer-facing GeForce RTX 4090 shares the same AD102 silicon, the RTX 6000 Ada is a different beast for AI development. It enables more of the die (18,176 CUDA cores versus 16,384 on the 4090), doubles the VRAM to 48GB of GDDR6, and ships with enterprise-grade drivers certified for stability in production environments. For teams building agentic workflows or researchers fine-tuning local models, it is among the most capable dual-slot cards currently available for local AI inference and development.

For AI workloads, VRAM is the primary bottleneck. The RTX 6000 Ada provides 48GB of GDDR6 memory with ECC (Error Correction Code), which is critical for long-running training jobs or inference servers where bit-flip errors can crash a production pipeline. With a 384-bit memory bus providing 960 GB/s of bandwidth, this card ensures that weights move from VRAM to the compute cores fast enough to maintain high tokens-per-second (t/s) during autoregressive generation.
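
The link between bandwidth and tokens per second can be sketched with a simple bound: during autoregressive decoding, each generated token has to stream roughly the active weights out of VRAM once, so throughput cannot exceed bandwidth divided by the bytes read per token. The function name, the `efficiency` parameter, and the example footprints below are illustrative assumptions, not measurements.

```python
# Rough upper bound on decode speed for a memory-bandwidth-bound model.
# Assumption: every generated token reads `weights_read_gb` from VRAM once.

RTX_6000_ADA_BANDWIDTH_GB_S = 960.0  # bandwidth figure quoted in the text


def decode_tokens_per_second(bandwidth_gb_s, weights_read_gb, efficiency=1.0):
    """Return the bandwidth-limited tokens/s for a given per-token read size.

    `efficiency` is a hypothetical fudge factor in (0, 1] for the share of
    peak bandwidth a real backend achieves.
    """
    if weights_read_gb <= 0:
        raise ValueError("weights_read_gb must be positive")
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return efficiency * bandwidth_gb_s / weights_read_gb


# Illustrative footprints only; real models and quantizations will differ.
for footprint_gb in (5.0, 20.0, 40.0):
    bound = decode_tokens_per_second(RTX_6000_ADA_BANDWIDTH_GB_S, footprint_gb)
    print(f"{footprint_gb:5.1f} GB read per token -> at most {bound:6.1f} tok/s")
```

Real throughput lands below this bound because of KV cache reads, kernel launch overhead, and imperfect bandwidth utilization, which is what the `efficiency` argument is meant to approximate.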

The raw compute numbers are staggering for a workstation card:

The 4th Gen Tensor Cores include specialized support for the FP8 data format, which reduces memory footprints and increases throughput for modern LLMs compared to standard FP16. In terms of power efficiency, the card has a TDP of 300W, which is significantly lower than the 450W+ seen on the RTX 4090. This makes the RTX 6000 Ada easier to cool in multi-GPU workstation configurations (e.g., a 4-card setup in a single tower).
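
As a quick illustration of why the lower TDP matters in a multi-GPU tower, the arithmetic for a four-card build can be sketched as follows. It uses only the 300W and 450W figures quoted above and ignores CPU, storage, and power-supply headroom, which a real build would need to add.

```python
# GPU-only power budget for a multi-card workstation.
# Assumption: each card draws its full rated TDP at the same time.

def gpu_power_budget_w(card_count, tdp_w):
    """Total GPU board power in watts for `card_count` identical cards."""
    return card_count * tdp_w


four_ada = gpu_power_budget_w(4, 300)   # RTX 6000 Ada TDP from the text
four_4090 = gpu_power_budget_w(4, 450)  # RTX 4090 figure from the text

print(f"4x RTX 6000 Ada: {four_ada} W")
print(f"4x RTX 4090:     {four_4090} W")
print(f"Difference:      {four_4090 - four_ada} W")
```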

When evaluating NVIDIA GPUs for AI development, practitioners often compare the 6000 Ada to its predecessor (the RTX A6000) or the consumer 4090. The 6000 Ada offers roughly 2x the performance of the previous-gen A6000 and doubles the VRAM of the 4090. While the 4090 uses faster GDDR6X memory, its 24GB capacity cannot hold a 70B-parameter model on a single card even at Q4, which is where the 6000 Ada’s 48GB makes the difference.

The RTX 6000 Ada is the "gold standard" for running 30B-class models at 8-bit precision and 70B-class models at Q4 on a single card. (At FP16, a 30B model needs about 60GB for weights alone, which exceeds the card’s capacity.) Because LLM weights must reside entirely in VRAM for acceptable performance, the 48GB buffer dictates exactly what you can deploy.
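
A rough sizing sketch for the 48GB budget follows. It estimates weight memory as parameters times bits per weight, and KV cache memory from the usual per-token formula (keys and values, per layer, per KV head). The architecture numbers in the example (80 layers, 8 KV heads, head dimension 128) are those of Llama 2 70B and are used here only as an illustrative assumption; the nominal 4 bits per weight ignores the per-block overhead that real quantization formats carry, so actual margins are tighter.

```python
# Back-of-the-envelope VRAM sizing: weights plus KV cache.
# Assumptions: decimal GB, nominal bits per weight, FP16 KV cache by default.

CARD_VRAM_GB = 48.0


def weight_gb(params_billion, bits_per_weight):
    """Weight memory in GB: 1e9 params * bits / 8 bytes, expressed in GB."""
    return params_billion * bits_per_weight / 8


def kv_cache_gb(layers, kv_heads, head_dim, context_tokens, bytes_per_value=2):
    """KV cache in GB for one sequence: 2 tensors (K and V) per layer."""
    total_bytes = 2 * layers * kv_heads * head_dim * context_tokens * bytes_per_value
    return total_bytes / 1e9


for label, params_b, bits in [
    ("30B @ FP16", 30, 16),
    ("30B @ 8-bit", 30, 8),
    ("70B @ 4-bit", 70, 4),
]:
    print(f"{label:12s} weights: {weight_gb(params_b, bits):5.1f} GB")

# Llama-2-70B-style attention layout at a 32K context (illustrative).
kv = kv_cache_gb(layers=80, kv_heads=8, head_dim=128, context_tokens=32768)
total = weight_gb(70, 4) + kv
print(f"70B @ 4-bit + 32K KV cache: {total:.1f} GB of {CARD_VRAM_GB:.0f} GB")
```

The same two functions can be reused for other models by swapping in their layer count and attention layout from the model card.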

With 48GB of VRAM, this is one of the best GPUs for computer vision and multimodal tasks. You can run Stable Diffusion XL or Flux.1 (Dev/Schnell) with large batch sizes for image generation. For video generation models like SVD (Stable Video Diffusion), the 48GB buffer is essential for generating high-resolution frames without Out-of-Memory (OOM) errors.

On optimized backends like vLLM or TensorRT-LLM:

The RTX 6000 Ada is arguably the best AI GPU for agent training and local deployment of agentic workflows. Agents often require multiple models to be resident in memory simultaneously (e.g., a primary LLM for reasoning, a smaller model for tool-calling, and an embedding model for RAG). The 48GB capacity allows developers to host this entire stack on a single GPU, reducing latency between agent steps.
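
Whether a given agent stack fits on one card can be checked with a simple budget. The component names and footprints below are hypothetical placeholders, and the 2GB reserve for framework overhead is an assumption; substitute measured numbers from your own deployment.

```python
# Check whether several resident models fit in a single GPU's VRAM.
# All footprints are hypothetical placeholders, not measurements.

def fits_on_gpu(footprints_gb, capacity_gb=48.0, reserve_gb=2.0):
    """Return (fits, free_gb) after summing all model footprints.

    `reserve_gb` is an assumed allowance for CUDA context and
    framework overhead.
    """
    used = sum(footprints_gb.values())
    free = capacity_gb - reserve_gb - used
    return free >= 0, free


agent_stack = {
    "reasoning_llm": 20.0,    # primary LLM, quantized (hypothetical)
    "tool_caller": 5.0,       # smaller tool-calling model (hypothetical)
    "embedding_model": 1.5,   # embedding model for RAG (hypothetical)
}

ok, free_gb = fits_on_gpu(agent_stack)
print(f"fits: {ok}, remaining for KV cache and batching: {free_gb:.1f} GB")
```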

For startups and enterprise teams, this is the production-ready choice. Unlike consumer cards, the RTX 6000 Ada supports vGPU (Virtual GPU) software, allowing a single card to be partitioned into multiple virtual workstations. This is ideal for teams providing remote access to AI dev environments.

While full parameter fine-tuning of 70B models requires a cluster, the RTX 6000 Ada is perfect for QLoRA or LoRA fine-tuning of 8B to 30B models. It provides the thermal stability and ECC memory required for training runs that last several days.
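
To see why LoRA-style fine-tuning is so much lighter than full fine-tuning, it helps to count trainable parameters. For a weight matrix of shape `d_out x d_in`, a rank-`r` adapter adds two small matrices with `r * (d_in + d_out)` parameters in total. The dimensions below (hidden size 4096, 32 layers, adapters on two square projections per layer) are an illustrative assumption resembling a 7B-class model, not a specific recommendation.

```python
# Count the trainable parameters a LoRA adapter adds.
# Assumption: one rank-r adapter (A: r x d_in, B: d_out x r) per adapted matrix.

def lora_trainable_params(d_in, d_out, rank):
    """Parameters added by a single rank-`rank` LoRA adapter."""
    return rank * (d_in + d_out)


hidden = 4096            # illustrative hidden size
layers = 32              # illustrative layer count
adapted_per_layer = 2    # e.g. two attention projections per layer
rank = 16

per_matrix = lora_trainable_params(hidden, hidden, rank)
total = per_matrix * adapted_per_layer * layers

print(f"per adapted matrix: {per_matrix:,} params")
print(f"total trainable:    {total:,} params")
```

Only these adapter weights need gradients and optimizer state, which is the main reason mid-sized models can be fine-tuned within a 48GB budget while the frozen base weights stay quantized.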

For the "prosumer" running a local LLM, the RTX 6000 Ada is the ultimate upgrade. It eliminates the need for complex "model splitting" across two 3090s or 4090s, which often introduces PCIe bottlenecking and increased power complexity.

The A100 is a data-center GPU designed for SXM or PCIe server racks. While the A100 80GB has more VRAM and higher bandwidth (HBM2e), the RTX 6000 Ada is significantly easier to deploy in a standard office environment because it is actively cooled (it has a fan). The A100 is passively cooled and requires a high-airflow server chassis. For local workstation use, the 6000 Ada is the practical choice.

Apple’s silicon offers more total memory for significantly less money, which is attractive for running massive 120B+ models. However, the RTX 6000 Ada wins on raw compute throughput and software compatibility. Most AI libraries (CUDA, TensorRT, Triton) are "NVIDIA first." If your workflow depends on specific CUDA kernels or you need the highest possible tokens per second for 70B models, the NVIDIA hardware remains the best AI chip for local deployment.

The AMD Radeon PRO W7900 also offers 48GB of VRAM and is cheaper ($3,999 MSRP at launch). However, the NVIDIA ecosystem (CUDA) is the industry standard. While AMD’s ROCm has made strides, NVIDIA’s TensorRT-LLM and deep integration with frameworks like PyTorch mean the RTX 6000 Ada provides a "plug-and-play" experience that AMD currently cannot match for professional AI development.

Among hardware options for local AI agents in 2025, the NVIDIA RTX 6000 Ada Generation remains at the top of Ada-generation workstation performance. It provides the VRAM necessary for state-of-the-art open-source models while maintaining the reliability and support required by professional engineers.

| Model | Developer | Parameters | Grade | Speed (tok/s) | Memory (GB) |
|---|---|---|---|---|---|
| minimax-m2.5 | MiniMax | 230B (10B active) | SS | 34.0 | 22.7 |
| Mixtral 8x7B Instruct | Mistral AI | 46.7B (12.9B active) | SS | 68.0 | 11.4 |
| Gemma 4 26B-A4B IT | Google | 26B (4B active) | SS | 70.2 | 11.0 |
| Qwen3.6 35B-A3B | Alibaba | 35B (3B active) | SS | 90.6 | 8.5 |
| Qwen3.5-35B-A3B | Alibaba | 35B (3B active) | SS | 90.6 | 8.5 |
| Qwen3.5-122B-A10B | Alibaba | 122B (10B active) | SS | 28.3 | 27.3 |
| | | 8B | SS | 58.0 | 13.3 |
| Qwen3-30B-A3B | Alibaba | 30B (3B active) | SS | 143.5 | 5.4 |
| Llama 2 13B Chat | Meta | 13B | SS | 91.3 | 8.5 |
| Falcon 40B Instruct | Technology Innovation Institute | 40B | SS | 31.7 | 24.4 |
| Qwen3.5-9B | Alibaba | 9B | SS | 31.4 | 24.6 |
| | | 9B | AA | 128.5 | 6.0 |
| | | 8B | AA | 136.4 | 5.7 |
| Qwen3-235B-A22B | Alibaba | 235B (22B active) | AA | 21.3 | 36.3 |
| Gemma 4 E4B IT | Google | 4B | AA | 111.7 | 6.9 |
| Gemma 3 4B IT | Google | 4B | AA | 111.7 | 6.9 |
| Mistral 7B Instruct | Mistral AI | 7B | AA | 120.8 | 6.4 |
| Llama 2 7B Chat | Meta | 7B | AA | 161.4 | 4.8 |
| Gemma 4 E2B IT | Google | 2B | AA | 208.4 | 3.7 |
| Mistral Small 3 24B | Mistral AI | 24B | BB | 19.8 | 39.0 |
| LLaMA 65B | Meta | 65B | BB | 19.7 | 39.3 |
| Llama 2 70B Chat | Meta | 70B | BB | 17.8 | 43.4 |
| Mixtral 8x22B Instruct | Mistral AI | 141B (39B active) | BB | 17.7 | 43.6 |
| Qwen 3.5 Omni | Alibaba | 397B (17B active) | BB | 17.1 | 45.2 |
| | | 70B | BB | 16.9 | 45.7 |
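
One way to read the listed figures: multiplying each row's speed by its memory footprint gives nearly the same number every time. The sketch below does that for a handful of rows copied from the listing. The interpretation, that the speeds look like bandwidth-based estimates rather than independent benchmarks, is an inference from the numbers and is not stated by the source.

```python
# Implied memory throughput per row: tok/s * GB read per token.
# Rows are copied from the listing; the interpretation is an assumption.

PEAK_BANDWIDTH_GB_S = 960.0  # spec-sheet bandwidth quoted earlier in the text

rows = [
    ("Qwen3-30B-A3B", 143.5, 5.4),
    ("Llama 2 7B Chat", 161.4, 4.8),
    ("Mixtral 8x7B Instruct", 68.0, 11.4),
    ("Falcon 40B Instruct", 31.7, 24.4),
    ("Llama 2 70B Chat", 17.8, 43.4),
]

implied = {name: tok_s * gb for name, tok_s, gb in rows}

for name, gb_per_s in implied.items():
    share = gb_per_s / PEAK_BANDWIDTH_GB_S
    print(f"{name:24s} {gb_per_s:6.1f} GB/s implied ({share:.0%} of peak)")
```

If that reading is right, the table is most useful for relative comparisons between models and footprints; measured throughput on a specific backend may differ.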