Specs CalculatorROI CalculatorWorkstation BuilderModel TreemapGap TrackerStatus TrackerTools

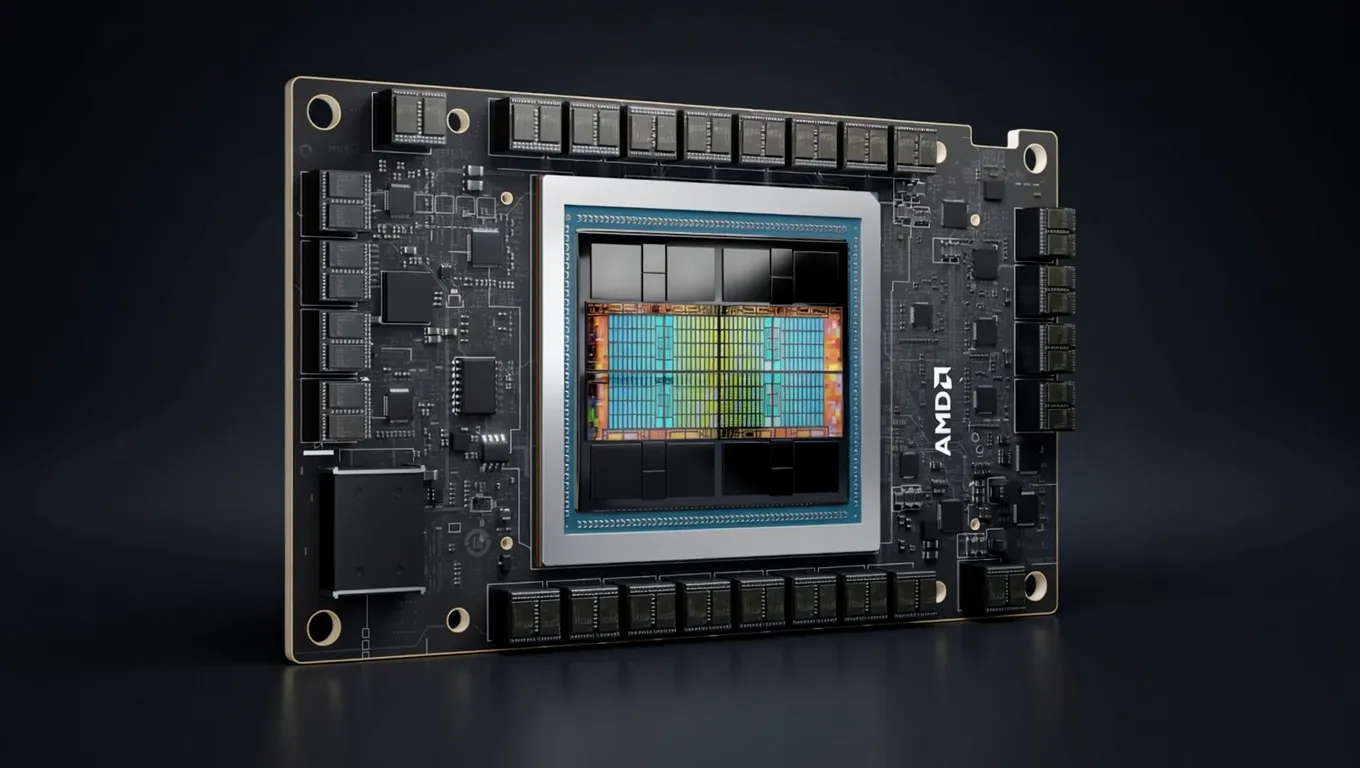

AMD's CDNA 3 data center GPU with 192GB HBM3 and 5.3 TB/s bandwidth. The largest single-GPU memory capacity in its class, ideal for serving large LLMs without multi-GPU parallelism.

Sized for production serving of 70B–200B class models at full or lightly-quantized precision. Overkill for a homelab; right call when the workload pays for itself in token volume. Notably efficient for its compute class — strong perf-per-watt makes it a natural pick for always-on inference.

Generated from this product’s spec sheet. Editor reviews refine it over time.

The AMD Instinct MI300X is a high-performance data center GPU designed specifically to challenge the dominance of the NVIDIA H100 in generative AI and large language model (LLM) workloads. Built on AMD’s CDNA 3 architecture, the MI300X is a "chiplet" masterpiece, utilizing 3D packaging to integrate 153 billion transistors. It is currently the most capable hardware for practitioners who need to run massive models on the fewest number of GPUs possible.

While typically deployed in OAM (OCP Accelerator Module) form factors within 8-way server nodes, the MI300X is increasingly relevant for specialized AI engineering teams and high-end labs. Its primary value proposition is simple: it offers the largest single-GPU memory capacity in its class. For those building agentic workflows or serving production-grade LLMs, the MI300X provides a viable, high-bandwidth alternative to the CUDA ecosystem, supported by the maturing ROCm 6.x software stack.

When evaluating the AMD Instinct MI300X for AI, the standout metric is the 192GB of HBM3 memory. This is 2.4x the capacity of an NVIDIA H100 (80GB), fundamentally changing the economics of LLM inference.

For AI inference, memory bandwidth is the primary bottleneck for token generation speed. The MI300X delivers 5.3 TB/s of memory bandwidth via an 8192-bit memory bus. In practical terms, this allows for incredibly high throughput during the "decoding" phase of LLM generation. When comparing NVIDIA vs AMD for AI inference, the MI300X often leads in raw throughput for high-batch scenarios because it can fit larger models entirely in HBM, avoiding the latency penalties of multi-GPU communication.

On the compute side, the MI300X is a powerhouse:

With 304 Compute Units and 1,216 Matrix Cores, the MI300X is optimized for the matrix multiplication operations that define transformer architectures. While its 750W TDP is significant, the power-to-performance ratio is competitive when you consider that a single MI300X can often replace two smaller GPUs to run the same model.

The 192GB VRAM capacity makes the MI300X the best hardware for local AI agents 2025 and large-scale model serving. It eliminates the need for complex tensor parallelism for most state-of-the-art models.

The MI300X is ideal for long-context tasks (e.g., 128k context windows). Because the KV cache consumes VRAM linearly with context length, the 192GB buffer allows developers to process entire codebases or long legal documents without running out of memory (OOM).

The AMD Instinct MI300X for AI is not a consumer card; it is a specialized tool for production-grade environments and advanced AI development.

For startups and enterprises building LLM-backed products, the MI300X offers a lower Total Cost of Ownership (TCO) per request. By fitting larger models on fewer GPUs, you reduce the complexity of your orchestration layer and decrease the latency introduced by inter-GPU communication.

The MI300X is arguably the best AI GPU for agent training and fine-tuning (LoRA/QLoRA). The 192GB VRAM allows for larger batch sizes and longer sequences during training, which is critical for teaching agents to handle multi-step reasoning and tool-use scripts.

While "local" usually implies a desktop, high-end workstations equipped with OAM-to-PCIe bridges or specialized liquid-cooled chassis allow researchers to run a 192GB GPU for AI development without relying on cloud providers. This is essential for teams working with sensitive data who require the highest possible privacy and zero data leakage.

The MI300X exists in a competitive landscape dominated by NVIDIA, but it carves out a specific niche based on memory density.

The H100 is the industry standard, benefiting from the mature CUDA ecosystem. However, the MI300X offers more than double the VRAM (192GB vs 80GB) and significantly higher memory bandwidth (5.3 TB/s vs 3.35 TB/s). If your workload is memory-bound—which most LLM inference is—the MI300X often provides better price-to-performance. The trade-off is the software; while ROCm 6.x has made massive strides in supporting PyTorch and vLLM, it still requires more configuration than the "plug-and-play" nature of CUDA.

The H200 is NVIDIA's response to the MI300X, featuring 141GB of HBM3e. Even against the H200, the MI300X maintains a 51GB VRAM advantage. For practitioners running the largest 70B+ parameter models at high precision, the MI300X remains the best AI chip for local deployment when VRAM capacity is the deciding factor.

A common concern for AMD GPUs for AI development is software compatibility. As of 2024/2025, the ROCm 6.x stack provides native support for:

For engineers building agentic workflows, the MI300X represents the pinnacle of high-throughput, high-memory hardware, enabling the next generation of local AI deployment without the "VRAM tax" typically associated with entry-level enterprise hardware.

Nvidia Nemotron 3 SuperNVIDIA | 120B(12B active) | SS | 41.2 tok/s | 103.5 GB | |

GLM-5Z.ai | 744B(40B active) | SS | 48.6 tok/s | 87.7 GB | |

GLM-5.1Z.ai | 744B(40B active) | SS | 48.6 tok/s | 87.7 GB | |

Kimi K2.6Moonshot AI | 1000B(32B active) | SS | 49.5 tok/s | 86.2 GB | |

Kimi K2 Instruct 0905Moonshot AI | 1000B(32B active) | SS | 50.4 tok/s | 84.6 GB | |

Kimi K2 ThinkingMoonshot AI | 1000B(32B active) | SS | 50.4 tok/s | 84.6 GB | |

Kimi K2.5Moonshot AI | 1000B(32B active) | SS | 50.4 tok/s | 84.6 GB | |

DeepSeek-V4-FlashDeepSeek | 284B(13B active) | SS | 38.1 tok/s | 112.0 GB | |

| Ad | |||||

| 70B | SS | 37.8 tok/s | 112.8 GB | ||

| 70B | SS | 37.8 tok/s | 112.8 GB | ||

GLM-4.6Z.ai | 355B(32B active) | SS | 60.7 tok/s | 70.3 GB | |

Gemma 4 31B ITGoogle | 31B | SS | 52.1 tok/s | 82.0 GB | |

Falcon 180BTechnology Innovation Institute | 180B | SS | 39.6 tok/s | 107.8 GB | |

Mistral Large 3 675BMistral AI | 675B(41B active) | SS | 64.4 tok/s | 66.3 GB | |

Qwen3.6-27BAlibaba | 27B | SS | 58.6 tok/s | 72.8 GB | |

Qwen3.5-27BAlibaba | 27B | SS | 58.6 tok/s | 72.8 GB | |

| Ad | |||||

DeepSeek-V3DeepSeek | 671B(37B active) | SS | 71.3 tok/s | 59.8 GB | |

DeepSeek-R1DeepSeek | 671B(37B active) | SS | 71.3 tok/s | 59.8 GB | |

DeepSeek-V3.1DeepSeek | 671B(37B active) | SS | 71.3 tok/s | 59.8 GB | |

DeepSeek-V3.2DeepSeek | 685B(37B active) | SS | 71.3 tok/s | 59.8 GB | |

GLM-4.7Z.ai | 358B(32B active) | SS | 81.1 tok/s | 52.6 GB | |

GLM-4.5Z.ai | 355B(32B active) | SS | 82.3 tok/s | 51.8 GB | |

Kimi K2 InstructMoonshot AI | 1000B(32B active) | SS | 82.3 tok/s | 51.8 GB | |

Qwen3.5-397B-A17BAlibaba | 397B(17B active) | SS | 92.7 tok/s | 46.0 GB | |

| Ad | |||||

| 70B | SS | 93.4 tok/s | 45.7 GB | ||