
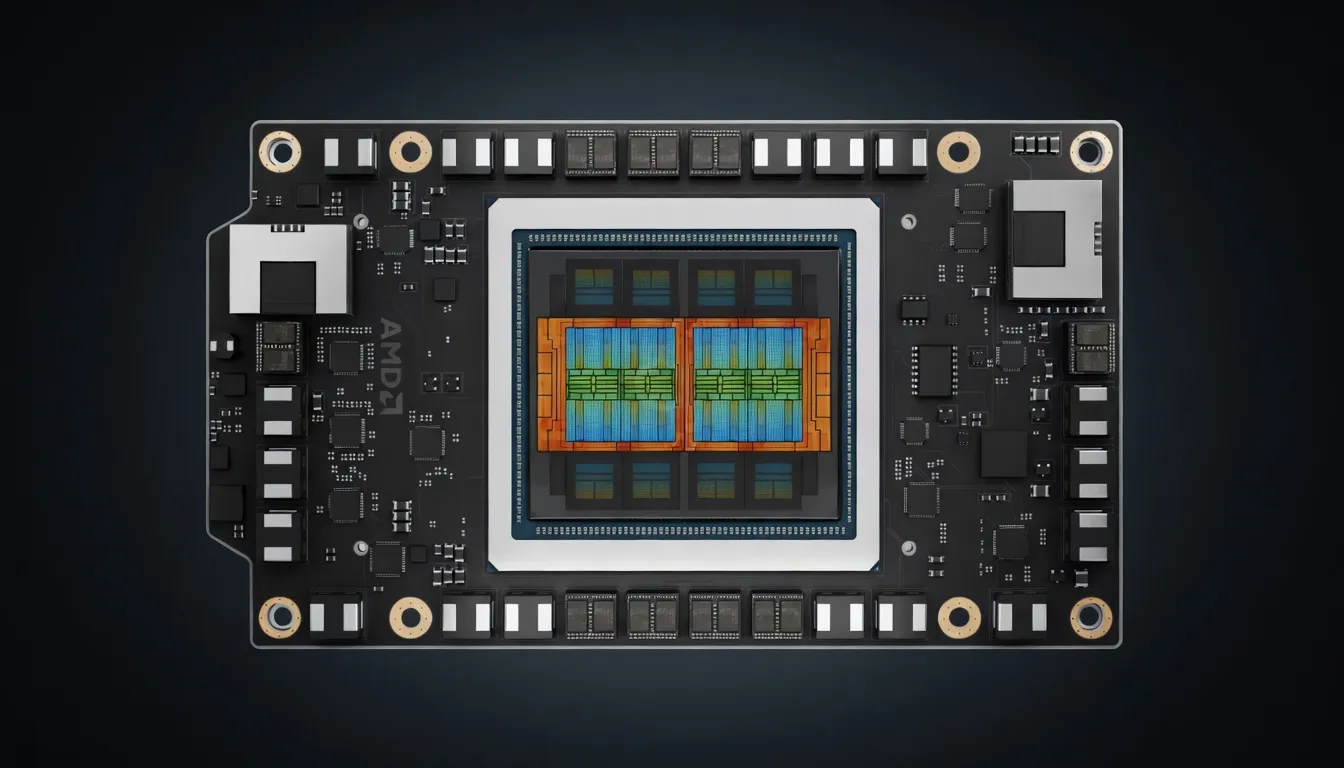

AMD's CDNA 4 data center GPU with 288GB of HBM3e and 8 TB/s of memory bandwidth. Native FP4/FP6 support delivers 20+ PFLOPS for AI inference. AMD's answer to the NVIDIA B200.

Sized for production serving of 70B–200B class models at full or lightly quantized precision. Overkill for a homelab; the right call when the workload pays for itself in token volume. With a 1400W TDP and a liquid-cooling requirement, it belongs in a data center rack, not a desktop.

Generated from this product’s spec sheet. Editor reviews refine it over time.

The AMD Instinct MI355X represents a generational leap in data center silicon, specifically engineered to address the memory wall and compute bottlenecks of the transformer era. Built on the CDNA 4 architecture, the MI355X is AMD's direct response to the NVIDIA Blackwell B200, designed to provide the memory headroom and high-throughput compute required for frontier-scale model serving.

Unlike previous generations that focused primarily on FP16/BF16 performance, the MI355X introduces native hardware support for the FP4 and FP6 data types. This architectural shift allows for up to a 35x theoretical performance increase over the MI300X in specific inference tasks, delivering over 20 PFLOPS of FP4 compute. For AI engineers and infrastructure teams, this translates to significantly higher tokens per second (TPS) and lower latency for long-context windows. This is a pure data center GPU: it uses the OAM (OCP Accelerator Module) form factor and requires liquid cooling, which makes it a production choice for clusters rather than a workstation-class device.

When evaluating the AMD Instinct MI355X for AI, the primary metrics are memory capacity, bandwidth, and the efficiency of low-precision arithmetic. The MI355X excels in all three, specifically targeting the bottlenecks found in the KV cache of large language models.

The MI355X features 288GB of HBM3e memory. Against competing NVIDIA parts for AI inference, this capacity is a critical differentiator: a single MI355X can host a 400B+ parameter model at 4-bit quantization without splitting the model across multiple GPUs. With 8,000 GB/s (8 TB/s) of memory bandwidth, the MI355X shortens the memory-bound phase of token generation, so the GPU's compute units are not starved for data during autoregressive decoding.
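The capacity claim above comes down to simple arithmetic. The following is a minimal sketch, assuming weights dominate the footprint and that some fraction of HBM is held back for the KV cache and runtime buffers. The `reserve_fraction` value and both function names are illustrative assumptions, not figures from the spec sheet.

```python
HBM_CAPACITY_GB = 288  # memory capacity stated in the spec above


def weight_footprint_gb(params_billion: float, bits_per_param: float) -> float:
    """Approximate weight memory in GB (1 GB = 1e9 bytes).

    Ignores KV cache, activations, and quantization metadata such as scales.
    """
    return params_billion * bits_per_param / 8


def fits_on_one_gpu(params_billion: float, bits_per_param: float,
                    reserve_fraction: float = 0.10) -> bool:
    """True if the weights fit after holding back a (hypothetical) reserve."""
    usable_gb = HBM_CAPACITY_GB * (1 - reserve_fraction)
    return weight_footprint_gb(params_billion, bits_per_param) <= usable_gb


if __name__ == "__main__":
    for bits in (16, 8, 4):
        gb = weight_footprint_gb(400, bits)
        print(f"400B @ {bits}-bit: {gb:.0f} GB, fits: {fits_on_one_gpu(400, bits)}")
```

Under these assumptions a 400B model needs about 200 GB at 4-bit, which fits in 288GB, while the same model at 8-bit or 16-bit does not.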

The CDNA 4 architecture provides a significant uplift in throughput:

The TDP of 1400W is a clear indicator of the MI355X's performance tier. This power density necessitates advanced liquid cooling solutions. For teams building AI-powered applications, this means the MI355X is best suited for dedicated AI servers or colocation environments rather than standard office racks.
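For facility planning, the TDP figure scales directly with module count. This is a rough sketch only: the 8-module node size and the `overhead_fraction` parameter (host CPUs, fans, pumps, power conversion losses) are hypothetical inputs, not values from the spec sheet.

```python
TDP_W = 1400  # per-module TDP stated above


def node_power_kw(modules_per_node: int = 8,
                  overhead_fraction: float = 0.0) -> float:
    """Estimated node power in kW.

    overhead_fraction is a hypothetical allowance for everything that is
    not a GPU module; leave it at 0.0 for the GPU-only figure.
    """
    return modules_per_node * TDP_W * (1 + overhead_fraction) / 1000


if __name__ == "__main__":
    print(f"GPU-only, 8 modules: {node_power_kw(8):.1f} kW")
    print(f"With 30% overhead:   {node_power_kw(8, 0.30):.1f} kW")
```

Eight modules draw 11.2 kW for the GPUs alone at TDP, which is why this class of hardware tends to live in colocation facilities rather than office racks.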

The 288GB capacity changes the math for model deployment. On the MI355X, frontier-scale models that previously required an 8-GPU cluster can now be served with significantly fewer modules.
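A back-of-the-envelope module count illustrates the point. The sketch below considers weights only and assumes 90% of each module's memory is usable; the `usable_fraction` default and the 671B example size are illustrative assumptions. Real deployments also budget for the KV cache and often round up to a convenient tensor-parallel size.

```python
import math


def min_modules(params_billion: float, bits_per_param: float,
                capacity_gb: float, usable_fraction: float = 0.9) -> int:
    """Smallest number of modules whose combined usable memory holds the weights."""
    weights_gb = params_billion * bits_per_param / 8
    return math.ceil(weights_gb / (capacity_gb * usable_fraction))


if __name__ == "__main__":
    # Hypothetical 671B-parameter model served at 16-bit precision.
    print("192 GB modules:", min_modules(671, 16, 192))
    print("288 GB modules:", min_modules(671, 16, 288))
```

With these inputs, the 192GB generation needs 8 modules and the 288GB MI355X needs 6. Dropping to 4-bit weights reduces the count further.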

The sweet spot for the MI355X is FP6 or FP4 native quantization. While FP16/BF16 remains the standard for training and fine-tuning, the MI355X’s native support for lower precisions means you can run models at 4-bit with minimal perplexity degradation while doubling or quadrupling the throughput compared to 8-bit or 16-bit deployments.
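The doubling and quadrupling follows from a bandwidth-bound view of decoding: each generated token requires reading the active weights once, so halving the bytes per parameter roughly doubles the ceiling. This is a minimal sketch of that upper bound for a single stream at batch size 1. It ignores KV cache reads, compute limits, and kernel efficiency, so measured throughput will be lower.

```python
BANDWIDTH_GB_S = 8000  # 8 TB/s, from the spec above


def decode_ceiling_tok_s(active_params_billion: float, bits_per_param: float,
                         bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Upper bound on single-stream decode speed when weight reads dominate."""
    gb_read_per_token = active_params_billion * bits_per_param / 8
    return bandwidth_gb_s / gb_read_per_token


if __name__ == "__main__":
    # Dense 70B model used purely as an illustration.
    for bits in (16, 8, 4):
        print(f"{bits}-bit: {decode_ceiling_tok_s(70, bits):.1f} tok/s ceiling")
```

For mixture-of-experts models, pass the active parameter count rather than the total, since only the routed experts are read for each token.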

The 8 TB/s bandwidth is particularly beneficial for multimodal models (like GPT-4o style vision-language models) and long-context RAG (Retrieval-Augmented Generation) workflows. The MI355X can ingest massive document sets into the KV cache and process them with lower time-to-first-token (TTFT) than previous generation hardware.
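To see why long contexts lean on memory capacity, it helps to estimate the KV cache size. The sketch below uses the standard formula for attention with grouped key/value heads. The layer count, head count, and head dimension in the example are a hypothetical 70B-class configuration, not the parameters of any specific model in this article.

```python
def kv_cache_gb(num_layers: int, num_kv_heads: int, head_dim: int,
                context_tokens: int, bytes_per_value: int = 2,
                batch_size: int = 1) -> float:
    """KV cache size in GB (1 GB = 1e9 bytes).

    The leading factor of 2 accounts for storing both a key and a value
    vector per token, per KV head, per layer.
    """
    total_bytes = (2 * num_layers * num_kv_heads * head_dim
                   * context_tokens * bytes_per_value * batch_size)
    return total_bytes / 1e9


if __name__ == "__main__":
    # Hypothetical config: 80 layers, 8 KV heads, head_dim 128, 16-bit cache.
    size = kv_cache_gb(80, 8, 128, context_tokens=128_000)
    print(f"128k-token context: {size:.2f} GB per sequence")
```

At roughly 42 GB per 128k-token sequence under these assumptions, a handful of concurrent long-context requests can consume as much memory as the weights themselves.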

The MI355X is not a consumer card; it is designed for the infrastructure that powers AI agents and large-scale inference services.

The MI355X enters a highly competitive landscape, primarily measured against NVIDIA’s H200 and B200.

Compared to its predecessor, the MI300X, the MI355X is a large upgrade in memory capacity (288GB vs 192GB) and introduces the FP4/FP6 compute engines that the MI300X lacked. For practitioners, this means the MI355X is roughly 2-3x faster for inference-heavy workloads that use modern quantization techniques.

The MI355X is currently one of the best AMD GPUs for running AI models on your own infrastructure at enterprise or research scale. If your workload demands the highest possible memory capacity for frontier-scale models and you are prepared for liquid-cooled infrastructure, the MI355X is the leading non-NVIDIA alternative for high-throughput AI production.

| Model | Developer | Parameters | Rating | Speed | Memory |
|---|---|---|---|---|---|
| Llama 4 Maverick | Meta | 400B (17B active) | SS | 44.0 tok/s | 146.4 GB |
| | | 70B | SS | 57.1 tok/s | 112.8 GB |
| | | 70B | SS | 57.1 tok/s | 112.8 GB |
| DeepSeek-V4-Flash | DeepSeek | 284B (13B active) | SS | 57.5 tok/s | 112.0 GB |
| Nvidia Nemotron 3 Super | NVIDIA | 120B (12B active) | SS | 62.2 tok/s | 103.5 GB |
| GLM-5 | Z.ai | 744B (40B active) | SS | 73.4 tok/s | 87.7 GB |
| GLM-5.1 | Z.ai | 744B (40B active) | SS | 73.4 tok/s | 87.7 GB |
| Kimi K2.6 | Moonshot AI | 1000B (32B active) | SS | 74.7 tok/s | 86.2 GB |
| Kimi K2 Instruct 0905 | Moonshot AI | 1000B (32B active) | SS | 76.1 tok/s | 84.6 GB |
| Kimi K2 Thinking | Moonshot AI | 1000B (32B active) | SS | 76.1 tok/s | 84.6 GB |
| Kimi K2.5 | Moonshot AI | 1000B (32B active) | SS | 76.1 tok/s | 84.6 GB |
| Falcon 180B | Technology Innovation Institute | 180B | SS | 59.7 tok/s | 107.8 GB |
| GLM-4.6 | Z.ai | 355B (32B active) | SS | 91.7 tok/s | 70.3 GB |
| Mistral Large 2 | Mistral AI | 123B | SS | 32.6 tok/s | 197.8 GB |
| Mistral Large 3 675B | Mistral AI | 675B (41B active) | SS | 97.2 tok/s | 66.3 GB |
| Gemma 4 31B IT | Google | 31B | SS | 78.6 tok/s | 82.0 GB |
| DeepSeek-V3 | DeepSeek | 671B (37B active) | SS | 107.6 tok/s | 59.8 GB |
| DeepSeek-R1 | DeepSeek | 671B (37B active) | SS | 107.6 tok/s | 59.8 GB |
| DeepSeek-V3.1 | DeepSeek | 671B (37B active) | SS | 107.6 tok/s | 59.8 GB |
| DeepSeek-V3.2 | DeepSeek | 685B (37B active) | SS | 107.6 tok/s | 59.8 GB |
| Qwen3.6-27B | Alibaba | 27B | SS | 88.5 tok/s | 72.8 GB |
| Qwen3.5-27B | Alibaba | 27B | SS | 88.5 tok/s | 72.8 GB |
| GLM-4.7 | Z.ai | 358B (32B active) | SS | 122.4 tok/s | 52.6 GB |
| GLM-4.5 | Z.ai | 355B (32B active) | SS | 124.3 tok/s | 51.8 GB |
| Kimi K2 Instruct | Moonshot AI | 1000B (32B active) | SS | 124.3 tok/s | 51.8 GB |