
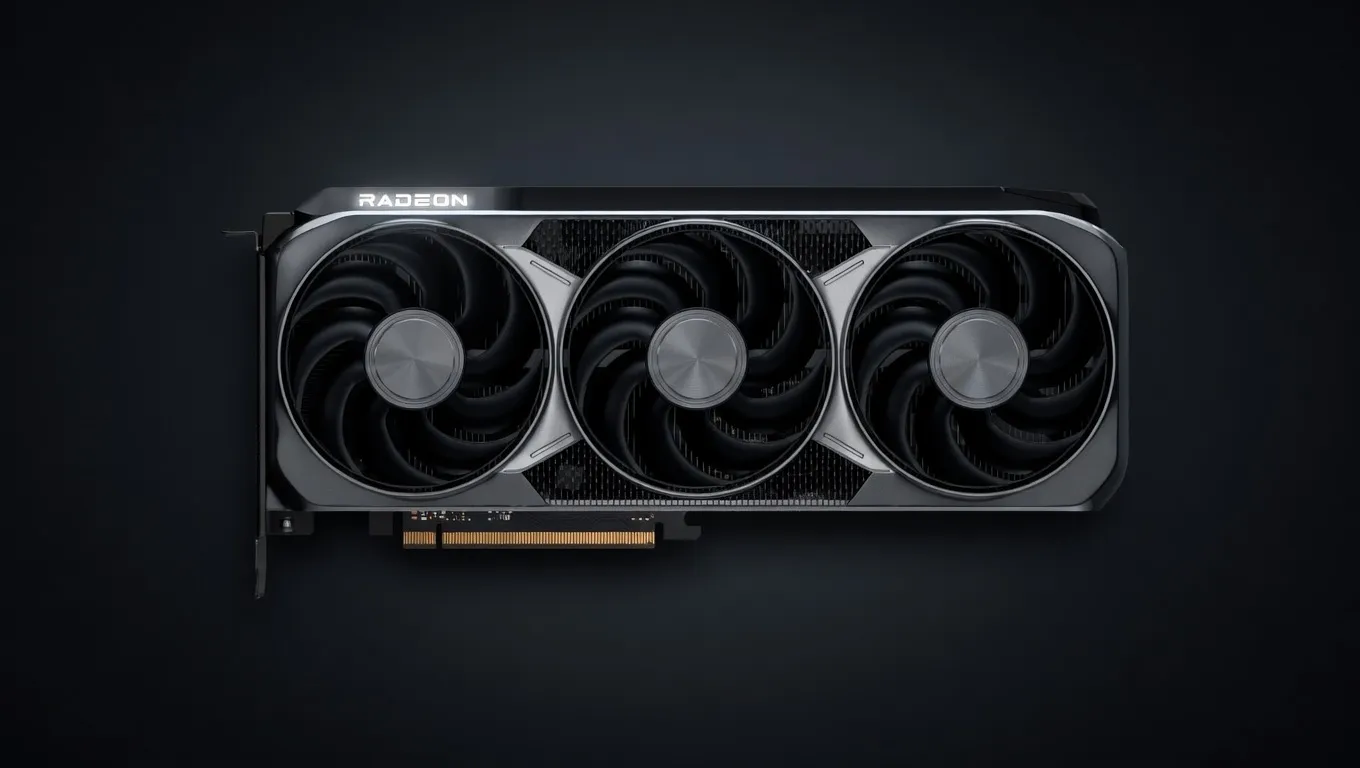

AMD's RDNA 4 flagship with 16GB GDDR6, 64 compute units, and dramatically improved ray tracing. Competes with RTX 5070 Ti at a lower price, with more VRAM than the RTX 5070.

Good balance for indie developers running local copilots and chat. 30B+ models are reachable but only with aggressive quantization and short context.

Generated from this product’s spec sheet. Editor reviews refine it over time.

The AMD Radeon RX 9070 XT marks a strategic shift for AMD with its RDNA 4 architecture, prioritizing throughput per watt and competitive VRAM pricing for the mid-to-high-end market. Built on TSMC’s 4nm process, the flagship Navi 48 die is designed to bridge the gap between consumer gaming hardware and entry-level AI workstations. For practitioners, the RX 9070 XT is a high-performance alternative to NVIDIA’s RTX 50-series that targets the price-to-VRAM ratio critical for local LLM deployments.

Positioned as a premium consumer GPU, the RX 9070 XT competes directly with the RTX 5070 Ti. While it lacks the proprietary CUDA ecosystem, its 16GB of GDDR6 memory on a 256-bit bus makes it a formidable contender for AI inference. For teams building agentic workflows, or developers looking for hardware to run local AI agents in 2025, the card offers 48.7 TFLOPS of FP32 compute and 1557 peak AI TOPS (AMD’s headline figure, quoted for INT4 with structured sparsity), a large leap over previous RDNA generations.
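The 48.7 TFLOPS figure can be roughly reproduced from the shader count and clock speed. This is a minimal sketch that assumes 64 FP32 lanes per compute unit, fused multiply-add counted as 2 FLOPs, RDNA dual-issue doubling the per-clock rate, and a boost clock of about 2.97 GHz; none of these inputs appear on the spec sheet above, so treat them as assumptions.

```python
def peak_fp32_tflops(compute_units: int, boost_ghz: float,
                     lanes_per_cu: int = 64, dual_issue: bool = True) -> float:
    """Peak vector FP32 throughput in TFLOPS.

    Assumptions (not from the spec sheet): 64 FP32 lanes per CU, each
    fused multiply-add counts as 2 FLOPs, and dual-issue doubles the
    number of FMAs each lane can retire per clock.
    """
    flops_per_lane_per_clock = 2 * (2 if dual_issue else 1)  # FMA = 2 FLOPs
    lanes = compute_units * lanes_per_cu
    gflops = lanes * flops_per_lane_per_clock * boost_ghz  # GHz -> GFLOPS
    return gflops / 1000.0  # GFLOPS -> TFLOPS


# 64 CUs at an assumed ~2.97 GHz boost clock
print(round(peak_fp32_tflops(64, 2.97), 1))
```

Like every vendor peak figure, this is a theoretical ceiling; sustained throughput in real kernels is lower.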

When evaluating the RX 9070 XT for AI, the most critical inference metrics are memory bandwidth and VRAM capacity. The 16GB GDDR6 buffer on a 256-bit bus provides 640 GB/s of bandwidth. For LLMs, bandwidth sets the ceiling on tokens per second (TPS) during the generation phase, because the model weights must be streamed from VRAM to the compute units for every token produced.
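The bandwidth argument above can be turned into a back-of-the-envelope estimate. This sketch assumes a 20 Gbps GDDR6 data rate (consistent with 640 GB/s on a 256-bit bus) and treats decoding as purely memory-bound; the `efficiency` derating factor is a hypothetical knob, not a measured value.

```python
def memory_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: pins * Gbit/s per pin / 8 bits per byte."""
    return bus_width_bits * data_rate_gbps / 8


def decode_tps_ceiling(bandwidth_gbs: float, bytes_read_per_token_gb: float,
                       efficiency: float = 1.0) -> float:
    """Upper bound on generation speed for a memory-bound decoder.

    Each generated token streams (roughly) all active weights once, so
    tokens/s <= bandwidth / bytes read per token. `efficiency` is a
    hypothetical derating for real-world overheads such as KV-cache reads.
    """
    if bytes_read_per_token_gb <= 0:
        raise ValueError("bytes_read_per_token_gb must be positive")
    return efficiency * bandwidth_gbs / bytes_read_per_token_gb


bw = memory_bandwidth_gbs(256, 20.0)  # assumed 20 Gbps GDDR6
print(bw)
print(round(decode_tps_ceiling(bw, 4.8), 1))  # ceiling for a 4.8 GB model
```

For a 4.8 GB model (the footprint listed for Llama 2 7B Chat below), the ceiling works out to about 133 tok/s; measured speeds should land below it. For mixture-of-experts models, pass only the bytes of the active parameters read per token.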

The 128 dedicated AI accelerators (two per compute unit) mark a significant refinement in the RDNA 4 architecture. These units are optimized for the matrix math at the heart of transformer-based models. RDNA 3 introduced these accelerators; RDNA 4 improves their instruction set for sparsity and lower-precision formats, which are essential for efficiently running 7B-parameter models at Q4 and larger quantized variants. The 304W board power is relatively high and calls for a robust power supply, but performance per watt during active inference remains competitive with modern prosumer cards.
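Why lower-precision formats matter becomes clear from the weight footprint alone. This sketch assumes about 4.5 bits per weight for a Q4-class quantization (4-bit formats store scales alongside the weights, so their effective size sits somewhat above 4.0 bits); the exact figure varies by format.

```python
# Approximate bits per weight. The Q4 value is an assumption, not a
# property of any one specific quantization format.
BITS_PER_WEIGHT = {
    "FP16": 16.0,
    "INT8": 8.0,
    "Q4 (approx.)": 4.5,
}


def weight_gb(params_billion: float, bits_per_weight: float) -> float:
    """Weight footprint in GB (1 GB = 1e9 bytes): params * bits / 8."""
    return params_billion * bits_per_weight / 8


for fmt, bits in BITS_PER_WEIGHT.items():
    print(f"7B @ {fmt:<12} ~{weight_gb(7, bits):.1f} GB")
```

At FP16, a 7B model's weights alone take about 14 GB of the card's 16GB, while a Q4-class variant needs roughly 4 GB, leaving room for the KV cache and larger models.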

With 16GB of VRAM, the RX 9070 XT supports a versatile range of LLM deployments. The card hits the "sweet spot" for modern open-source models, fitting most quantized 7B to 14B parameter models entirely in VRAM without offloading layers to system RAM (which would severely degrade performance).
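A quick way to sanity-check the "7B to 14B" claim is to compare weight size plus an overhead budget against 16GB. This is a minimal sketch: the 2.5 GB `overhead_gb` allowance for the KV cache, activations, and runtime buffers is a hypothetical default, and real usage depends on context length and the inference backend.

```python
VRAM_GB = 16.0  # RX 9070 XT capacity, from the spec sheet


def fits_in_vram(params_billion: float, bits_per_weight: float,
                 overhead_gb: float = 2.5, vram_gb: float = VRAM_GB) -> bool:
    """True if weights plus a fixed overhead budget fit without offloading.

    `overhead_gb` is a hypothetical allowance for KV cache, activations,
    and runtime buffers; adjust it for your context length and backend.
    """
    weights_gb = params_billion * bits_per_weight / 8
    return weights_gb + overhead_gb <= vram_gb


for params in (7, 14, 32):
    for bits in (4.5, 8.0, 16.0):
        status = "fits" if fits_in_vram(params, bits) else "needs offload"
        print(f"{params}B @ {bits:>4} bits/weight: {status}")
```

Under these assumptions, 7B and 14B models fit at roughly 4-bit precision, while a dense 32B model does not fit even at that level without offloading.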

The RX 9070 XT is tagged as Best for Computer Vision due to its high FP32 throughput. It is well suited to running Stable Diffusion XL (SDXL) and Flux.1 (schnell) locally, although Flux's 12B-parameter model needs FP8 or quantized weights (or partial CPU offloading) to fit in 16GB. For vision-language models (VLMs) like LLaVA, the 16GB of VRAM leaves enough headroom to hold both the vision encoder and the language backbone at once, enabling fast image-to-text analysis.

The RX 9070 XT is not a "set and forget" enterprise card; it rewards developers who are willing to manage their own drivers and software stack.

For those building local AI agents, the RX 9070 XT provides enough VRAM to keep an LLM resident in memory while leaving headroom for supporting components such as a vector database (like Chroma or Qdrant) and the agentic framework (LangChain/AutoGPT). High INT8 throughput mainly speeds up prompt processing, which keeps latency down when an agent feeds long tool outputs and retrieved context back into the model.

If your goal is to run a private, local chatbot without subscription fees or data leakage, this is one of the best AMD GPUs for running AI models locally at the sub-$600 price point. It offers a significant upgrade over 8GB or 12GB cards, which often struggle with the context window requirements of modern models.
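The context-window pressure on 8GB and 12GB cards comes from the KV cache, which grows linearly with context length. This sketch computes its size; the 8B-class configuration (32 layers, 8 KV heads of dimension 128, FP16 cache) is a hypothetical example, so substitute the values from your model's config file.

```python
def kv_cache_gb(n_layers: int, n_kv_heads: int, head_dim: int,
                context_tokens: int, bytes_per_elem: int = 2) -> float:
    """KV-cache size in GB: keys and values (x2) for every layer and token."""
    total_bytes = (2 * n_layers * n_kv_heads * head_dim
                   * context_tokens * bytes_per_elem)
    return total_bytes / 1e9


# Hypothetical 8B-class configuration with an FP16 (2-byte) cache.
for ctx in (4_096, 32_768, 131_072):
    print(f"{ctx:>7} tokens: {kv_cache_gb(32, 8, 128, ctx):.2f} GB")
```

With this configuration, a 32K-token context adds roughly 4.3 GB on top of the weights, which is enough to push a quantized 8B model past an 8GB card's capacity while still fitting comfortably in 16GB.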

AMD’s ROCm (Radeon Open Compute) software stack is the primary gateway to AI development on AMD GPUs. The RX 9070 XT is supported in recent ROCm 6.x releases, allowing developers to run PyTorch and TensorFlow workloads natively. While CUDA remains the industry standard, the gap is closing for inference tasks, making this card a viable choice for Linux-based AI workstations.
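After installing a ROCm build of PyTorch, a quick check confirms whether the card is visible. ROCm builds expose AMD GPUs through the familiar `torch.cuda` API and set `torch.version.hip` to the HIP version string; the helper name `describe_torch_backend` is hypothetical, and the function degrades gracefully when PyTorch is not installed.

```python
def describe_torch_backend() -> dict:
    """Report which GPU backend the installed PyTorch build can use.

    ROCm builds of PyTorch reuse the torch.cuda namespace for AMD GPUs and
    set torch.version.hip; on CUDA and CPU-only builds it is None.
    """
    try:
        import torch
    except ImportError:
        return {"backend": "unavailable", "detail": "PyTorch is not installed"}

    # getattr guards against builds where the attribute is missing entirely
    hip_version = getattr(torch.version, "hip", None)
    if torch.cuda.is_available():
        return {
            "backend": "rocm" if hip_version else "cuda",
            "device": torch.cuda.get_device_name(0),
            "hip_version": hip_version,
        }
    return {"backend": "cpu", "hip_version": hip_version}


print(describe_torch_backend())
```

On a working ROCm setup, the `backend` field should read `rocm`; `cpu` together with a non-empty `hip_version` usually points to a driver or device-permission problem rather than a wrong PyTorch build.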

When choosing between the RX 9070 XT and the RTX 5070, the decision hinges on the trade-off between software ecosystem and raw hardware value.

For agent training and fine-tuning, NVIDIA still holds the lead thanks to deeper integration with optimized libraries like FlashAttention-2 and bitsandbytes. For AI inference, however, GGUF and ExLlamaV2 formats are now widely supported on AMD through ROCm and Vulkan backends, which makes the RX 9070 XT a high-value powerhouse for the 2025 hardware cycle.

| Model | Developer | Parameters | Grade | Speed | VRAM |
|---|---|---|---|---|---|
| Mixtral 8x7B Instruct | Mistral AI | 46.7B (12.9B active) | S | 45.3 tok/s | 11.4 GB |
| Gemma 4 26B-A4B IT | Google | 26B (4B active) | S | 46.8 tok/s | 11.0 GB |
| Qwen3.6 35B-A3B | Alibaba | 35B (3B active) | S | 60.4 tok/s | 8.5 GB |
| Qwen3.5-35B-A3B | Alibaba | 35B (3B active) | S | 60.4 tok/s | 8.5 GB |
| Llama 2 13B Chat | Meta | 13B | S | 60.9 tok/s | 8.5 GB |
| Qwen3-30B-A3B | Alibaba | 30B (3B active) | S | 95.7 tok/s | 5.4 GB |
| | | 9B | S | 85.7 tok/s | 6.0 GB |
| | | 8B | S | 91.0 tok/s | 5.7 GB |
| Gemma 4 E4B IT | Google | 4B | S | 74.5 tok/s | 6.9 GB |
| Gemma 3 4B IT | Google | 4B | S | 74.5 tok/s | 6.9 GB |
| Mistral 7B Instruct | Mistral AI | 7B | S | 80.6 tok/s | 6.4 GB |
| Llama 2 7B Chat | Meta | 7B | A | 107.6 tok/s | 4.8 GB |
| | | 8B | A | 38.6 tok/s | 13.3 GB |
| Gemma 4 E2B IT | Google | 2B | A | 138.9 tok/s | 3.7 GB |
| Qwen3.5-9B | Alibaba | 9B | F | 20.9 tok/s | 24.6 GB |
| Mistral Small 3 24B | Mistral AI | 24B | F | 13.2 tok/s | 39.0 GB |
| Qwen3.6-27B | Alibaba | 27B | F | 7.1 tok/s | 72.8 GB |
| Gemma 3 27B IT | Google | 27B | F | 11.8 tok/s | 43.8 GB |
| Qwen3.5-27B | Alibaba | 27B | F | 7.1 tok/s | 72.8 GB |
| Gemma 4 31B IT | Google | 31B | F | 6.3 tok/s | 82.0 GB |
| Qwen3-32B | Alibaba | 32.8B | F | 9.6 tok/s | 53.9 GB |
| Falcon 40B Instruct | Technology Innovation Institute | 40B | F | 21.2 tok/s | 24.4 GB |
| LLaMA 65B | Meta | 65B | F | 13.1 tok/s | 39.3 GB |
| Llama 2 70B Chat | Meta | 70B | F | 11.9 tok/s | 43.4 GB |
| | | 70B | F | 11.3 tok/s | 45.7 GB |