
Intel's third-generation AI accelerator, competing with the NVIDIA H100 for data center AI training and inference. It offers strong price/performance with an open software stack.

Sized for production serving of 70B–200B class models at full or lightly quantized precision. Overkill for a homelab; the right call when the workload pays for itself in token volume. High TDP: plan for adequate cooling and a beefy power supply, and skip it for compact desktops.

Generated from this product’s spec sheet. Editor reviews refine it over time.

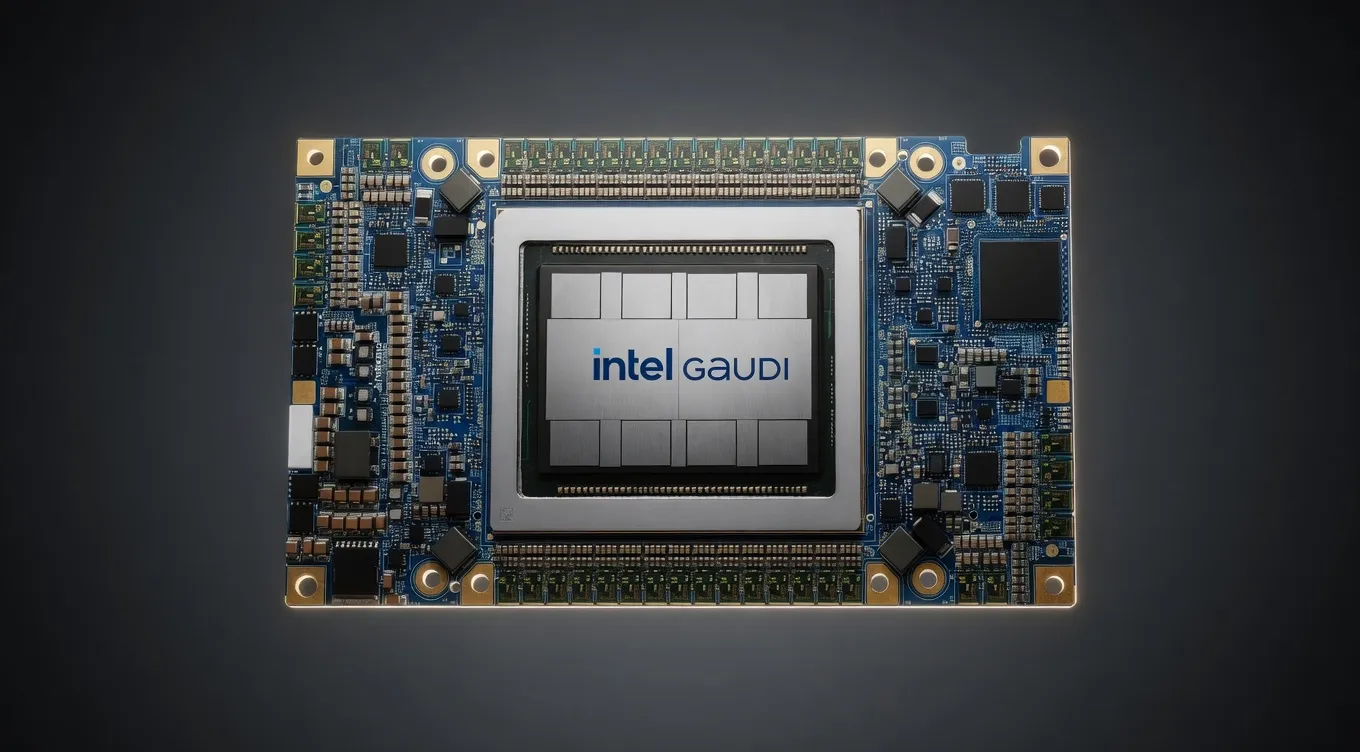

The Intel Gaudi 3 AI Accelerator represents Intel’s most aggressive move into the high-end data center market, designed specifically to challenge the dominance of the NVIDIA H100. Built on a 5nm TSMC process, the Gaudi 3 is a purpose-built AI architecture that prioritizes massive memory throughput and integrated networking. Unlike general-purpose GPUs, Gaudi 3 is engineered from the silicon up for the heavy matrix multiplication required by modern transformer architectures.

For engineers and teams building agentic workflows, the Gaudi 3 offers a compelling alternative to the CUDA ecosystem. It is positioned as a production-ready accelerator for both large-scale training and high-throughput inference. By focusing on open standards and a PyTorch-native software stack, Intel is targeting practitioners who need enterprise-grade performance without the "green tax" associated with the market leader. Whether deployed in the OAM (OCP Accelerator Module) form factor for dense clusters or as a PCIe card for more flexible integration, the Gaudi 3 is designed to handle demanding LLM and multimodal workloads.

When evaluating the Intel Gaudi 3 for AI workloads, the most critical metrics are memory capacity and bandwidth. The Gaudi 3 has 128GB of HBM2e memory, enough to load large models without aggressive quantization. Its memory bandwidth of 3700 GB/s (3.7 TB/s) addresses the primary bottleneck in LLM inference: during token-by-token decoding, each step must stream the model weights from memory to the compute cores.
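The bandwidth argument can be turned into a rough ceiling. This is a minimal sketch, assuming a single sequence (batch size 1) where reading the weights dominates each decoding step: tokens per second cannot exceed memory bandwidth divided by the bytes of weights read per token. KV-cache traffic, activations, and kernel efficiency are ignored, so real throughput lands below this bound. For mixture-of-experts models only the active parameters are read per token, so passing the active count is a rough approximation. The function name is illustrative.

```python
def decode_tokens_per_second_ceiling(
    params_billion: float, bytes_per_param: float, bandwidth_gb_s: float = 3700.0
) -> float:
    """Upper bound on single-sequence decode speed when weight reads dominate."""
    # Every decode step streams all (active) weights from HBM once:
    # billions of params * bytes per param = GB read per generated token.
    weight_gb = params_billion * bytes_per_param
    return bandwidth_gb_s / weight_gb


if __name__ == "__main__":
    # 70B dense model: BF16 stores 2 bytes per parameter, FP8 stores 1.
    for label, nbytes in (("BF16", 2.0), ("FP8", 1.0)):
        print(label, round(decode_tokens_per_second_ceiling(70, nbytes), 1))
```

For a 70B dense model this gives roughly 26.4 tok/s at BF16 and 52.9 tok/s at FP8, which is why shrinking the weights is the first lever for single-stream latency.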

The architectural leap from Gaudi 2 to Gaudi 3 is significant. The chip delivers a peak of 1,835 TFLOPS in both BF16 and FP8, providing the raw compute necessary for both pre-training and fine-tuning.
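Putting the compute and bandwidth figures side by side gives a simple roofline view. The sketch below is a back-of-envelope model, not a performance prediction: it computes the ridge point (FLOPs that must be performed per byte read before compute becomes the limit) and the decode batch size at which the weight-matrix multiplications would reach it. It assumes about 2 FLOPs per parameter per sequence per decode step and ignores attention and KV-cache traffic; the function names are hypothetical.

```python
PEAK_TFLOPS = 1835.0       # peak BF16/FP8 matrix throughput quoted for Gaudi 3
HBM_BANDWIDTH_TB_S = 3.7   # 3700 GB/s of HBM2e bandwidth


def ridge_point_flops_per_byte(
    peak_tflops: float = PEAK_TFLOPS, bandwidth_tb_s: float = HBM_BANDWIDTH_TB_S
) -> float:
    """FLOPs per byte of memory traffic at which compute becomes the bottleneck."""
    return (peak_tflops * 1e12) / (bandwidth_tb_s * 1e12)


def decode_batch_for_compute_bound(bytes_per_param: float) -> float:
    """Batch size where a decode step's weight GEMMs hit the ridge point.

    Each step reads every weight once and does ~2 FLOPs (multiply + add)
    per weight per sequence, so intensity ~= 2 * batch / bytes_per_param.
    """
    return ridge_point_flops_per_byte() * bytes_per_param / 2.0


if __name__ == "__main__":
    print(f"ridge point: {ridge_point_flops_per_byte():.0f} FLOPs/byte")
    print(f"FP8 crossover batch: {decode_batch_for_compute_bound(1.0):.0f}")
```

With these figures the ridge point is about 496 FLOPs/byte, so under this model decoding with FP8 weights stays bandwidth-bound until batches of roughly 248 concurrent sequences.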

The inclusion of 24 integrated 200Gb Ethernet ports is a strategic advantage for Intel's AI hardware. It allows scaling across multiple nodes using standard Ethernet with RDMA over Converged Ethernet (RoCE) rather than proprietary interconnects. This makes the Gaudi 3 particularly effective for running large models split across multiple cards, where inter-chip communication often becomes the primary performance ceiling.
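To see why per-card network bandwidth matters, a standard cost model for ring all-reduce (used to synchronize gradients or tensor-parallel partial results) says each card sends about 2(n-1)/n times the message size. The sketch below applies that model. The aggregate figure simply multiplies out the 24 × 200Gb ports; the 50% efficiency, the 1GB message, and the function name are hypothetical, and per-hop latency and protocol overhead are ignored.

```python
# 24 ports x 200 Gb/s = 4800 Gb/s, i.e. 600 GB/s per direction per card.
AGGREGATE_GB_S = 24 * 200 / 8


def ring_allreduce_seconds(message_bytes: float, n_cards: int, per_card_gb_s: float) -> float:
    """Bandwidth term of the ring all-reduce cost model (latency ignored)."""
    if n_cards < 2:
        return 0.0  # nothing to exchange on a single card
    # Reduce-scatter plus all-gather: each card sends 2*(n-1)/n of the message.
    bytes_sent = 2 * (n_cards - 1) / n_cards * message_bytes
    return bytes_sent / (per_card_gb_s * 1e9)


if __name__ == "__main__":
    # Hypothetical: 1 GB of gradients across an 8-card node at 50% link efficiency.
    t = ring_allreduce_seconds(1e9, 8, 0.5 * AGGREGATE_GB_S)
    print(f"{t * 1e3:.2f} ms")
```

Under these assumptions the exchange takes about 5.83 ms; halving the usable bandwidth doubles it, which is why the interconnect can cap multi-card scaling.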

The 128GB capacity is a significant threshold for local deployment and private cloud environments. With 128GB on a single card, the Gaudi 3 can host a wide array of state-of-the-art models at high precision.
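A quick way to check what fits is to multiply parameter count by bytes per parameter. The sketch below is a minimal estimate, assuming 2 bytes per parameter for BF16, 1 for FP8 and 0.5 for 4-bit formats, plus a hypothetical 16GB reserve for KV cache, activations, and runtime buffers. It treats GB as 10^9 bytes and ignores quantization metadata such as scales; the names are illustrative.

```python
CARD_HBM_GB = 128.0
BYTES_PER_PARAM = {"BF16": 2.0, "FP8": 1.0, "INT4": 0.5}


def weights_gb(params_billion: float, precision: str) -> float:
    """Weight footprint in GB (1e9 params * bytes/param = GB)."""
    return params_billion * BYTES_PER_PARAM[precision]


def fits_on_one_card(params_billion: float, precision: str, reserve_gb: float = 16.0) -> bool:
    """True if the weights plus a (hypothetical) working reserve fit in 128GB."""
    return weights_gb(params_billion, precision) + reserve_gb <= CARD_HBM_GB


if __name__ == "__main__":
    for params, precision in [(32, "BF16"), (70, "BF16"), (70, "FP8")]:
        print(params, precision, weights_gb(params, precision), fits_on_one_card(params, precision))
```

A 70B model at BF16 (140GB of weights) does not fit on one card, while the same model at FP8 (70GB) leaves room for KV cache.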

A single Gaudi 3 accelerator can comfortably fit the following:

For the largest models, such as the full DeepSeek-R1 or Llama 3.1 405B, the Gaudi 3 scales out across 8-card nodes. With 3700 GB/s of memory bandwidth per card and integrated RoCE networking, practitioners can expect modest communication overhead when distributing a model across multiple accelerators. The 128GB per card means a standard 8-card node provides 1TB (1,024GB) of HBM2e, enough to hold Llama 3.1 405B at BF16 (about 810GB of weights) or DeepSeek-R1 at FP8 (about 671GB), with the remainder available for KV cache to serve many concurrent users.
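The node-level arithmetic can be checked the same way. This sketch assumes weights are split evenly across the 8 cards (as in tensor parallelism) and reports the HBM left on each card for KV cache and activations. Real deployments replicate some tensors and reserve runtime buffers, so the headroom is optimistic. Parameter counts are the models' published totals; the function name is illustrative.

```python
NODE_CARDS = 8
CARD_HBM_GB = 128.0


def per_card_headroom_gb(params_billion: float, bytes_per_param: float, cards: int = NODE_CARDS) -> float:
    """HBM (GB) left per card after an even split of the weights; negative means it won't fit."""
    weights_per_card = params_billion * bytes_per_param / cards
    return CARD_HBM_GB - weights_per_card


if __name__ == "__main__":
    cases = [
        ("Llama 3.1 405B @ BF16", 405, 2.0),
        ("DeepSeek-R1 671B @ FP8", 671, 1.0),
        ("DeepSeek-R1 671B @ BF16", 671, 2.0),
    ]
    for name, params, nbytes in cases:
        print(f"{name}: {per_card_headroom_gb(params, nbytes):+.2f} GB headroom per card")
```

Under these assumptions Llama 3.1 405B at BF16 leaves about 27GB per card and DeepSeek-R1 at FP8 about 44GB, while DeepSeek-R1 at BF16 (about 1,342GB of weights) exceeds the node's 1,024GB.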

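For most production inference scenarios, running models like Llama 3 70B at FP8 provides the best balance. The Gaudi 3 is optimized for FP8, which typically preserves near-lossless accuracy. Because FP8 weights take half the bytes of 16-bit formats, FP8 can roughly double throughput in memory-bound decoding; peak matrix compute, by contrast, is the same 1,835 TFLOPS for FP8 and BF16, so the gain comes from reduced memory traffic rather than extra compute.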
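To make the FP8 trade-off concrete, the pure-Python sketch below simulates per-tensor FP8 quantization. Values are scaled so the largest magnitude maps to 448 (the largest finite value of the E4M3 variant of FP8), rounded to the nearest representable E4M3 number, and scaled back. It is a simulation for intuition only: it assumes per-tensor scaling and the E4M3 format, and it is not Intel's Gaudi FP8 recipe, which may use different scaling granularity and calibration. All names are hypothetical.

```python
import math

E4M3_MAX = 448.0           # largest finite E4M3 value (1.75 * 2**8)
E4M3_MIN_NORMAL_EXP = -6   # smallest normal value is 2**-6
E4M3_MANTISSA_BITS = 3


def round_to_e4m3(x: float) -> float:
    """Round x to the nearest E4M3 value, saturating at +/-448 (ties to even)."""
    if x == 0.0 or math.isnan(x):
        return x
    sign = -1.0 if x < 0 else 1.0
    a = min(abs(x), E4M3_MAX)
    _, e = math.frexp(a)                              # a = m * 2**e, 0.5 <= m < 1
    exp = max(e - 1, E4M3_MIN_NORMAL_EXP)             # below 2**-6, spacing is fixed (subnormals)
    step = math.ldexp(1.0, exp - E4M3_MANTISSA_BITS)  # gap between neighbouring values
    return sign * min(round(a / step) * step, E4M3_MAX)


def quantize_per_tensor(values: list[float]) -> tuple[list[float], float]:
    """Map max |value| to E4M3_MAX, round each value, return (fp8_values, scale)."""
    amax = max(abs(v) for v in values)
    scale = amax / E4M3_MAX if amax > 0 else 1.0
    return [round_to_e4m3(v / scale) for v in values], scale


def dequantize(fp8_values: list[float], scale: float) -> list[float]:
    return [q * scale for q in fp8_values]


if __name__ == "__main__":
    weights = [0.02, -0.5, 1.3, -2.7, 0.0009]
    q, s = quantize_per_tensor(weights)
    for w, r in zip(weights, dequantize(q, s)):
        print(f"{w:+.4f} -> {r:+.4f}")
```

For values in the normal range, E4M3's 3 mantissa bits bound the relative rounding error at 1/16; scaling the tensor keeps values out of the subnormal range and away from saturation, which is what keeps the accuracy loss small.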

The Intel Gaudi 3 is not a consumer gaming card; it is a specialized tool for those building the next generation of AI services.

For organizations deploying local AI agents in 2025, the Gaudi 3 is a top-tier choice for inference-as-a-service. Its high VRAM capacity allows for large batch sizes, which is critical for maximizing "tokens per dollar" in a production environment. If you are building an agentic workflow that requires multiple LLM calls in parallel, the Gaudi 3's throughput ensures the pipeline doesn't stall.
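Batch size on a single card is bounded by how much KV cache fits next to the weights. This is a minimal sketch, assuming Llama 3 70B's published attention layout (80 layers, 8 key/value heads under grouped-query attention, head dimension 128), FP8 weights occupying 70GB, and a hypothetical 8GB of runtime overhead, which leaves 50GB for KV cache. It ignores paging fragmentation and activations; the function names are illustrative.

```python
def kv_cache_bytes_per_token(layers: int, kv_heads: int, head_dim: int, bytes_per_elem: float) -> float:
    """Bytes of K and V (factor 2) stored per token across all layers."""
    return 2 * layers * kv_heads * head_dim * bytes_per_elem


def max_concurrent_sequences(free_gb: float, context_tokens: int, bytes_per_token: float) -> int:
    """How many full-length sequences' KV caches fit in free_gb (GB = 1e9 bytes)."""
    return int(free_gb * 1e9 // (context_tokens * bytes_per_token))


if __name__ == "__main__":
    free_gb = 128 - 70 - 8  # HBM - FP8 weights - hypothetical overhead
    for label, elem_bytes in (("BF16 KV", 2), ("FP8 KV", 1)):
        per_token = kv_cache_bytes_per_token(80, 8, 128, elem_bytes)
        print(label, max_concurrent_sequences(free_gb, 8192, per_token))
```

With 8K-token contexts this gives 18 concurrent sequences with a BF16 KV cache and 37 with an FP8 KV cache, showing how memory capacity translates directly into batch size.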

The Intel Gaudi SDK integrates directly with PyTorch, so most Hugging Face-based workflows require minimal code changes. This makes it a strong choice for teams who want to move from prototyping to production without rewriting their entire compute stack.
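As an illustration of how small the change can be, the sketch below moves a toy PyTorch training step onto a Gaudi card. It assumes the Intel Gaudi PyTorch bridge (the `habana_frameworks` package) is installed and uses the lazy-execution pattern of calling `htcore.mark_step()` after the backward pass and after the optimizer step. Newer software releases also offer eager and `torch.compile` modes where that call is not needed, so check the documentation for your version. The `pick_device` helper and the toy model are hypothetical; `run_demo` requires PyTorch and falls back to CPU when the Gaudi stack is absent.

```python
import importlib.util


def pick_device() -> str:
    """Return "hpu" when the Intel Gaudi PyTorch bridge is installed, else "cpu"."""
    # Only checks that the package is importable; it does not probe hardware.
    if importlib.util.find_spec("habana_frameworks") is not None:
        return "hpu"
    return "cpu"


def run_demo() -> None:
    """One toy forward/backward/optimizer step (requires PyTorch)."""
    import torch

    device_name = pick_device()
    if device_name == "hpu":
        # Importing the bridge registers the "hpu" device with PyTorch.
        import habana_frameworks.torch.core as htcore
    device = torch.device(device_name)

    model = torch.nn.Linear(16, 4).to(device)
    optimizer = torch.optim.SGD(model.parameters(), lr=0.1)
    x = torch.randn(8, 16, device=device)

    loss = model(x).pow(2).mean()
    loss.backward()
    if device_name == "hpu":
        htcore.mark_step()  # lazy mode: execute the accumulated graph
    optimizer.step()
    if device_name == "hpu":
        htcore.mark_step()
    print(device_name, float(loss))
```

Apart from the device string and the `mark_step()` calls, the model, optimizer, and loss code are ordinary PyTorch.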

While much of the focus is on inference, the Gaudi 3 is a formidable training chip. The 128GB of VRAM is particularly useful for fine-tuning models on long-context datasets (e.g., 32k or 128k context windows), where memory consumption scales sharply with sequence length.
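One reason long contexts are memory-hungry is that standard attention, if computed naively, materializes a per-layer score tensor of size batch × heads × sequence × sequence. The sketch below compares that quadratic term with a linear-in-length tensor such as one layer's hidden states. The 64 heads and hidden size of 8192 are hypothetical example dimensions. Fused attention kernels (FlashAttention-style) avoid storing the full score tensor, so this shows the worst case such kernels are designed to avoid, not what an optimized training stack actually allocates.

```python
GIB = 2**30


def naive_attention_scores_gib(seq_len: int, heads: int, batch: int = 1, bytes_per_elem: int = 2) -> float:
    """Size of one layer's materialized (batch, heads, seq, seq) score tensor in GiB."""
    return batch * heads * seq_len * seq_len * bytes_per_elem / GIB


def hidden_states_gib(seq_len: int, hidden: int, batch: int = 1, bytes_per_elem: int = 2) -> float:
    """Size of one layer's (batch, seq, hidden) activation tensor in GiB."""
    return batch * seq_len * hidden * bytes_per_elem / GIB


if __name__ == "__main__":
    for seq_len in (4_096, 32_768, 131_072):
        print(
            f"{seq_len:>7} tokens: scores {naive_attention_scores_gib(seq_len, 64):8.1f} GiB, "
            f"hidden states {hidden_states_gib(seq_len, 8192):5.2f} GiB"
        )
```

Going from 32K to 128K tokens multiplies the naive score tensor by 16 (128 GiB to 2,048 GiB per layer) but the hidden states only by 4.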

The most frequent comparison is Intel Gaudi 3 AI Accelerator vs NVIDIA H100.

Compared to the AMD Instinct MI300X, which offers more VRAM (192GB), the Gaudi 3 focuses on a balanced ratio of compute-to-networking, making it a "leaner" choice for high-speed inference clusters where networking throughput is as important as raw memory capacity.

For those looking for Intel hardware to run AI models locally or in a private cloud, the Gaudi 3 stands as the clear flagship. It eliminates the VRAM bottlenecks that plague consumer-grade hardware, making it the definitive choice for professional-grade AI deployment.

| Model | Developer | Parameters | Rating | Speed | Memory |
|---|---|---|---|---|---|
| GLM-4.6 | Z.ai | 355B (32B active) | SS | 42.4 tok/s | 70.3 GB |
| Mistral Large 3 675B | Mistral AI | 675B (41B active) | SS | 44.9 tok/s | 66.3 GB |
| DeepSeek-V3 | DeepSeek | 671B (37B active) | SS | 49.8 tok/s | 59.8 GB |
| DeepSeek-R1 | DeepSeek | 671B (37B active) | SS | 49.8 tok/s | 59.8 GB |
| DeepSeek-V3.1 | DeepSeek | 671B (37B active) | SS | 49.8 tok/s | 59.8 GB |
| DeepSeek-V3.2 | DeepSeek | 685B (37B active) | SS | 49.8 tok/s | 59.8 GB |
| Qwen3.6-27B | Alibaba | 27B | SS | 40.9 tok/s | 72.8 GB |
| Qwen3.5-27B | Alibaba | 27B | SS | 40.9 tok/s | 72.8 GB |
| GLM-4.7 | Z.ai | 358B (32B active) | SS | 56.6 tok/s | 52.6 GB |
| GLM-4.5 | Z.ai | 355B (32B active) | SS | 57.5 tok/s | 51.8 GB |
| Kimi K2 Instruct | Moonshot AI | 1000B (32B active) | SS | 57.5 tok/s | 51.8 GB |
| Qwen3-32B | Alibaba | 32.8B | SS | 55.2 tok/s | 53.9 GB |
| | | 70B | SS | 65.2 tok/s | 45.7 GB |
| Qwen3.5-397B-A17B | Alibaba | 397B (17B active) | SS | 64.7 tok/s | 46.0 GB |
| Qwen 3.5 Omni | Alibaba | 397B (17B active) | SS | 65.9 tok/s | 45.2 GB |
| Mixtral 8x22B Instruct | Mistral AI | 141B (39B active) | SS | 68.4 tok/s | 43.6 GB |
| Llama 2 70B Chat | Meta | 70B | SS | 68.6 tok/s | 43.4 GB |
| Kimi K2 Instruct 0905 | Moonshot AI | 1000B (32B active) | SS | 35.2 tok/s | 84.6 GB |
| Kimi K2 Thinking | Moonshot AI | 1000B (32B active) | SS | 35.2 tok/s | 84.6 GB |
| Kimi K2.5 | Moonshot AI | 1000B (32B active) | SS | 35.2 tok/s | 84.6 GB |
| Gemma 4 31B IT | Google | 31B | SS | 36.3 tok/s | 82.0 GB |
| Kimi K2.6 | Moonshot AI | 1000B (32B active) | SS | 34.6 tok/s | 86.2 GB |
| Qwen3-235B-A22B | Alibaba | 235B (22B active) | SS | 82.0 tok/s | 36.3 GB |
| Gemma 3 27B IT | Google | 27B | SS | 68.0 tok/s | 43.8 GB |
| GLM-5 | Z.ai | 744B (40B active) | SS | 34.0 tok/s | 87.7 GB |